The 10 Blue Links Are Gone. Here's What Replaced Them.

For 25 years, I've watched the same cycle repeat. A new distribution channel emerges. Early movers figure out the rules. Everyone else catches up too late.

Right now, we're in the early mover window for AI search. Most brands are still playing by the old rules.

When someone asks ChatGPT, Gemini, Claude, or Perplexity for a recommendation, those models don't return a list of links. They return an answer. One answer. Maybe two options, synthesized from everything the model knows.

If your brand isn't in that answer, you don't exist in that moment. Not on page two. Not below the fold. Just gone.

I've spent the last year studying how this actually works in practice. Not the theory. The patterns. Akii's AI Visibility Index for Q4 2025 analyzed over 10,000 prompts across industries and engines. What came back wasn't abstract. It was specific, measurable, and surprisingly consistent.

Here are 12 real patterns we found, along with the framework to reverse-engineer them.

Why Should You Care About Specific AI Answers?

Because understanding why an AI model chose one source over another is the entire game now.

This isn't traditional SEO. AI models don't rank content by keyword density or backlink counts the way Google's classic algorithm did. They learn relationships between entities, infer meaning from context, and reference brands based on how well they understand them and how authoritative the sources behind them are.

If your competitor keeps showing up in ChatGPT recommendations and you don't, that's not luck. It's the result of how their brand is represented across the web: structured, factual, consistent.

By studying real AI answers, we can measure what actually gets rewarded. The black box isn't as black as people think.

What Patterns Actually Emerged?

The Q4 2025 index revealed clear engine-by-engine biases. Each model has its own logic, its own preferences, its own blind spots. But across all of them, two signals kept showing up above everything else: entity consistency and citation quality. Schema implementation was right behind them.

These signals matter more than your domain authority right now. That's a hard pill for a lot of SEO teams to swallow, but the data is clear.

What Did We Learn From ChatGPT?

ChatGPT provided the broadest coverage of all models tested, citing brands in 42% of prompts. That's a massive surface area for visibility. But its ranking logic demands something specific: external authority.

Pattern 1: Broad, Almost Democratic Inclusion

ChatGPT cited the largest variety of brands across our test set. It was surprisingly willing to include mid-market players alongside incumbents. Not always. But often enough to matter.

What does this tell us? ChatGPT rewards external quotability and active community discussion, even for brands that aren't household names. If people are talking about you in places the model can see, you have a shot.

Pattern 2: Inconsistent Citations

Here's where it gets tricky. A recommendation might link to a TechCrunch article in one run, then appear with no source attribution in the next. Same prompt. Different output.

You can't rely on ChatGPT for clean traffic attribution. What you can do is focus on brand understanding and external authority as your primary levers. The mention itself is the win. Don't get hung up on whether it links back to you.

Pattern 3: Authoritative Source Preference

When ChatGPT did cite mid-market brands, the mention almost always correlated with strong recent coverage on authoritative sites. Not the brand's own blog. External outlets.

This is Generative Engine Optimization (GEO) in practice. You need to secure mentions in the high-authority outlets that models ingest. Your own content matters, but what others say about you matters more to these models.

Pattern 4: Sheer Scale

With more than 200 million weekly active users, ChatGPT puts any inclusion in front of a massive audience. Even an uncited mention, where the model recommends you by name without linking anywhere, shapes buyer perception at scale.

Treat every mention as a visibility outcome. Don't dismiss it because there's no click to track.

What Makes Perplexity Different?

Perplexity is the most transparent platform for AI discovery right now. That transparency makes its ranking signals the easiest to trace and reverse-engineer.

If you're trying to understand how AI search works, start here.

Pattern 5: High Citation Quality

In our data, 91% of Perplexity answers carried clickable citations. That's extraordinary compared to other engines. It makes Perplexity the best surface for measuring real impact and tracing referral traffic via UTM tags.

Want proof that AI search can drive measurable results? Perplexity is where you'll find it.
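Tracing that traffic takes a little plumbing on your side. Here's a minimal Python sketch, assuming you log each visit's landing URL and referrer. Perplexity links to whatever URL it found, so a UTM tag only shows up when the cited URL already carries one; otherwise the referrer hostname is the fallback. The domain list and function names are hypothetical, not part of any analytics product's API.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

# Hypothetical domain-to-engine mapping. Check these hostnames against
# the referrers that actually appear in your own analytics.
AI_ENGINE_DOMAINS = {
    "perplexity.ai": "perplexity",
    "chatgpt.com": "chatgpt",
    "gemini.google.com": "gemini",
}


def engine_for_host(host: str) -> Optional[str]:
    """Map a hostname (or a utm_source value) to a known AI engine, if any."""
    host = host.lower()
    for domain, engine in AI_ENGINE_DOMAINS.items():
        if host == domain or host.endswith("." + domain):
            return engine
    return None


def classify_visit(landing_url: str, referrer: str = "") -> str:
    """Attribute a visit to an AI engine: utm_source first, referrer host second."""
    query = parse_qs(urlparse(landing_url).query)
    source = query.get("utm_source", [""])[0].lower()
    # An explicit utm_source wins when it names a known engine or its domain.
    if source in AI_ENGINE_DOMAINS.values():
        return source
    engine = engine_for_host(source) if source else None
    if engine:
        return engine
    # Fall back to the referrer's hostname (subdomains like www. are matched).
    host = urlparse(referrer).hostname or ""
    return engine_for_host(host) or "other"


print(classify_visit("https://example.com/report", "https://www.perplexity.ai/search/abc"))
# prints "perplexity"
```

Checking the UTM tag first means a deliberately tagged link wins over a missing or stripped referrer, which is common when traffic passes through apps.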

Pattern 6: Data-Driven Content Preference

Perplexity's blended source lists drew on media, reviews, and knowledge hubs. But it consistently rewarded brands that invested in data-backed thought leadership.

The action here is straightforward. Publish authoritative, data-backed reports on third-party sites. Not opinion pieces. Not hot takes. Research with numbers that builds external authority.

Pattern 7: Extractable Summaries Win

AI extracts answers the way a human skims. It looks for concise summaries, question-based headings, and clear definitions. Structure your pages that way and you're giving the model exactly what it needs.

Build in concise definitions and TL;DR sections that the model can instantly quote. I call these "quotable canonicals." They're the AI equivalent of a featured snippet, but the stakes are higher because there's only one answer slot.
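One way to check your own pages for this structure is a quick self-audit. The sketch below is a hypothetical heuristic, not how any engine actually scores content: it assumes you've already split a page into sections, flags headings that aren't phrased as questions, and flags opening answers longer than an arbitrary 50-word limit.

```python
from dataclasses import dataclass

# Hypothetical threshold: how long an opening answer can run and still be
# easy to quote verbatim. Tune it for your own content.
MAX_QUOTABLE_WORDS = 50


@dataclass
class Section:
    heading: str
    first_paragraph: str


def audit_sections(sections):
    """Return warnings for sections that are hard to lift as a standalone answer."""
    warnings = []
    for section in sections:
        if not section.heading.rstrip().endswith("?"):
            warnings.append(f"Heading is not phrased as a question: {section.heading!r}")
        word_count = len(section.first_paragraph.split())
        if word_count > MAX_QUOTABLE_WORDS:
            warnings.append(
                f"Opening answer under {section.heading!r} runs {word_count} words"
            )
    return warnings


page = [
    Section("What is a quotable canonical?",
            "A concise, self-contained definition that a model can quote as-is."),
    Section("Features", "word " * 60),  # hypothetical long, unstructured section
]
for warning in audit_sections(page):
    print(warning)
```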

Pattern 8: The FlowBoard Case

This one is worth pausing on. Perplexity linked directly to FlowBoard's remote work report, driving measurable referral traffic. Not to their homepage. Not to a product page. To a specific, data-rich report.

The model rewarded the stacked effect of schema markup, authoritative content, and clean entity data. All working together. That's the playbook.

How Does Gemini Decide What to Show?

Gemini's ranking behavior showed a clear authority bias. It skews toward incumbent brands and those with strong signals in Google's existing system. If you're a challenger brand, this is the hardest engine to crack.

Pattern 9: Entity Alignment Is Everything

Inclusion skewed heavily toward brands with strong Knowledge Graph entries and schema markup. If your brand profile is inconsistent across the web, Gemini hesitates. It won't confidently recommend something it can't clearly identify.

Entity consistency isn't a nice-to-have here. It's a prerequisite.

Pattern 10: Schema Responsiveness

Google AI Overviews, which are often Gemini-powered, averaged 22% inclusion in our data. But they were highly responsive to FAQ and HowTo schema specifically.

Use structured data on your evergreen content. Provide concise, machine-readable answers that the model can extract without guessing. This is Answer Engine Optimization (AEO) at its most practical.
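For reference, here's a minimal sketch of FAQ markup generated in Python. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity`, `acceptedAnswer`, and `text` properties come from Schema.org; the helper function and the sample question are illustrative, not a prescribed implementation.

```python
import json


def faq_page_jsonld(pairs):
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_page_jsonld([
    ("What is a quotable canonical?",
     "A concise, self-contained definition that an AI engine can quote directly."),
])

# Embed the output in the page inside a JSON-LD script tag.
print('<script type="application/ld+json">')
print(json.dumps(faq, indent=2))
print("</script>")
```

Keep each answer short and declarative: the same text you would want a model to quote verbatim.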

Pattern 11: Mentions Without Attribution

Gemini rarely surfaced clickable citations. Salesforce and HubSpot almost always appeared in relevant answers, but attribution was difficult to trace.

Sound familiar? It's the same challenge as ChatGPT, only more pronounced. The strategic response is identical: focus on getting mentioned, and don't make immediate click-through the primary goal. Inclusion validates your brand in the buyer's mind. That has value even without a referral link.

Pattern 12: Platform Preference

Gemini consistently highlighted Google's own modules, like Google Travel, over competitors in relevant queries. This isn't surprising, but it matters.

Brands that align with Google's structured data standards get preferential treatment in Gemini's answers. That's the reality. Work with it.

How Do You Actually Reverse-Engineer This?

Seeing patterns is useful. Acting on them is what matters.

To move from invisible to consistently cited in AI answers, you need to audit and improve your content against the specific signals these models favor. Here's the framework we use at Akii, broken into four evaluation criteria.

Are You Showing Up in Trusted Sources?

These models rely on trusted, third-party sources to validate your brand's expertise. When you reverse-engineer an AI answer, ask this: did the model cite an authoritative external source like TechCrunch, an academic report, or a G2 review? Or did it only reference your own website?

If it's only your site, that's a ceiling. You need to be present in the high-authority outlets that models ingest.

The Akii AI Engage tool systematically educates models about your content by prompting major engines to analyze your optimized pages. It's one of the most direct ways to influence what models know about you.

Is Your Entity Data Clean?

Entity consistency is a prerequisite for being cited confidently. AI models penalize inconsistency. If your brand description says one thing on your website, something slightly different on Crunchbase, and something else on Wikidata, the model gets confused. Confused models don't recommend.

The fix is straightforward but tedious. One unified description. One taxonomy. One boilerplate. Replicated across your website, schema, directories, and knowledge bases.

Akii's AI Brand Audit tracks this directly, correlating entity consistency with the Brand Understanding dimension of your AI Visibility Score.
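If you want a rough read on drift yourself, this sketch compares every pair of brand descriptions with Python's standard-library `difflib.SequenceMatcher` and flags pairs below a similarity threshold. Character-level similarity is a crude stand-in for semantic consistency, and the 0.9 threshold and the sample descriptions are hypothetical.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(text):
    """Lowercase and collapse whitespace so formatting noise doesn't count as drift."""
    return " ".join(text.lower().split())


def consistency_report(descriptions, threshold=0.9):
    """Return (source_a, source_b, similarity) for every pair below the threshold."""
    flagged = []
    for (src_a, text_a), (src_b, text_b) in combinations(descriptions.items(), 2):
        ratio = SequenceMatcher(None, normalize(text_a), normalize(text_b)).ratio()
        if ratio < threshold:
            flagged.append((src_a, src_b, round(ratio, 2)))
    return flagged


# Hypothetical descriptions of one brand, pulled from three places.
descriptions = {
    "website": "FlowBoard is project management software for remote teams.",
    "crunchbase": "FlowBoard is project management software for remote teams.",
    "wikidata": "Collaboration app for startups",
}
for src_a, src_b, ratio in consistency_report(descriptions):
    print(f"{src_a} vs {src_b}: similarity {ratio}")
```

Any flagged pair is a candidate for replacing with your one canonical boilerplate.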

How Often Are You Being Mentioned?

Citation density measures how frequently and favorably your brand appears in AI answers. This is what the AI Visibility Score tracks, along with your inclusion percentage across engines.

Here's what most people miss: AI visibility is volatile. A brand can appear in 30% of answers one week and 15% the next. Continuous monitoring isn't optional. It's the only way to know if your efforts are working or if something changed underneath you.

Tools like the AI Search Tracker and AI Brand Audit provide automated monitoring across ChatGPT, Gemini, and Perplexity. They track inclusion rates and competitive positioning around the clock.
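Under the hood, an inclusion rate is mentions divided by prompts, tracked per engine over time. Here's a minimal sketch, assuming you already have a log of (week, engine, mentioned) results from re-running a fixed prompt set. It measures frequency only, not how favorably the brand was described. The sample data reproduces the 30% to 15% swing described above, and the 10-point alert threshold is an arbitrary choice.

```python
from collections import defaultdict


def inclusion_rates(results):
    """results: iterable of (week, engine, brand_mentioned). Returns {(week, engine): rate}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for week, engine, mentioned in results:
        totals[(week, engine)] += 1
        hits[(week, engine)] += int(mentioned)
    return {key: hits[key] / totals[key] for key in totals}


def flag_swings(rates, threshold=0.10):
    """Flag week-over-week changes in inclusion rate of at least `threshold`."""
    by_engine = defaultdict(list)
    for (week, engine), rate in rates.items():
        by_engine[engine].append((week, rate))
    swings = []
    for engine, series in by_engine.items():
        series.sort()  # chronological order by week
        for (week_a, rate_a), (week_b, rate_b) in zip(series, series[1:]):
            if abs(rate_b - rate_a) >= threshold:
                swings.append((engine, week_a, week_b, round(rate_b - rate_a, 2)))
    return swings


# Hypothetical log: mentioned in 3 of 10 prompts in week 1, 3 of 20 in week 2.
results = [(1, "chatgpt", i < 3) for i in range(10)]
results += [(2, "chatgpt", i < 3) for i in range(20)]
rates = inclusion_rates(results)
print(rates)
print(flag_swings(rates))
```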

Is Your Content Machine-Readable?

AI engines extract answers from content that's structured for machines, not just humans. This is Answer Engine Optimization in its purest form.

Add Schema.org markup for Organization, Product, FAQPage, and HowTo. Provide concise, declarative statements that models can extract without interpretation. The Akii Website Optimizer analyzes up to 50 pages and generates a Schema.org markup package built specifically for AI crawlers.
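Here's a minimal sketch of the Organization piece, assuming Python is generating your markup. The idea is reuse: the same boilerplate feeds the `description` field, and Schema.org's `sameAs` property points to the directory and knowledge-base profiles that should say the same thing. The brand name, description, helper function, and URLs are placeholders.

```python
import json

# One canonical boilerplate, reused everywhere the brand is described.
# This text is a placeholder, not FlowBoard's real description.
BOILERPLATE = "FlowBoard is project management software for remote teams."


def organization_jsonld(name, url, description, same_as):
    """Build a Schema.org Organization object that points to the brand's other profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": list(same_as),
    }


org = organization_jsonld(
    name="FlowBoard",
    url="https://www.example.com",
    description=BOILERPLATE,
    same_as=[
        # Placeholder profile URLs; use your real directory and knowledge-base pages.
        "https://www.crunchbase.com/organization/example",
        "https://www.wikidata.org/wiki/Q-PLACEHOLDER",
    ],
)
print(json.dumps(org, indent=2))
```

Pasting the same `BOILERPLATE` string into each directory profile is what keeps the schema and the external listings in agreement.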

What Does This Look Like in Practice?

The FlowBoard case study is the clearest example of what happens when you stack these practices together.

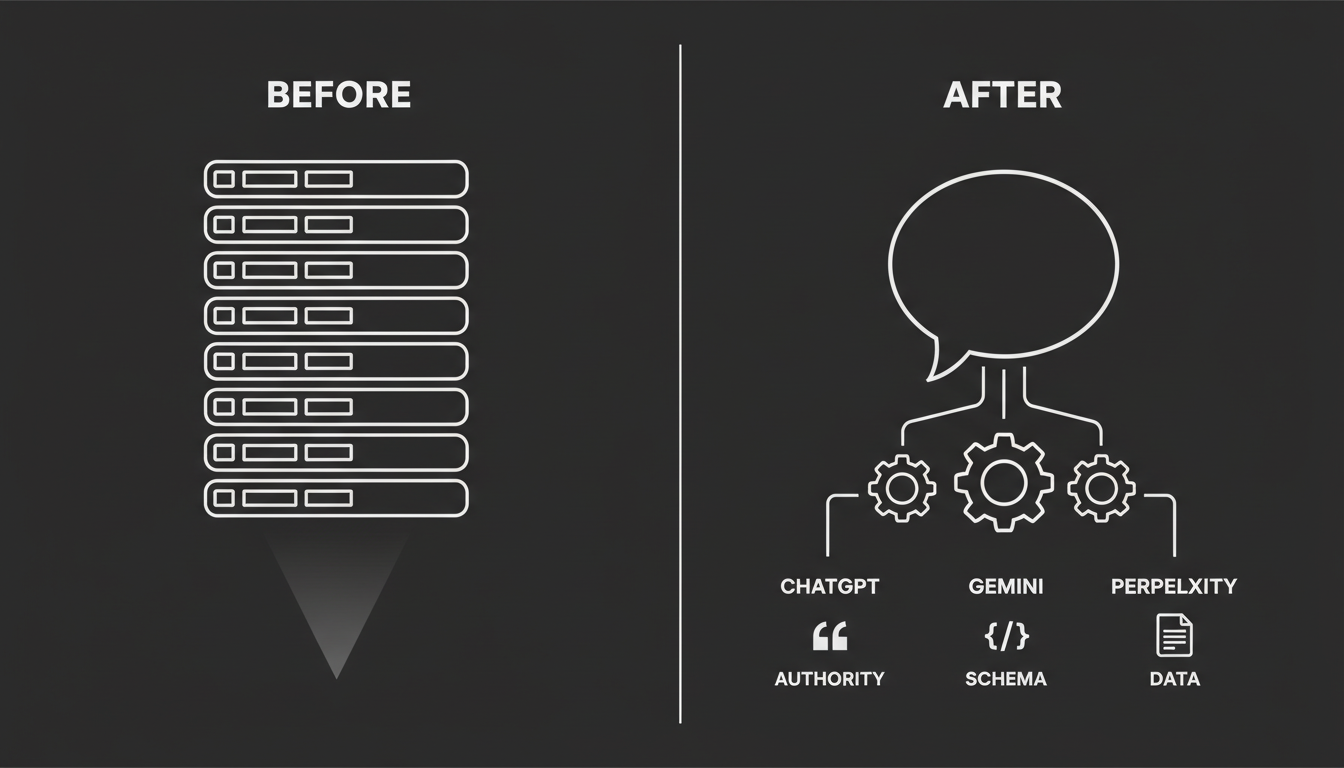

Before

FlowBoard had healthy traditional SEO rankings. Good content. Decent backlinks. But they were invisible in AI answers, showing up in just 9% of prompts.

When someone asked an AI model about project management tools, the engines defaulted to incumbents like Asana and Jira. FlowBoard didn't exist in that conversation.

What They Did

Three things, executed together.

First, they added FAQ schema to 20-plus feature pages. That's the AEO side: making their content machine-readable and extractable.

Second, they published a data-driven industry report that earned external placements on authoritative sites. That's the GEO side: building third-party validation.

Third, they cleaned up their entity data. Inconsistent descriptions on Crunchbase and Wikidata were standardized into one clear, unified profile.

After

Inclusion rose from 9% to 29% across engines. More than triple.
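The "more than triple" figure checks out, and it's worth keeping the relative lift and the absolute gain separate when you report numbers like these:

```latex
\frac{29\%}{9\%} \approx 3.2\times \quad \text{(relative lift)}, \qquad 29\% - 9\% = 20 \text{ percentage points} \quad \text{(absolute gain)}
```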

Perplexity linked directly to the external report, driving measurable referral traffic. Google AI Overviews surfaced the brand for the first time, referencing the structured content on their feature pages.

The gains didn't come from any single tactic. They came from schema, authoritative content, and entity hygiene working together. FlowBoard became both quotable and machine-readable. That combination is what the models reward.

So Where Does This Leave You?

I've been through enough technology shifts to know this: the window between "early adopters figure it out" and "everyone catches up" is shorter than you think. But it's still open right now.

The brands that will own AI search visibility in 2026 are building their entity profiles, publishing data-backed content, and setting up structured data today. Not next quarter. Today.

The patterns are clear. The framework is practical. The only question is whether you'll act on it before your competitors do.

If you want to see where you stand right now, get your free AI Visibility Score and find out exactly how models like Gemini, ChatGPT, and Claude perceive your brand. It takes minutes, and it'll tell you more about your actual discoverability than any traditional SEO audit can.

The rules changed. The playbook is here. Time to use it.