Your Website Looks Fine to Humans. AI Systems Can't See Half of It.

I keep running into the same assumption when I talk to founders and marketing leads. They've put real money into their website. Good design. Solid copy. Fast hosting. So they assume AI systems can read and understand it just as well as a human visitor can.

That assumption is wrong. And it's costing them visibility they don't even know they're losing.

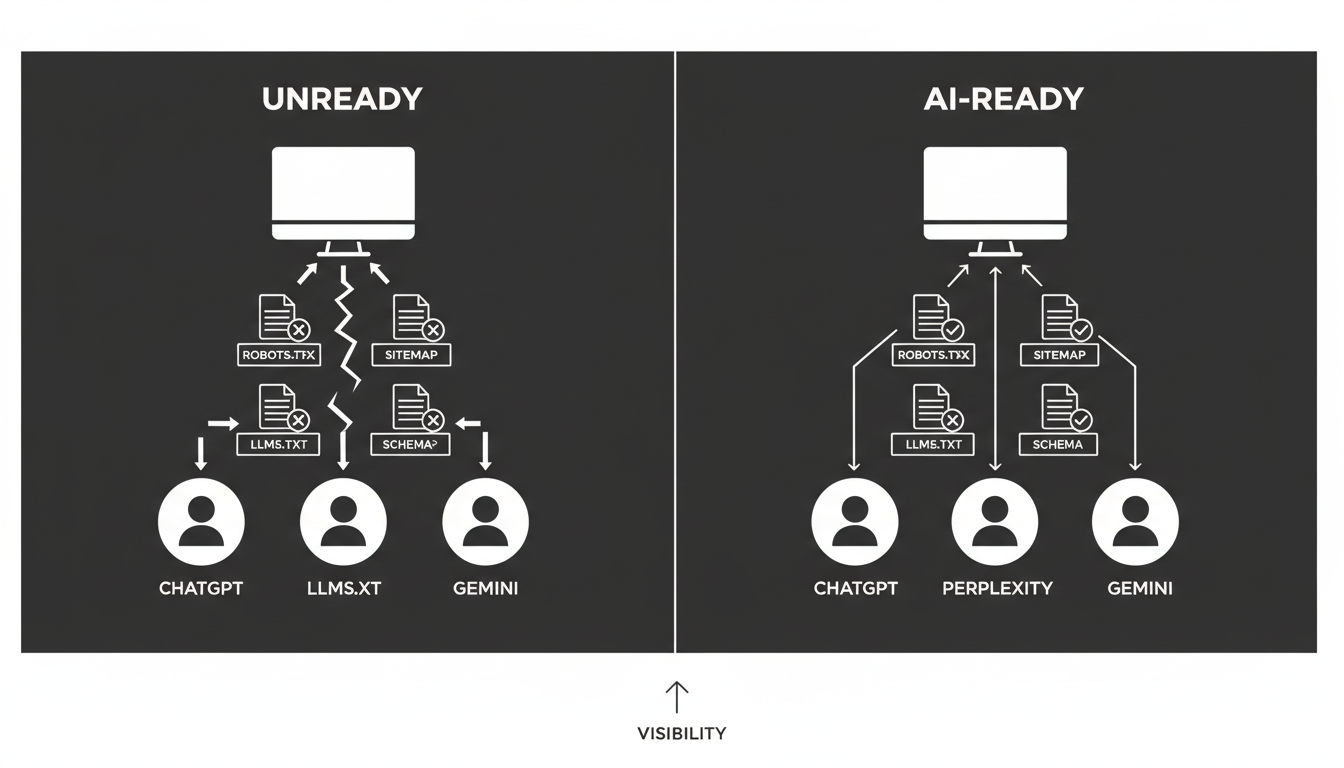

The gap between "looks good to people" and "readable by AI" is wider than most teams realize. No sitemap.xml. No structured data. No llms.txt. No clear signals telling AI models what your site is about, what's authoritative, and what deserves attention. These aren't edge cases or obscure technical requirements. They're the basics. Most sites are failing them.

Why Does This Matter Right Now?

The way people find information is shifting. Search engines still matter, but AI systems are increasingly the first layer between your content and the people looking for it. ChatGPT, Perplexity, Copilot, Gemini. These systems don't browse your site the way a person does. They crawl it, parse it, and decide whether your content is trustworthy and structured enough to reference.

If your site doesn't give them the right signals, you get skipped. Not penalized. Just invisible.

That's actually worse. A penalty you can diagnose and fix. Invisibility just looks like stagnation, and most teams never trace it back to the real cause.

What Actually Makes a Site "AI-Ready"?

This term gets thrown around loosely, so let me be specific.

AI readiness means your site has the technical foundation for AI systems to crawl, interpret, and trust your content. That breaks down into three layers.

Technical structure. Does your site have a robots.txt file? A properly formatted sitemap.xml? An llms.txt file that gives AI models a short, curated map of your most important content? These are table stakes. A surprising number of established sites are missing one or more of them.

Structured data. Schema.org markup tells machines what your content actually is. A product page. A FAQ. An article. A company profile. Without it, AI systems have to guess. They don't guess in your favor.

Content quality and clarity. Even strong content becomes invisible when it's poorly structured. Headers that don't follow a logical hierarchy. Pages that bury the key information. Content that's thorough but formatted in ways machines struggle to parse.

You can have genuinely good content and still be invisible to AI. That's the part most teams miss, and it's the part worth fixing first.
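The technical structure layer is easy to spot-check yourself. Here's a minimal Python sketch that asks a site for each of the three files and reports which ones respond. This is not how the Website Optimizer works internally. The function names and the example.com URL are placeholders made up for illustration, and the sketch assumes the files live at the site root. A sitemap can legitimately live elsewhere and be declared in robots.txt, so treat a miss as a prompt to look closer, not a verdict.

```python
from urllib.error import HTTPError
from urllib.parse import urljoin
from urllib.request import Request, urlopen

# The three files discussed above, assumed to sit at the site root.
FOUNDATION_FILES = ("robots.txt", "sitemap.xml", "llms.txt")


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code for a URL, or 0 if the request fails outright."""
    request = Request(url, method="HEAD", headers={"User-Agent": "readiness-check-sketch"})
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        # 404, 403 and similar arrive as exceptions; the code is what we want.
        return error.code
    except OSError:
        # DNS failures, refused connections, timeouts.
        return 0


def check_foundation_files(base_url: str, fetch_status=http_status) -> dict[str, bool]:
    """Map each file name to True if the site answers 200 for it."""
    base = base_url.rstrip("/") + "/"
    return {name: fetch_status(urljoin(base, name)) == 200 for name in FOUNDATION_FILES}


if __name__ == "__main__":
    # Hypothetical site; swap in your own domain.
    for name, found in check_foundation_files("https://example.com").items():
        print(f"{name}: {'found' if found else 'missing'}")
```

Some servers reject HEAD requests, so a "missing" result is worth confirming in a browser before you act on it.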

What Does Akii's Website Optimizer Actually Do?

There's no shortage of tools that will grade your site, hand you a score, and leave you with no clear next step. The Website Optimizer works differently. It scans your site and gives you four things.

An overall readiness score. Your baseline. It tells you where you stand across technical health, content quality, and AI readiness in one view.

Specific gap identification. Not vague recommendations. The tool flags exactly what's missing. No sitemap? It tells you. No schema markup? Flagged. No llms.txt? You'll see it clearly.

Generated files you can actually use. This is the part that saves real time. If your sitemap is missing, the Optimizer generates a properly formatted XML file listing your key pages. Same for llms.txt and other critical files. Download them. Upload them. Done.

A phased action plan with priorities. Not a 47-item checklist with no hierarchy. A roadmap broken into quick wins, medium-term fixes, and expected impact estimates so your team knows what to do first.

The goal isn't a report to file away. It's something your team can act on this week.

What Kinds of Issues Does It Find?

The same problems repeat across almost every site we scan. Here's what shows up most often.

Missing robots.txt

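This file tells crawlers what they can and can't access. Without it, well-behaved crawlers treat the whole site as open, and you give up any say over which AI systems fetch which sections. If the request for the file fails with a server error instead, some crawlers will back off entirely. Neither outcome is what you want.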
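To see how a crawler would read a given set of rules, you can test them with Python's standard-library robots.txt parser before you publish. A minimal sketch: GPTBot is the user agent OpenAI documents for its crawler, but the paths, the example.com domain, and the "SomeOtherBot" name are invented for illustration.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules only; the paths are hypothetical.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /drafts/

User-agent: *
Disallow: /admin/

Sitemap: https://example.com/sitemap.xml
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without making any network request."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# GPTBot has its own group, so only the /drafts/ rule applies to it.
print(parser.can_fetch("GPTBot", "https://example.com/blog/post"))    # True
print(parser.can_fetch("GPTBot", "https://example.com/drafts/idea"))  # False

# Any crawler without its own group falls back to the "*" rules.
print(parser.can_fetch("SomeOtherBot", "https://example.com/admin/"))  # False
```

Keep in mind that robots.txt is a request, not an enforcement mechanism. It only shapes the behavior of crawlers that choose to honor it.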

No sitemap.xml

Your sitemap hands crawlers a list of the pages you want discovered. Without one, they can only follow links from page to page and hope they reach everything important. They won't. Pages get missed. Orphaned content stays hidden. The Optimizer generates a ready-to-use XML sitemap based on your actual site structure, which alone can save hours of manual work.

No llms.txt file

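Most sites don't have one yet, and that's a real gap. llms.txt is a proposed convention, not yet a formal standard. It's a Markdown file at your site root that gives AI language models a short summary of the site and links to the pages that matter most. Think of it as a companion to robots.txt, built specifically for the AI era. robots.txt says what crawlers may access. llms.txt says what's worth reading. If you want AI systems to reference your content accurately, this file matters.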
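Here's a rough Python sketch of what producing such a file can look like. It follows the layout in the public llms.txt proposal as I understand it: a title, a one-line summary in a blockquote, then sections of annotated links. The function name, company name, URLs, and notes are all invented for illustration, and the proposal itself may change.

```python
def build_llms_txt(
    site_name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render llms.txt-style Markdown from a name, summary, and linked sections.

    Each section maps a heading to (title, url, note) tuples; note may be empty.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            suffix = f": {note}" if note else ""
            lines.append(f"- [{title}]({url}){suffix}")
        lines.append("")
    # End with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    # Hypothetical content; describe your own site here.
    print(build_llms_txt(
        "Example Co",
        "Example Co sells scheduling software for small clinics.",
        {
            "Product": [
                ("Pricing", "https://example.com/pricing", "Plans and limits"),
                ("Features", "https://example.com/features", ""),
            ],
        },
    ))
```

The value is in the curation. A short file that points to your ten most authoritative pages is more useful than a dump of every URL you have.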

Missing schema.org structured data

Without structured data, AI systems can't reliably identify what your pages contain. Is this a product? A service? A blog post? A company profile? Schema markup answers those questions clearly. The Optimizer identifies where it's missing and tells you exactly what to add.
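In practice, schema markup is usually added as a JSON-LD block in the page's HTML. Here's a minimal Python sketch that builds one for a company profile using the schema.org `Organization` type. The helper names and the company details are placeholders, and a real page would typically carry more properties than this.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as=()) -> dict:
    """Build a schema.org Organization description as a plain dict."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
    }
    if same_as:
        # sameAs lists other profiles that refer to the same organization.
        data["sameAs"] = list(same_as)
    return data


def as_script_tag(data: dict) -> str:
    """Wrap JSON-LD in the script tag that goes into the page's HTML."""
    # Escape "</" so a stray "</script>" inside a value cannot end the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Hypothetical company; replace with your own details.
    profile = organization_jsonld(
        "Example Co",
        "https://example.com",
        "Scheduling software for small clinics.",
        same_as=["https://www.linkedin.com/company/example-co"],
    )
    print(as_script_tag(profile))
```

The same pattern works for products, articles, and FAQs. Only the `@type` and the properties change.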

Content structure problems

Good content in bad structure is a common pattern. Headers out of logical order. Key information buried deep in a page. Content that's thorough but not scannable by machines. The tool highlights these gaps so you can fix them without rewriting everything from scratch.
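Header order is the easiest of these to check mechanically. Here's a minimal Python sketch that scans a page's HTML and flags skipped heading levels. It's a simplified stand-in, not the tool's actual logic. The class and function names are made up, and the "exactly one h1" rule is a common convention I'm assuming here, not a hard requirement.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record the level (1-6) of every heading tag, in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # Matches h1 through h6 only; tags like "hr" or "header" are ignored.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable problems with the page's heading hierarchy."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    issues = []
    if levels.count(1) != 1:
        issues.append(f"expected exactly one h1, found {levels.count(1)}")
    for previous, current in zip(levels, levels[1:]):
        # Going deeper by more than one level (h2 straight to h4) skips a step.
        if current > previous + 1:
            issues.append(f"h{previous} is followed by h{current}, skipping a level")
    return issues


if __name__ == "__main__":
    # Hypothetical page fragment.
    sample = "<h1>Pricing</h1><h3>Starter plan</h3><h2>FAQ</h2>"
    for issue in heading_issues(sample):
        print(issue)
```

Moving back up the hierarchy, say from an h3 to an h2, is fine and is not flagged. Only jumps downward that skip a level are.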

How Long Does This Take?

The scan runs in minutes. That's the diagnostic part.

The action plan breaks implementation into realistic phases.

Quick wins, zero to two weeks. Generate and upload your XML sitemap. Fix robots.txt issues. Low effort, real impact, and your team can ship these fast.

Medium-term work, two to eight weeks. Add an llms.txt file. Build schema.org markup across key pages. Improve content structure where the tool flags gaps.

Based on what we're seeing across sites that go through this process, the expected impact includes a 20 to 30% improvement in load speed from technical fixes, a 15 to 25% increase in AI visibility scores, and a 10 to 20% improvement in search rankings.

Each task comes with time estimates, priority levels, and clear implementation steps. Your technical team doesn't have to interpret anything. They just execute.

Is This Just an SEO Tool With a New Label?

No. That distinction matters.

Traditional SEO tools focus on search engine ranking factors. Keywords, backlinks, page speed, meta tags. Those things still matter. But they don't address whether AI systems can read, interpret, and reference your content. AI readiness is a different layer entirely. It's about structured data, machine-readable files, and content clarity for systems that don't browse your site the way Google's crawler does.

These systems process your content differently. They need different signals. The Website Optimizer covers both layers, but the AI readiness piece is what most existing tools miss completely. That's the gap we built it to close.

What Happens After You Fix Things?

This is where teams often drop the ball. They run a scan, fix the issues, and move on.

AI visibility standards are still evolving. What's sufficient today might not be next quarter. New file formats emerge. Schema standards update. AI systems change how they weight different signals. After you set up the initial fixes, re-run the scan. Compare your updated scores to your baseline. Track the improvement.

That does two things: it proves ROI to whoever approved the work, and it keeps your site current as standards shift.
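The comparison itself can be as simple as subtracting one set of scores from another. A small Python sketch, assuming you've recorded scores for the three areas the scan reports on. The function name is mine and the numbers are invented placeholders, not real results.

```python
def score_changes(baseline: dict[str, float], latest: dict[str, float]) -> dict[str, float]:
    """Return the change per area for every area present in both scans."""
    return {area: latest[area] - baseline[area] for area in baseline if area in latest}


if __name__ == "__main__":
    # Invented example scores, for illustration only.
    baseline = {"technical_health": 52, "content_quality": 70, "ai_readiness": 31}
    rescan = {"technical_health": 68, "content_quality": 73, "ai_readiness": 55}
    for area, change in score_changes(baseline, rescan).items():
        print(f"{area}: {change:+}")
```

Keeping the baseline alongside each re-scan gives you a running record to point to when someone asks what the work delivered.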

I've watched too many companies treat technical optimization as a one-time project. It's not. It's maintenance, the same way you keep servers patched or SSL certificates current. Build the habit early.

Who Should Be Running This?

If you're responsible for how your brand shows up in AI-generated answers, this is for you.

Marketing leaders who need to understand why organic visibility is flat despite good content. Technical teams who need a clear list of what to fix and in what order. Founders who want to make sure their site isn't invisible to the systems increasingly driving discovery.

You don't need a deep technical background to use it. The scan results are clear. The generated files are ready to roll out. The action plan is specific enough for a developer to pick up and run with immediately.

The Real Point

Your website is your most important digital asset. But its value depends entirely on who can find it and understand it. Right now, the systems doing that work are changing fast.

Most sites aren't ready. Not because the content is bad, but because the technical foundation wasn't built for how AI systems process information. Missing files, absent structured data, and poor machine readability create a gap between what your site contains and what AI systems can actually use.

That gap is fixable. Faster than most people think.

The Website Optimizer gives you the scan, the files, the priorities, and the roadmap. Your team handles the implementation. The result is a site that works for humans and for the AI systems that increasingly decide whether humans ever see it.

Run a free AI website readiness scan and see where your site actually stands. Not where you assume it does. Where it actually does.

That distinction is the whole point.