The Old Scoreboard Doesn't Work Anymore

For twenty years, the question was simple: are we on page one? That made sense when discovery meant ten blue links. It doesn't make sense anymore.

People are getting answers from ChatGPT, Gemini, Claude, Perplexity, and Google AI Overviews. They're not scrolling through results. They read a single synthesized response and move on. The new question isn't "what rank do we hold?" It's "are we even mentioned?"

I've watched discovery shift before. Directories to search engines. Desktop to mobile. Organic to paid. Each time, the companies that measured the new thing early won the next cycle. The ones that kept improving the old thing fell behind slowly, then all at once.

This is one of those shifts.

What Is the AI Visibility Index, and Why Should You Care?

The AI Visibility Index is our attempt to put a real number on something most companies are still tracking with screenshots and gut feel. In this Q4 2025 edition, we analyzed over 10,000 prompts across 12 industries to measure how often brands appear in AI-generated answers, how they're framed, what tone gets applied, and whether the answers include usable citations.

The result is a standardized AI Visibility Score. Comparable across engines. Comparable across sectors. Repeatable quarter over quarter.

Here's what most people miss: this isn't about vanity. If your brand doesn't show up when someone asks an AI engine "best tools for X" or "how does Y work," you're not in the consideration set. You're invisible at the exact moment a buyer is forming an opinion.

That's not a branding problem. That's a pipeline problem.

How Did We Actually Measure This?

Any benchmark is only as good as the method behind it. AI search introduces real challenges. Models update constantly, answers vary between runs, and some engines barely cite their sources. So let me walk through how we built this.

The prompt basket

We designed more than 10,000 prompts to simulate how real people ask questions, split across four intent categories:

- Definitional: "What is predictive analytics?" or "How does Invisalign work?"

- Evaluative: "Best SaaS tools for small businesses" or "Top activewear brands 2025"

- Comparative: "Salesforce vs HubSpot" or "YNAB vs Mint vs ClearBudget"

- Transactional: "Notion pricing" or "Is there a free trial for FlowBoard?"

Every prompt was tagged by industry vertical and funnel stage. That let us see not just whether brands appeared, but how visibility shifted depending on what the user was actually trying to do.

The engines

Five engines that currently shape discovery:

- ChatGPT (browsing enabled)

- Google Gemini (Pro)

- Claude (Opus tier)

- Perplexity (Pro)

- Google AI Overviews

All were tested in English, from a consistent geography, with multiple runs per prompt to account for variability.

The scoring

Each answer got scored on four axes:

- Inclusion: 0 (not mentioned) to 3 (strong recommendation with reasoning)

- Positioning: leader, challenger, alternative, niche, or cautionary

- Sentiment: positive, neutral, or negative

- Citation quality: first-party, authoritative third-party, or miscellaneous

Scores were normalized to a 0 to 100 scale per engine, then averaged into a cross-engine AI Visibility Score. That lets us compare sectors and brands without one engine's style biasing the results.
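
As a rough sketch of that normalization: assume each answer's axis scores are first combined into a single raw score r_{p,e} for prompt p on engine e, with r_max as the highest possible raw score. How the four axes are weighted into that raw score is not spelled out here, so treat r_{p,e} as a placeholder. With P as the prompt set and E as the five engines, and assuming an unweighted average across engines, the two steps look like this:

```latex
S_e = \frac{100}{|P|} \sum_{p \in P} \frac{r_{p,e}}{r_{\max}},
\qquad
\mathrm{AVS} = \frac{1}{|E|} \sum_{e \in E} S_e
```

Because every S_e sits on the same 0 to 100 scale before averaging, an engine that mentions brands freely does not outweigh one that mentions them sparingly.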

The limitations

I want to be honest about what this doesn't capture. AI search is volatile. Inclusion swings can reflect model refreshes, not brand performance. Smaller verticals produce sparse data. Citation opacity in Claude and Gemini limits attribution.

But the methodology gives a reliable directional view. It shows which brands are included, why they're included, and what levers actually move the number. That's what matters.

What Did Each Engine Actually Do in Q4?

Each AI engine has its own personality. Its own biases. Its own blind spots. Here's what we found.

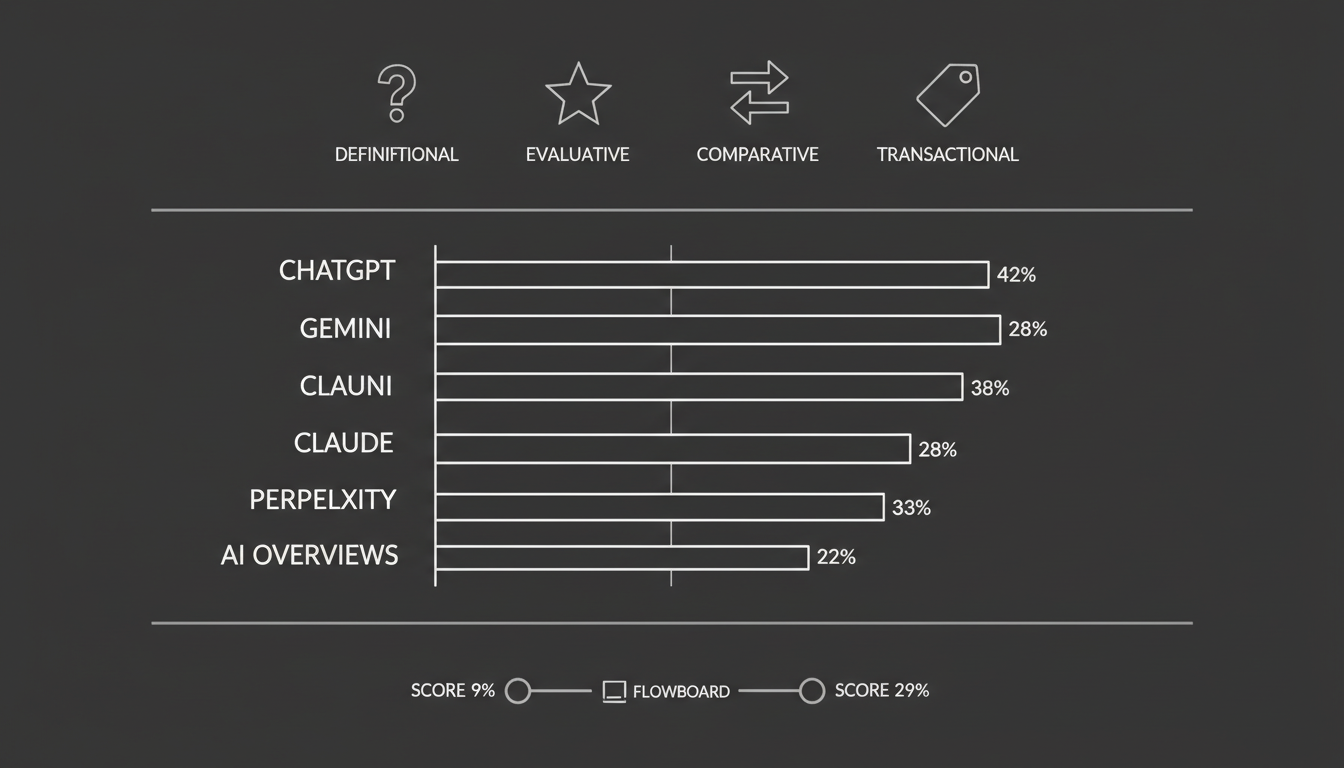

ChatGPT: The broadest net, the messiest citations

ChatGPT cited brands in 42% of prompts. The highest of any engine. It was also the most democratic, occasionally surfacing mid-market players when they had strong recent coverage or active community buzz.

The problem? Citation quality was all over the place. One run links to a TechCrunch article. The next gives the same recommendation with no source at all.

For marketers, ChatGPT is both the biggest opportunity and the biggest headache. You can get visibility without being a category leader. But proving impact through traffic or click-throughs is hard. The lever here is external quotability. Your brand needs to be present in the high-authority outlets that models ingest.

Gemini: Rewards the incumbents

Gemini averaged 35% inclusion and skewed heavily toward brands with strong Knowledge Graph entries, schema markup, and Google-aligned profiles. Results were more predictable than ChatGPT's but far less diverse.

In SaaS queries, Salesforce and HubSpot appeared almost every time. Smaller competitors were consistently ignored. Gemini rarely surfaced citations, which makes ROI measurement difficult. What was clear is that structured data and entity consistency influenced whether a brand appeared at all.

Claude: Hard to crack, but sticky

Claude had the second-lowest inclusion rate at 28%, ahead of only AI Overviews. But it also had the narrowest volatility. Answers often came with disclaimers, and brand mentions were limited to those with unimpeachable authority: Wikipedia entries, academic citations, major news coverage.

Here's the interesting part. Once a brand achieved inclusion in Claude, it tended to stay there quarter over quarter. Claude is hard to penetrate but rewards patience. Brands aiming to appear here should think in terms of long-term authority signals, not quick campaigns.

Perplexity: The experimenter's dream

Perplexity inclusion averaged 33%. But 91% of its answers carried clickable citations. Far more than any other engine. That makes it invaluable for testing.

Marketers can seed content externally, add UTM tags, and actually trace referral traffic. Perplexity's blended source lists rewarded brands investing in data-driven thought leadership. If you want to prove that AI visibility drives real outcomes, Perplexity is where you start.
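
Here is a minimal sketch of the UTM tagging step, using only Python's standard library. The URL, the `add_utm` helper, and the parameter values are illustrative assumptions. Whether a given engine keeps the query string when it cites a page is something to verify in your own analytics.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def add_utm(url, source, medium="referral", campaign="ai-visibility"):
    """Return `url` with UTM parameters added, keeping any existing query."""
    parts = urlparse(url)
    query = dict(parse_qsl(parts.query, keep_blank_values=True))
    query.update(
        {"utm_source": source, "utm_medium": medium, "utm_campaign": campaign}
    )
    return urlunparse(parts._replace(query=urlencode(query)))


# Hypothetical report URL placed in externally seeded content.
tagged = add_utm("https://example.com/reports/remote-work?ref=pr", "perplexity")
print(tagged)
```

Use one `utm_source` value per engine so referral sessions can be split by surface in your analytics tool.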

AI Overviews: Schema-sensitive and unpredictable

AI Overviews had the lowest inclusion rate at 22% but were the most responsive to FAQ and HowTo schema. Brands with concise canonical answers saw measurable gains.

The challenge was volatility. Inclusions shifted dramatically week to week, reflecting Google's frequent model refreshes. AI Overviews are the most tactically sensitive surface right now. You can move the needle with structured data, but you have to track results constantly.

Which Industries Are Winning, and Why?

The engines don't treat all industries the same. Some verticals benefit from clear authority structures. Others get swallowed by platforms. Here's how four major sectors performed.

SaaS: The clear leader

SaaS brands averaged 48% inclusion across engines. The highest of any sector. This isn't surprising. SaaS companies are digitally native, with abundant schema markup, extensive review systems, and strong entity consistency.

The leaders: Salesforce (71%), HubSpot (64%), Notion (59%). Challengers like ClickUp, Monday.com, and Airtable landed in the 40 to 45% range.

Why does SaaS lead? Entity saturation across Wikidata, Crunchbase, LinkedIn, and schema-rich sites. Frequent citations in analyst reports from Gartner and Forrester. Schema adoption on help centers and feature pages that makes answers machine-readable.

What's interesting is that smaller players like ClickUp achieved outsized visibility by publishing horizontally targeted content. "Best tools for HR." "Top platforms for marketers." They ensured inclusion across multiple niches instead of competing head-on for category terms.

In SaaS, visibility is less about size than about being everywhere models look for authority.

eCommerce: Platforms eat the space

eCommerce brands averaged 36% inclusion. But the results clustered around platforms and aggregators, not individual direct-to-consumer brands.

Amazon dominated at 83%. eBay followed at 51%. Shopify came in at 44%, benefiting by association as the platform behind queries like "best stores" or "build your online shop."

DTC brands rarely surfaced unless amplified by earned media. One boutique fitness apparel startup appeared in ChatGPT and Perplexity only after being profiled in Vogue and Women's Health. Schema (Product, Offer, AggregateRating) helped engines parse details but didn't drive inclusion without press.

For eCommerce challengers, schema is necessary but not sufficient. Press coverage and review velocity are what actually get you in.

Healthcare: Trust above everything

Healthcare scored 28% average inclusion with the strongest trust bias of any vertical. Engines overwhelmingly favored nonprofit institutions, academic centers, and government resources.

The leaders: Mayo Clinic (62%), WebMD (57%), Cleveland Clinic (48%). Telehealth startups and commercial providers barely registered. When they did appear, sentiment was often qualified. ChatGPT included BetterHelp but appended language like "some users report mixed experiences."

Why? Engines prioritize reputable, non-commercial sources when health is at stake. Academic citations carried disproportionate weight, especially in Claude. Review systems like Zocdoc influenced local-level inclusions but not national brand visibility.

Healthcare visibility is won through trust signals: schema-rich FAQs, peer-reviewed citations, and impeccable reputation management. Engines are quick to exclude brands associated with negative coverage.

Travel: The most volatile sector

Travel had the lowest visibility at 19% average inclusion. Engines defaulted to platforms and directories, rarely surfacing individual brands.

The leaders: Expedia (41%), Tripadvisor (37%), Booking.com (34%). AI Overviews were the most volatile surface here. Expedia might appear one week and be replaced by Booking.com the next. Claude largely avoided brand recommendations in travel queries altogether.

Google's self-preference was visible. Gemini consistently highlighted Google Travel modules over competitors.

Travel brands have to accept volatility and focus on structured data for deals and reviews, while diversifying presence across third-party travel blogs and forums.

What the industry differences prove

AI engines don't apply a single rulebook. They weigh trust signals differently by category. Winning requires adapting your approach to the norms of your vertical, not copying what works in SaaS and hoping it transfers.

Can Challenger Brands Actually Move the Needle?

The data gives the big picture. Case studies show what works in practice. The two below cover smaller brands that, without the incumbency advantages of Salesforce or Expedia, improved their inclusion rates within a single quarter.

FlowBoard: From 9% to 29% in 90 days

FlowBoard, a mid-market SaaS project management platform, had healthy SEO rankings but negligible AI presence. An initial audit found inclusion in just 9% of prompts, with engines defaulting to Asana, Monday.com, and Jira.

What they did:

- Added FAQ schema to 20+ feature and pricing pages with concise canonical answers

- Published a data-driven industry report ("The State of Remote Project Management 2025") that earned placements in TechCrunch and Entrepreneur

- Standardized entity data across Crunchbase, Wikidata, and G2, removing inconsistent descriptions

What happened:

- Inclusion rose to 29% across engines. A 20-point gain.

- ChatGPT began citing FlowBoard in "best tools for startups" queries

- Perplexity linked directly to the remote work report, driving about 11% new visitors from referral traffic

- AI Overviews surfaced FlowBoard for the first time under "affordable project management tools"

The gains didn't come from one lever. They came from the stacked effect of schema, authoritative content, and entity hygiene. That combination made the brand quotable and machine-readable. Exactly what engines reward.

BrightSmile Dental: Local visibility from 3% to 24%

BrightSmile, a Chicago-based dental clinic, ranked well in local SEO but was almost invisible in AI answers. Only 3% of prompts mentioning "dentist Chicago" surfaced the brand. Most results were dominated by Yelp or Zocdoc.

What they did:

- Implemented LocalBusiness schema including geo-coordinates, opening hours, and sameAs links to Yelp and Healthgrades

- Launched a review acquisition campaign, securing 200+ new Google reviews in 90 days

- Built a FAQ content hub on the clinic website ("How much does Invisalign cost in Chicago?", "How long do implants last?")

What happened:

- AI Overviews inclusion rose to 24%, with several answers citing BrightSmile's FAQ hub

- Perplexity cited the clinic in 31% of queries, linking to Zocdoc reviews

- ChatGPT mentioned BrightSmile in multi-clinic lists, positioning it as a "well-reviewed option in Chicago"

- The clinic reported a 17% increase in patient bookings via organic search

For local providers, review velocity and schema clarity outweighed traditional keyword tactics. Engines treated BrightSmile as trustworthy once multiple signals aligned: structured markup, consistent entities, and recent positive sentiment.
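
For readers who have not written this kind of markup, here is a hedged sketch of LocalBusiness JSON-LD with geo-coordinates, opening hours, and sameAs links, generated from Python. Every value is a placeholder, not BrightSmile's actual data. The property names come from the schema.org vocabulary.

```python
import json

# All values are placeholders for illustration only.
local_business = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",  # schema.org also defines a more specific Dentist type
    "name": "Example Dental Clinic",
    "url": "https://www.example.com/",
    "address": {
        "@type": "PostalAddress",
        "addressLocality": "Chicago",
        "addressRegion": "IL",
        "addressCountry": "US",
    },
    # Approximate city-level coordinates; use the clinic's real location.
    "geo": {"@type": "GeoCoordinates", "latitude": 41.88, "longitude": -87.63},
    "openingHoursSpecification": [
        {
            "@type": "OpeningHoursSpecification",
            "dayOfWeek": ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"],
            "opens": "09:00",
            "closes": "17:00",
        }
    ],
    # Hypothetical profile URLs; point these at the real listings.
    "sameAs": [
        "https://www.yelp.com/biz/example-dental-clinic",
        "https://www.healthgrades.com/example-dental-clinic",
    ],
}

print(json.dumps(local_business, indent=2))
```

The printed JSON would typically be placed in a script tag of type application/ld+json on the clinic's site.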

The pattern across both cases

Neither of these brands had massive budgets or category dominance. What they had was discipline. Structured data to make answers extractable. Earned authority through media or review sites. Entity consistency across directories and profiles.

AI visibility isn't reserved for giants. But it doesn't happen by accident either.

How Do You Actually Improve Your Score?

This is where I see the most confusion. People hear "AI visibility" and think it requires some new, exotic playbook. It doesn't. It requires doing a handful of things well, consistently, and measuring the results.

Treat it like a KPI, not a curiosity

Many companies still treat AI search as a novelty. Marketing teams run ad hoc prompts, share screenshots in Slack, and move on. That mindset is already outdated.

AI answers are influencing buyer perception and shaping consideration sets right now. Make AI Visibility a core KPI. Report it monthly. Track it across engines. Put it alongside SEO, paid, and PR in executive dashboards. If you're not measuring it, you're not managing it.

At Akii, this is exactly the kind of signal we believe companies need to track systematically, not anecdotally.

Build a single source of truth

Engines penalize inconsistency. If your Crunchbase profile lists one description, your LinkedIn says another, and your schema uses outdated product names, models will hesitate to include you.

Create a master entity profile. One description. One taxonomy. One boilerplate. Replicate it across your site, schema, directories, and knowledge bases. Brands that looked "the same everywhere" were consistently more visible in our data.
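
One way to keep that profile honest is a small drift check. The sketch below compares each public description against the master copy using Python's standard `difflib`. The brand, the descriptions, the `find_drift` helper, and the 0.9 threshold are all assumptions for illustration. Collecting the live descriptions from each directory is left out.

```python
from difflib import SequenceMatcher

# Hypothetical master description and the copies found on each profile.
MASTER = "ExampleCo is a project management platform for mid-market teams."
profiles = {
    "website": "ExampleCo is a project management platform for mid-market teams.",
    "crunchbase": "  exampleco is a Project Management platform for mid-market teams. ",
    "linkedin": "Task tracking software for agencies and freelancers.",
}


def normalize(text):
    """Lowercase and collapse whitespace so cosmetic differences are ignored."""
    return " ".join(text.lower().split())


def find_drift(master, profiles, threshold=0.9):
    """Return {source: similarity} for descriptions that diverge from master."""
    drift = {}
    for source, description in profiles.items():
        ratio = SequenceMatcher(
            None, normalize(master), normalize(description)
        ).ratio()
        if ratio < threshold:
            drift[source] = round(ratio, 2)
    return drift


drifted = find_drift(MASTER, profiles)
print(drifted)  # only the LinkedIn copy is flagged
```

String similarity is a blunt tool. It catches stale boilerplate and old product names, but it will not judge whether two differently worded descriptions mean the same thing.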

Publish quotable canonicals

AI engines don't always parse sprawling content. They extract crisp, declarative statements that can stand on their own. For example:

"AI Visibility measures how often and how favorably a brand appears in AI-generated answers."

Brands that embed these canonicals in schema, FAQs, and executive summaries are far more likely to be cited. Clarity beats verbosity every time.
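
To make "embed in schema" concrete, here is a minimal sketch that wraps a canonical statement in FAQPage JSON-LD. The `faq_jsonld` helper and the question wording are assumptions. The types and properties (`FAQPage`, `Question`, `acceptedAnswer`, `Answer`) come from schema.org.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld(
    [
        (
            "What is AI Visibility?",
            "AI Visibility measures how often and how favorably a brand "
            "appears in AI-generated answers.",
        )
    ]
)

# Wrap the JSON in the script tag that pages use to carry JSON-LD.
snippet = (
    '<script type="application/ld+json">\n'
    + json.dumps(faq, indent=2)
    + "\n</script>"
)
print(snippet)
```

Keep each answer to one or two declarative sentences so it can be lifted out without its surrounding context.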

Balance AEO and GEO

Answer Engine Optimization (AEO) ensures your own content is structured and machine-readable. Generative Engine Optimization (GEO) ensures you're mentioned by others that engines trust: press, analysts, directories, reviewers.

High performers in Q4 paired the two. Without AEO, your content gets skipped. Without GEO, your brand feels unsupported. You need both.

Manage sentiment proactively

This is the one most companies overlook. Inclusion without positivity is dangerous.

Some fintech brands scored highly for visibility but were framed as controversial. Engines reflect what they ingest. Bad press and negative reviews erode not just reputation but visibility itself. Monitoring sentiment, improving review velocity, and correcting misinformation in neutral sources are now visibility strategies, not just PR strategies.

You can explore how Akii approaches tracking these signals across AI engines on our features page.

What Does 2026 Look Like?

The Q4 2025 data shows where brands stand today. But the bigger question is where this is heading. Three forces will reshape discovery by the end of 2026.

Entities will matter more than keywords

Search engines are leaning fully into entity-first indexing. Gemini and AI Overviews already reward structured, consistent entities more than keyword coverage. This will accelerate.

Engines will prioritize verified nodes in their knowledge graphs: brands, products, and organizations with clear relationships. The old playbook of targeting strings of text will fade. Success will come from strengthening entity profiles: clean schema with sameAs links, up-to-date Wikidata entries, consistent directory profiles, and harmonized messaging everywhere.

The winners won't be those who rank for "best software 2026." They'll be those whose entities are canonical and trusted.

Sentiment will become a ranking filter

Our 2025 data already showed engines hedging when citing brands with mixed reputations. In 2026, expect models to down-rank or exclude brands whose sentiment signals skew negative.

Brands with low Trustpilot scores, poor Glassdoor ratings, or viral controversies will find themselves omitted from AI-generated answers altogether. PR, customer experience, and search will converge. Reputation won't just affect how you're perceived. It will decide if you're perceived at all.

Paid amplification will enter AI answers

Monetization is inevitable. Gemini already experiments with sponsored shopping modules. Perplexity has signaled interest in brand partnerships. By the end of 2026, we'll see paid amplification inside AI answers: sponsored inclusions alongside organic mentions.

This won't replace organic AI Visibility. But it will blur lines the way SEO and SEM once did. Smart brands will treat paid amplification as a complement, not a substitute. Secure organic visibility first, then reinforce it with sponsored slots where competition is fiercest. The danger is overreliance. Brands that neglect organic entity strength will pay more and gain less.

I've seen this pattern before. Every new discovery channel starts organic, then gets monetized. The companies that build organic strength early always have the best economics when paid enters the picture.

How to Run Your Own AI Visibility Audit

You don't need 10,000 prompts or enterprise infrastructure to get started. Here's a scaled-down version any brand can run internally.

Build a prompt basket

Start with 30 to 50 prompts that mirror real buyer behavior in your category. Balance them across the four intent types:

- Definitional: "What is [your category]?"

- Evaluative: "Best [category] tools for startups"

- Comparative: "[Brand A] vs [Brand B]"

- Transactional: "[Brand] pricing" or "Does [Brand] offer a free trial?"

Tag prompts by funnel stage so you can see how visibility changes as queries become more commercial.
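
If a spreadsheet feels too loose, the basket can live in a few lines of Python. This is a sketch under assumptions: the `Prompt` class, the `build_basket` helper, the brand names, and especially the intent-to-funnel-stage mapping are mine, not part of the Index methodology.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Prompt:
    text: str
    intent: str
    funnel_stage: str


# Assumed mapping from intent type to funnel stage; adjust to your own funnel.
STAGE_BY_INTENT = {
    "definitional": "awareness",
    "evaluative": "consideration",
    "comparative": "consideration",
    "transactional": "decision",
}


def build_basket(category, brand, competitors):
    """Expand the four intent templates into tagged prompts."""
    raw = [
        ("definitional", f"What is {category}?"),
        ("evaluative", f"Best {category} tools for startups"),
        ("transactional", f"{brand} pricing"),
        ("transactional", f"Does {brand} offer a free trial?"),
    ]
    raw += [("comparative", f"{brand} vs {rival}") for rival in competitors]
    return [Prompt(text, intent, STAGE_BY_INTENT[intent]) for intent, text in raw]


basket = build_basket("project management", "YourBrand", ["Rival A", "Rival B"])
by_intent = Counter(p.intent for p in basket)
by_stage = Counter(p.funnel_stage for p in basket)
print(by_intent)
print(by_stage)
```

The two counters make imbalance obvious before you run anything. If one intent type dominates the basket, add templates for the others.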

Score the answers

For each engine, score responses on the four axes: inclusion (0 to 3), positioning (leader, challenger, alternative, niche, or cautionary), sentiment (positive, neutral, or negative), and citation quality (first-party, authoritative third-party, or miscellaneous). A simple spreadsheet handles this. Normalize to a 0 to 100 scale to calculate a cross-engine score.
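
The same arithmetic fits in a short script. This sketch uses only the inclusion axis, because it is the one axis with a stated numeric scale. How positioning, sentiment, and citation quality fold into the number is left open here, so treat this as a starting point rather than the Index formula. The rows are made-up examples.

```python
from collections import defaultdict
from statistics import mean

MAX_INCLUSION = 3  # top of the 0 to 3 inclusion scale

# (engine, prompt, inclusion score) rows, as exported from a scoring spreadsheet.
# Values are illustrative only.
rows = [
    ("chatgpt", "best crm tools", 3),
    ("chatgpt", "crm pricing", 3),
    ("perplexity", "best crm tools", 3),
    ("perplexity", "crm pricing", 0),
    ("gemini", "best crm tools", 0),
    ("gemini", "crm pricing", 0),
]


def engine_scores(rows):
    """Average inclusion per engine, rescaled to 0 to 100."""
    by_engine = defaultdict(list)
    for engine, _prompt, inclusion in rows:
        by_engine[engine].append(inclusion)
    return {
        engine: mean(values) / MAX_INCLUSION * 100
        for engine, values in by_engine.items()
    }


def cross_engine_score(scores):
    """Unweighted mean of the per-engine scores."""
    return mean(scores.values())


scores = engine_scores(rows)
overall = cross_engine_score(scores)
print(scores)
print(overall)
```

Normalizing per engine before averaging keeps an engine with more scored prompts from dominating the cross-engine number.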

Set a cadence

- Monthly: Run the basket, record scores, note inclusion swings

- Quarterly: Roll results into an executive report and compare against previous quarters

- Annually: Expand the basket and benchmark against competitors

Even with a modest setup, you'll see patterns. Which engines respond to schema updates. Where media coverage translates to mentions. How sentiment affects visibility.
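
To note inclusion swings without eyeballing two columns, a small comparison helper is enough. The `inclusion_swings` function, the 10-point threshold, and the monthly scores below are assumptions for illustration.

```python
def inclusion_swings(previous, current, threshold=10):
    """Return engines whose score moved by at least `threshold` points."""
    swings = {}
    # Only compare engines that were measured in both months.
    for engine in sorted(previous.keys() & current.keys()):
        delta = current[engine] - previous[engine]
        if abs(delta) >= threshold:
            swings[engine] = delta
    return swings


# Hypothetical per-engine scores (0 to 100) from two monthly runs.
last_month = {"chatgpt": 40, "gemini": 35, "ai_overviews": 30}
this_month = {"chatgpt": 42, "gemini": 20, "ai_overviews": 45}

swings = inclusion_swings(last_month, this_month)
print(swings)
```

A flagged swing is a prompt to investigate, not a verdict. It may reflect a model refresh rather than anything you changed.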

If you want to see how Akii can help automate and scale this kind of tracking, take a look at our pricing to find the right fit for your team.

The Bottom Line

AI visibility is measurable. It's improvable. And it's already shaping which brands buyers consider and which ones they never see.

The brands that combined Answer Engine Optimization with Generative Engine Optimization saw large gains within a single 90-day quarter. Those that ignored entity consistency, sentiment management, or structured data slipped further behind.

I've been through enough technology cycles to recognize the early innings of a real shift. This is one. The companies that treat AI Visibility as a KPI today, measured monthly and reported quarterly, will define the competitive baseline for the next decade.

The data is clear. The playbook is available. The only question is whether you start measuring now or wait until your competitors already have.