The Metric That Defined Marketing for Two Decades Is Now Broken

For twenty years, the question was simple: Are we on page one of Google?

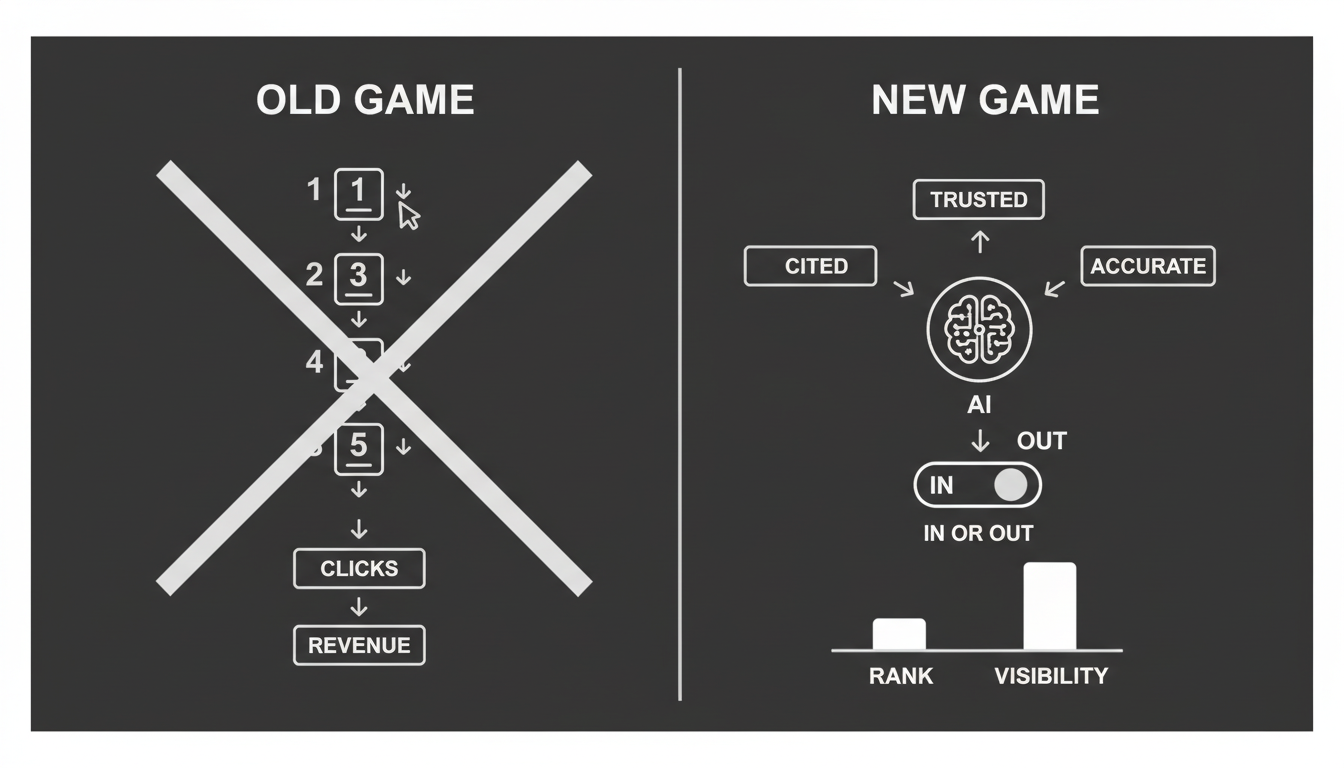

That was the game. Entire industries, careers, and software categories were built around answering it. And for a long time, it worked. Position one meant clicks. Clicks meant revenue. The logic was clean.

In 2025, that logic has collapsed.

How people discover brands has changed at a structural level. We've moved from ten blue links to synthesized answers. A growing share of users skip search results entirely and ask AI assistants like ChatGPT, Gemini, and Claude for recommendations.

If your brand isn't cited in the AI's answer, you're invisible. Even if you rank number one in traditional search.

I've watched technology cycles rewrite the rules of marketing before. This one is moving faster and cutting deeper than most people realize. The tools we've relied on for years don't measure what matters anymore. And the gap between what rank trackers show you and what's actually happening to your brand grows every week.

Why Does Traditional Rank Tracking Break Down in an AI-First World?

The core problem is simple. Rank trackers measure a list of links. Modern buyers are consuming conversations.

Rank does not equal recommendation. In traditional SEO, position one captured the click. In AI search, a model synthesizes data from multiple sources to answer a user's question. The AI acts as a gatekeeper. If it doesn't include your brand in that shortlist, your "rank" is irrelevant.

The zero-click reality is here. AI models summarize reviews, compare products, and analyze value propositions instantly. Industry analysis suggests that 68.5% of web traffic is now influenced by AI search, with AI-driven recommendations converting at 8x the rate of traditional traffic.

Think about what that means. The highest-converting traffic channel in your market may be one you can't see, can't measure, and aren't tracking.

I've talked to marketing leaders who are staring at stable keyword rankings while their pipeline quietly shrinks. They assume SEO is "working" because the dashboard says so. The dashboard is measuring the wrong thing.

How Do AI Models Actually Decide What to Recommend?

To understand why rank trackers fail here, you need to understand how AI models construct answers. They don't use a keyword index. They use something closer to a knowledge graph.

The process works roughly like this:

- Retrieval. The model scans for verified entities in its internal map of the world.

- Synthesis. It analyzes attributes, relationships, and sentiment from across the web.

- Reasoning. It infers which solution best fits the user's intent. Something like "Best CRM for small business" triggers a comparison, not a link list.

- Recommendation. It generates a synthesized answer, citing specific brands.

Because models rely on understanding rather than indexing, they don't have "pages" or "positions" in the traditional sense. They have inclusion and exclusion.

You're either in the answer or you're not. There is no position seven.

This is a binary that most marketing teams aren't built to handle. Their entire measurement infrastructure assumes a spectrum of rankings. AI visibility is closer to a light switch.
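One way to turn that light switch into a number you can track is to ask the same kinds of questions many times and count how often you're in the answer. Here's a minimal Python sketch of that idea. The brand names and sample answers are made up for illustration, and the whole-word match is a simplifying assumption, not how any particular tool scores mentions.

```python
import re


def brand_mentioned(answer: str, brand: str) -> bool:
    """True if the brand name appears as a whole word, ignoring case."""
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, answer, flags=re.IGNORECASE) is not None


def inclusion_rate(answers: list[str], brand: str) -> float:
    """Share of answers (0.0 to 1.0) that mention the brand at least once."""
    if not answers:
        return 0.0
    hits = sum(brand_mentioned(answer, brand) for answer in answers)
    return hits / len(answers)


# Hypothetical AI answers to "best CRM for small business"-style prompts.
sample_answers = [
    "For small teams, consider AcmeCRM or PipeFlow.",
    "PipeFlow and DealNest are popular choices.",
    "Many reviewers recommend DealNest for startups.",
    "AcmeCRM is a solid option if you need email sync.",
]

rate = inclusion_rate(sample_answers, "AcmeCRM")
print(f"AcmeCRM inclusion rate: {rate:.0%}")  # prints "AcmeCRM inclusion rate: 50%"
```

Each individual answer is still a yes or a no. The rate only becomes meaningful once you've collected enough answers across enough prompts.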

What Are Rank Trackers Completely Blind To?

Traditional SEO tools are built to analyze HTML and backlinks. They're completely blind to the factors that determine whether a generative AI model recommends your business.

Here's what they can't see:

Citations, not links. AI models prioritize what I'd call "quotable canonicals." Concise, extractable facts. Not backlink volume. Your link profile might be strong, but if there's nothing clean for the model to quote, you won't get cited.

Brand understanding. Rank trackers can't tell you if an AI model understands what you sell. If your entity profile is unclear, the model may hallucinate your product features or categorize you incorrectly. I've seen this happen to well-known companies. The AI confidently describes a product that doesn't exist.

Sentiment and trust. In 2025, sentiment functions as an inclusion signal for AI models. They may exclude brands associated with negative reviews or low trust signals, even if their technical SEO is perfect. Your on-page optimization means nothing if the model doesn't trust you.

Entity consistency. Models penalize inconsistency. If your business description varies between your website, Crunchbase, and LinkedIn, the model loses confidence and may simply ignore you, defaulting to a competitor whose signals are cleaner.

None of these factors show up in any traditional rank tracking tool. Not one.
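Entity consistency is the easiest of these to spot-check yourself. The sketch below compares the descriptions a brand publishes in different places and flags pairs that drift apart. It uses Python's standard `difflib` for a rough text similarity. The sample descriptions and the 0.6 threshold are assumptions for illustration, and nothing here claims to reproduce how an AI model actually weighs consistency.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similarity(a: str, b: str) -> float:
    """Rough text similarity in [0, 1] after normalizing case and whitespace."""
    norm_a = " ".join(a.lower().split())
    norm_b = " ".join(b.lower().split())
    return SequenceMatcher(None, norm_a, norm_b).ratio()


def inconsistent_pairs(descriptions: dict[str, str], threshold: float = 0.6):
    """Return (source_a, source_b, score) for pairs scoring below the threshold."""
    flagged = []
    for (src_a, text_a), (src_b, text_b) in combinations(descriptions.items(), 2):
        score = similarity(text_a, text_b)
        if score < threshold:
            flagged.append((src_a, src_b, round(score, 2)))
    return flagged


# Hypothetical descriptions of the same company on three profiles.
descriptions = {
    "website": "AcmeCRM is a CRM platform for small businesses.",
    "crunchbase": "AcmeCRM is a CRM platform for small businesses.",
    "linkedin": "We build AI-powered logistics software for enterprise fleets.",
}

flagged = inconsistent_pairs(descriptions)
for src_a, src_b, score in flagged:
    print(f"{src_a} vs {src_b}: similarity {score}")
```

In this made-up example the website and Crunchbase copy match, so only the LinkedIn description gets flagged against the other two.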

So What Should You Actually Be Measuring?

If "ranking" is the wrong metric, what's the right one?

The new standard for visibility focuses on how AI models perceive your brand across four dimensions. These are the things that actually determine whether you show up in the answers that drive decisions.

Discoverability. How frequently is your brand mentioned when users ask for recommendations in your industry? This is the most basic question, and most companies can't answer it.

Accuracy. Does the model accurately describe your product and value proposition? Or is it hallucinating features you don't have, quoting wrong pricing, or confusing you with a competitor?

Recommendation likelihood. How well does your content cover the specific high-intent queries that drive AI citations? Not just keyword coverage. Intent coverage.

Competitive presence. Are you being positioned as a leader, a challenger, or are you absent from the lists where your competitors appear? Do you even know?

These four dimensions are what Akii is built to track. Not keyword positions. Not backlink counts. The actual signals that determine whether AI models recommend your brand or skip it entirely.

What Does It Look Like When Rankings and AI Visibility Diverge?

The disconnect between SEO rank and AI visibility isn't theoretical. It's happening right now to real companies.

Consider a pattern I've seen repeatedly. A mid-market SaaS platform with healthy traditional rankings for its target keywords. Good content. Decent backlinks. The SEO dashboard looks fine.

But when you check AI-generated answers, the brand appears in only 9% of relevant responses.

Why? The keywords were strong, but the entity signals were weak. AI models defaulted to citing incumbents because the smaller brand lacked structured data and entity saturation across knowledge bases. The models simply didn't have enough confidence to recommend it.

Here's the part that matters. By investing in Answer Engine Optimization, specifically FAQ schema, and publishing authoritative external reports, companies in this situation have roughly tripled their AI inclusion rates in a single quarter. From 9% to 29%.

A traditional rank tracker would have shown "no change" during that entire period. The biggest visibility gain the company had seen in years was completely invisible to their existing tools.

That's the gap I keep coming back to. The most important shifts in your market position are happening in a channel your current stack can't see.
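For readers who haven't worked with it, "FAQ schema" here means schema.org `FAQPage` markup, usually embedded in a page as JSON-LD. The sketch below generates that markup from a list of question and answer pairs. The sample question and answer are invented, and whether any given AI model actually reads this markup is an assumption on my part, not something the code can show.

```python
import json


def faq_jsonld(qa_pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical FAQ content for illustration.
markup = faq_jsonld([
    ("Who is AcmeCRM for?", "AcmeCRM is a CRM platform for small businesses."),
])
print(markup)
```

The output goes inside a `<script type="application/ld+json">` tag on the page that shows the same questions and answers to human visitors.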

What Does the New Measurement Stack Actually Look Like?

Modern marketing teams need to upgrade their stack. You can't manage AI visibility with a tool built for 2010.

The new stack requires multi-model monitoring. You need to know if ChatGPT recommends you even if Gemini ignores you. You need to track intent-based prompts, things like "Compare Brand A vs Brand B," rather than just static keywords. And you need to do it continuously, because model behavior changes as training data updates.

This is exactly why we built Akii's AI Brand Audit. It provides automated monitoring across ChatGPT, Claude, and Perplexity, tracking the sentiment and context of every mention. Not just whether you appear, but how you appear and what the model says about you.

The difference between a rank tracker and a visibility intelligence tool is the difference between counting links and understanding perception. One tells you where you sit in a list. The other tells you whether the AI that's increasingly mediating purchase decisions actually knows who you are and trusts you enough to recommend you.

That's not a small distinction. That's the whole game.
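To make the multi-model, intent-based monitoring described in this section concrete, here's a rough Python sketch. Each "model" is just a function that takes a prompt and returns an answer. The two stub functions stand in for real assistants and return canned text. They are hypothetical, they call no real API, and this is not a description of how Akii or any other product works internally.

```python
from typing import Callable

# A "model" here is any function from prompt text to answer text.
ModelFn = Callable[[str], str]


def visibility_matrix(
    models: dict[str, ModelFn], prompts: list[str], brand: str
) -> dict[str, dict[str, bool]]:
    """For each model and prompt, record whether the answer mentions the brand."""
    brand_lower = brand.lower()
    return {
        name: {prompt: brand_lower in ask(prompt).lower() for prompt in prompts}
        for name, ask in models.items()
    }


# Hypothetical stand-ins for real assistants (canned answers only).
def stub_model_a(prompt: str) -> str:
    return "AcmeCRM and PipeFlow are both worth a look."


def stub_model_b(prompt: str) -> str:
    return "PipeFlow is the most common recommendation."


models = {"model_a": stub_model_a, "model_b": stub_model_b}
prompts = ["Compare AcmeCRM vs PipeFlow", "Best CRM for small business"]

matrix = visibility_matrix(models, prompts, "AcmeCRM")
for name, results in matrix.items():
    included = sum(results.values())
    print(f"{name}: included in {included} of {len(results)} prompts")
```

Running the same prompts on a schedule and storing each matrix is what turns this from a one-off check into continuous monitoring.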

How Do You Know If You're Already Losing AI Visibility?

If you rely solely on Google Search Console or legacy SEO tools, you might be losing market share right now without knowing it. That's the uncomfortable part of this shift. The damage is silent.

Run through this quick self-diagnosis:

The "Best of" Test. Ask ChatGPT for the "top 5 tools" in your category. Is your brand listed? If not, you're invisible to every user who asks that question.

The Hallucination Test. Ask Gemini to describe your pricing and top features. Does it get them right, or does it invent them? Inaccurate information is often worse than no information.

The Competitor Blindspot. Do you know which AI models are currently recommending your competitors as a "better alternative" to you? If you don't, you're flying blind in a channel that reportedly converts at 8x traditional rates.

The Traffic Drop. Are you seeing a decline in organic traffic despite stable rankings? This often signals that users are getting answers directly from AI instead of clicking through to your site. Your rank didn't change. The behavior around it did.

If any of these tests turned up a problem, or you couldn't answer one with confidence, your visibility KPIs are broken. You're measuring the old game while the new one is already being played.
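The Traffic Drop check is the one you can automate with data you already have. Below is a minimal Python sketch that flags a period where average position barely moved but organic clicks fell. The `PeriodStats` type, the sample numbers, and both thresholds are assumptions for illustration. Pick values that fit your own traffic patterns.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    avg_position: float  # average search position (lower is better)
    clicks: int          # organic clicks in the period


def rank_stable_but_traffic_down(
    before: PeriodStats,
    after: PeriodStats,
    max_position_shift: float = 1.0,  # assumed tolerance for a "stable" rank
    min_click_drop: float = 0.15,     # assumed minimum drop worth flagging
) -> bool:
    """True when position held roughly steady while clicks fell noticeably."""
    if before.clicks == 0:
        return False
    position_shift = abs(after.avg_position - before.avg_position)
    click_drop = (before.clicks - after.clicks) / before.clicks
    return position_shift <= max_position_shift and click_drop >= min_click_drop


# Hypothetical quarter-over-quarter numbers.
last_quarter = PeriodStats(avg_position=2.1, clicks=12000)
this_quarter = PeriodStats(avg_position=2.3, clicks=9000)

print(rank_stable_but_traffic_down(last_quarter, this_quarter))  # prints True
```

A flag here doesn't prove AI answers are the cause. Seasonality and SERP layout changes can produce the same pattern, so treat it as a prompt to run the other three tests.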

Where Does This Go From Here?

I've been through enough technology cycles to know that the tools we use shape the decisions we make. When the tools measure the wrong things, we make the wrong calls. We chase metrics that no longer drive outcomes. We feel confident while we're actually falling behind.

That's where most marketing teams are right now. Their dashboards are green. Their actual visibility is declining.

The shift from rank tracking to visibility intelligence isn't a nice-to-have upgrade. It's a correction. The measurement layer catching up to how buyers actually find and evaluate brands in 2025.

The companies that figure this out first will have a compounding advantage. Not because they have better content or bigger budgets, but because they can see what's actually happening and respond to it.

Stop guessing how AI sees your brand. Check your AI visibility baseline and find out what the models are actually saying when your customers ask.