The Hidden Risk of AI Representation

Right now, AI systems are describing your brand to potential customers. There's a real chance they're getting it wrong.

Not maliciously. Not because of some conspiracy. They're working with whatever information they've absorbed, and that information might be outdated, incomplete, or shaped more by your competitors than by you.

Most companies don't know this risk exists. They're watching search rankings, monitoring social mentions, tracking ad performance. Almost nobody is checking what ChatGPT, Gemini, or Perplexity actually says when someone asks about their company.

I've spent 25 years building and advising technology companies. I've watched brands get blindsided by platform shifts before. This one is different because it's quieter. There's no dramatic traffic drop. No public crisis. Just a slow, invisible erosion of how your brand is understood by the systems that are increasingly shaping purchase decisions.

What does "wrong" actually look like?

It's not always dramatic. Sometimes an AI describes your product using language from three years ago, before your last major repositioning. Sometimes it places you in a market you've deliberately moved away from. Sometimes it surfaces a competitor's framing of what you do instead of your own.

Here's a simple test. Ask ChatGPT what your company does. Then ask Gemini. Then Perplexity. Compare those answers to how you actually describe your business today.

If you're like most companies I've talked to, at least one of those answers will surprise you. Not in a good way.

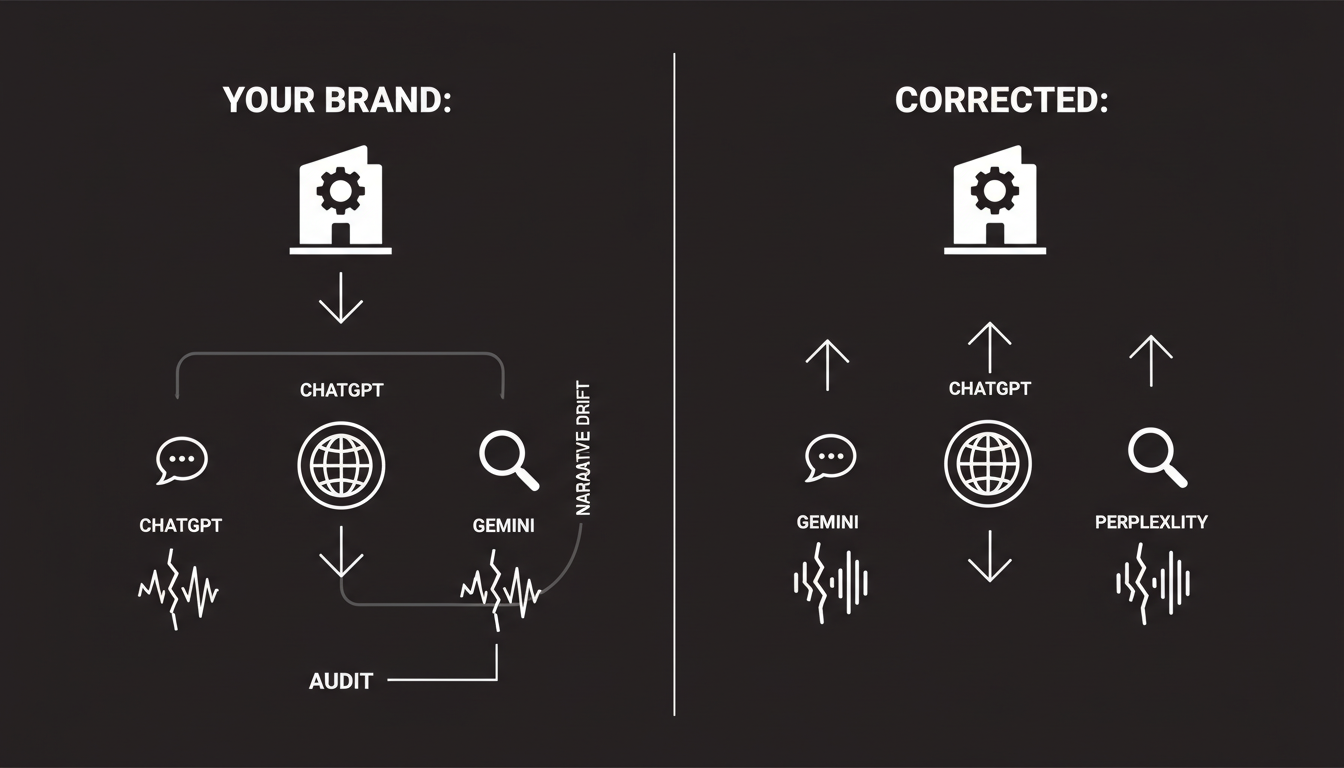

How Narrative Drift Happens

Your brand narrative doesn't stay fixed inside AI systems. It drifts. Slowly, but consistently.

Two main forces drive this.

Stale sources

AI models are trained on massive datasets that include old web pages, archived articles, outdated press coverage, and historical content that may no longer reflect who you are. How recently a model was trained matters. But even models with real-time web access tend to weight certain sources more heavily than others, and those sources might not be the ones you'd choose.

If you rebranded 18 months ago, the AI might still describe the old version of your company. If you shifted your positioning from "enterprise software" to "mid-market platform," the AI might not have caught up. It pulls from a mix of sources, and the freshest, most accurate ones don't always win.

Competitor reinforcement

This one is more subtle. Frankly, it's more dangerous.

When your competitors produce content that positions you in a certain way, AI systems absorb that framing too. If a competitor consistently describes your category in terms that favor their approach, the AI learns that framing. Your brand gets described through their lens.

It's not intentional manipulation in most cases. It's the natural result of who's producing more content, more consistently, with clearer positioning. The AI doesn't know whose version of reality to trust. It synthesizes what it finds.

So the real question is: who is doing more to shape the narrative that AI systems absorb? You, or everyone else?

Why Companies Rarely Notice

This is the part that concerns me most. Not that the problem exists, but that almost nobody is watching for it.

Analytics blindness

Traditional analytics tools weren't built for this. Google Analytics tells you about website traffic. Your SEO platform tells you about search rankings. Your social listening tool tells you about mentions on Twitter and Reddit.

None of them tell you what an AI said about your brand when a potential customer asked "What's the best solution for X?" and your company was either misrepresented or left out entirely.

These AI conversations happen in closed environments. There's no referral link. No click to track. The customer forms an opinion about your brand inside an AI interface and you never see it happen.

Lack of monitoring

Most companies have no process for checking AI outputs about their brand. It's not that they've decided it doesn't matter. It just hasn't occurred to them yet.

Think about how much effort goes into monitoring brand mentions on social media. Companies have entire teams and tool stacks dedicated to it. Now think about how much effort goes into monitoring what AI systems say about their brand.

For most companies, the answer is zero.

That gap between the importance of the channel and the attention being paid to it is where the real risk lives. By the time you notice the effects, the narrative has already drifted significantly. Correcting it takes longer than you'd expect.

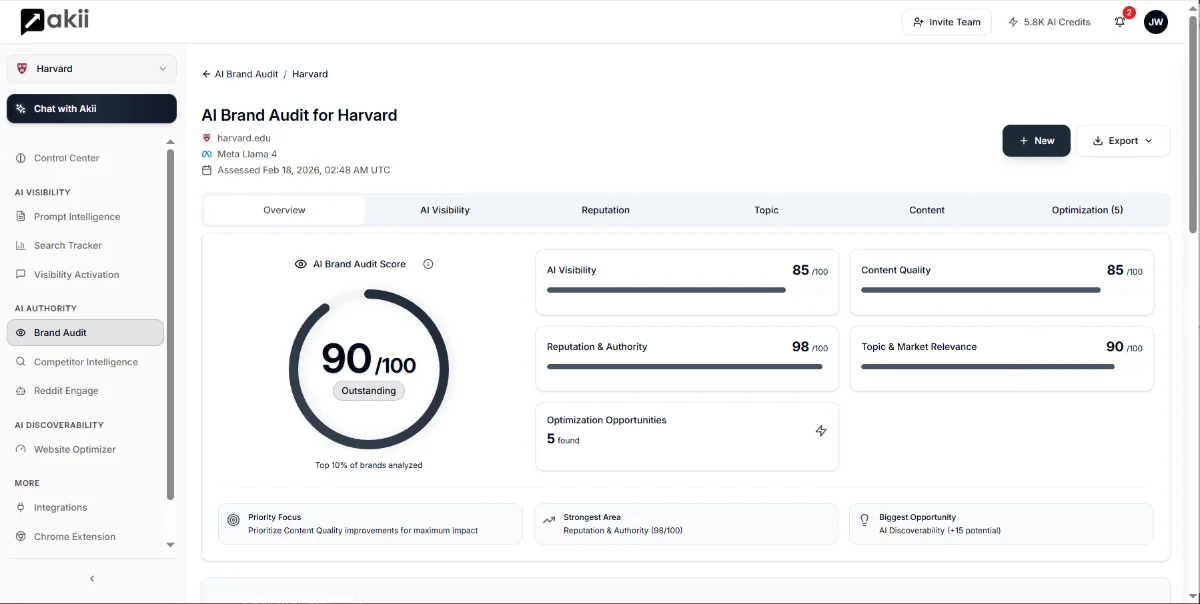

This is exactly the kind of blind spot we built Akii's AI Brand Audit to address. Not as a nice-to-have reporting layer, but as a way to see what's actually being said about your brand in the places where it increasingly matters.

The Consequences of AI Misrepresentation

What actually happens when AI gets your brand wrong? It's tempting to treat it as a minor issue. A curiosity. Something to fix eventually.

It's not.

Wrong category placement

When an AI places your brand in the wrong category, you lose before the conversation even starts.

Imagine a buyer asks an AI assistant: "What are the best project management tools for creative agencies?" If the AI categorizes your product as "enterprise project management" instead of recognizing your creative-agency focus, you won't appear in that answer. Not because you're not relevant, but because the AI's model of who you are doesn't match the question being asked.

This is different from SEO. With search, you can see the keywords you're ranking for and adjust. With AI answers, the categorization happens inside a black box. You don't get to see the logic. You just get excluded.

Lost recommendations

AI systems don't just describe brands. They recommend them. Those recommendations are becoming a meaningful part of how people discover and evaluate products.

When someone asks "What should I use for X?" the AI produces a short list. If your brand narrative has drifted, if the AI's understanding of what you do is outdated or inaccurate, you won't make that list. Your competitor, whose positioning is clearer and more current in the AI's training data, will.

Every time that happens, you've lost a potential customer in a way that's completely invisible to your existing analytics. No bounce rate to analyze. No lost click to measure. Just an opportunity that never existed because the AI didn't think of you correctly.

I've seen this pattern before with earlier platform shifts. The companies that noticed early and adapted had a real advantage. The ones that waited until the impact was obvious spent years catching up. Understanding the AI perception gap is the first step toward not being in that second group.

Detecting AI Narrative Risk

The good news is that this isn't an unsolvable problem. It just requires a different kind of attention than most marketing teams are used to.

Prompt testing

The simplest starting point is manual. Pick 10 to 15 prompts that represent how your potential customers might ask about your category, your competitors, or your specific brand. Run them across multiple AI systems. Document the answers.

Some prompts worth trying:

- "What does [your company] do?"

- "What are the best [your category] tools?"

- "How does [your company] compare to [competitor]?"

- "What's the difference between [your company] and [competitor]?"

- "Who should I use for [specific use case]?"

Do this monthly at minimum. The answers change as models get updated, and what was accurate last month might not be accurate today.
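If you'd rather not retype those prompts every month, the routine can be scripted. Here's a minimal Python sketch of one way to do it. Everything brand-specific in it is a placeholder (`ExampleCo`, `RivalCo`, the category and use-case strings), and `ask` is a hypothetical function you would supply yourself, whether it wraps a vendor's API or just returns answers you pasted in by hand. It is not part of any real library.

```python
from datetime import date
from typing import Callable, Optional

# Templates mirror the prompt list above; placeholders are filled per brand.
PROMPT_TEMPLATES = [
    "What does {company} do?",
    "What are the best {category} tools?",
    "How does {company} compare to {competitor}?",
    "What's the difference between {company} and {competitor}?",
    "Who should I use for {use_case}?",
]


def build_prompts(company: str, category: str, competitor: str, use_case: str) -> list[str]:
    """Fill every template with the brand-specific values."""
    values = {
        "company": company,
        "category": category,
        "competitor": competitor,
        "use_case": use_case,
    }
    return [template.format(**values) for template in PROMPT_TEMPLATES]


def run_audit(
    engines: list[str],
    prompts: list[str],
    ask: Callable[[str, str], str],
    run_date: Optional[date] = None,
) -> list[dict]:
    """Ask every prompt on every engine and return one dated record per answer."""
    stamp = (run_date or date.today()).isoformat()
    return [
        {"date": stamp, "engine": engine, "prompt": prompt, "answer": ask(engine, prompt)}
        for engine in engines
        for prompt in prompts
    ]


# Hypothetical stand-in for a real lookup: replace with your own function.
def placeholder_ask(engine: str, prompt: str) -> str:
    return f"(paste the answer from {engine} here)"


prompts = build_prompts("ExampleCo", "project management", "RivalCo", "creative agency scheduling")
records = run_audit(["ChatGPT", "Gemini", "Perplexity"], prompts, placeholder_ask)
```

Each record carries a date, so a month of results can sit next to the previous month's in a spreadsheet or a JSON file. The point of the structure is simply that the same prompts get asked the same way every time.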

Cross-engine comparison

Different AI systems produce different answers about your brand. This matters because your customers aren't all using the same one.

ChatGPT might describe you accurately while Gemini gets your positioning wrong. Perplexity might cite outdated sources while Claude gives a more current picture. Each system has different training data, different update cycles, and different ways of pulling information together.

Checking just one system gives you a false sense of security. You need to compare across engines to understand the full picture of how AI represents your brand.
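One rough way to make that comparison less subjective is to check each engine's answer against the terms you expect to see and the terms you've deliberately retired. The sketch below assumes two lists you would write yourself, `expected_terms` and `outdated_terms`, and uses invented sample answers labeled `engine_a` and `engine_b`. They are illustrations, not real output from any AI system.

```python
def check_answer(answer: str, expected_terms: list[str], outdated_terms: list[str]) -> dict:
    """Report which current terms are absent and which retired terms appear."""
    text = answer.lower()
    missing = [term for term in expected_terms if term.lower() not in text]
    stale = [term for term in outdated_terms if term.lower() in text]
    return {"missing": missing, "stale": stale, "ok": not missing and not stale}


def compare_engines(
    answers: dict[str, str],
    expected_terms: list[str],
    outdated_terms: list[str],
) -> dict[str, dict]:
    """Run the same check on every engine's answer to one prompt."""
    return {
        engine: check_answer(answer, expected_terms, outdated_terms)
        for engine, answer in answers.items()
    }


# Invented sample answers for a hypothetical brand that repositioned
# from "enterprise software" to a "mid-market" platform.
sample_answers = {
    "engine_a": "ExampleCo is a mid-market platform for creative agencies.",
    "engine_b": "ExampleCo sells enterprise software for large organizations.",
}

report = compare_engines(
    sample_answers,
    expected_terms=["mid-market"],
    outdated_terms=["enterprise software"],
)
# report["engine_a"]["ok"] is True; report["engine_b"]["ok"] is False
```

Plain substring matching is crude. An answer that says a company is "no longer enterprise software" would still be flagged as stale, so treat the report as a pointer to answers worth reading, not a verdict.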

This is where Brand State Snapshots become practical. Instead of running manual checks across every AI system every month, you can track how your brand representation changes over time and across platforms. It turns an ad-hoc exercise into an actual process.
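To make the idea of tracking change over time concrete, here is a generic Python sketch that compares two dated sets of answers and flags the ones that changed noticeably. This is not how Brand State Snapshots is implemented. The data layout, the `changed_answers` name, and the 0.8 threshold are all assumptions chosen for illustration, and the sample answers are invented.

```python
from difflib import SequenceMatcher

# Each snapshot maps (engine, prompt) to the answer text recorded on that run.
Snapshot = dict[tuple[str, str], str]


def changed_answers(previous: Snapshot, current: Snapshot, threshold: float = 0.8) -> list[tuple]:
    """Return (key, similarity) for answers whose text similarity fell below threshold."""
    changes = []
    for key, new_answer in current.items():
        old_answer = previous.get(key)
        if old_answer is None:
            continue  # no earlier answer to compare against
        similarity = SequenceMatcher(None, old_answer, new_answer).ratio()
        if similarity < threshold:
            changes.append((key, round(similarity, 2)))
    return changes


# Invented example data for two monthly runs.
last_month = {
    ("engine_a", "What does ExampleCo do?"): "ExampleCo is a mid-market platform.",
    ("engine_b", "What does ExampleCo do?"): "ExampleCo is a mid-market platform.",
}
this_month = {
    ("engine_a", "What does ExampleCo do?"): "ExampleCo is a mid-market platform.",
    ("engine_b", "What does ExampleCo do?"): "ExampleCo sells enterprise software for large organizations.",
}

flagged = changed_answers(last_month, this_month)
flagged_keys = [key for key, _ in flagged]
# Only the engine_b answer is flagged; the engine_a answer is unchanged.
```

Character-level similarity only tells you that the wording moved, not whether the meaning did. AI answers are often reworded from one run to the next, so expect some false alarms and read the flagged answers before drawing conclusions.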

What This Means Right Now

Here's what I think most people are getting wrong about this: they're treating AI brand representation as a future problem. Something to think about next quarter. Something to add to the roadmap.

Wrong. AI systems are answering questions about your brand today. Those answers are shaping perceptions today. The narrative drift is happening today.

The companies that come out ahead are the ones that start paying attention now. Not with panic, but with the same rigor they apply to any other channel where their brand shows up.

You wouldn't ignore what Google says about you on page one. Don't ignore what AI says about you in its first answer.

Start with the basics. Test the prompts. Compare the engines. Document what you find. Then decide what to do about it.

The risk isn't that AI will destroy your brand overnight. The risk is that it will quietly reshape how people understand you, and by the time you notice, the gap between your actual positioning and the AI's version of it will be wide enough to cost you real revenue.

That's worth taking seriously. Start today.