The Machine Already Has an Opinion About You

AI is already telling people what your brand is. Not what you say it is. What the machine thinks it is.

Those two things are often very different.

I've watched this pattern repeat across every major technology shift for 25 years. A new distribution channel emerges. Companies assume their existing positioning will carry over. It doesn't. The ones that adapt early win. The rest spend years wondering why their pipeline dried up.

This time, the channel is AI itself. And the gap between how you define your brand and how AI models describe it to potential buyers is one of the most underestimated risks in business right now.

What Exactly Is the AI Perception Gap?

Think about the last time you Googled something. You clicked a link. You read a page that someone at that company wrote and approved. Their words, not a machine's interpretation of them.

Now think about what happens when someone asks ChatGPT, Claude, or Gemini for a product recommendation. The AI doesn't send the user to your homepage. It builds a narrative on the spot, pulling from dozens of sources and compressing everything into a short answer. Your carefully written positioning gets run through a blender.

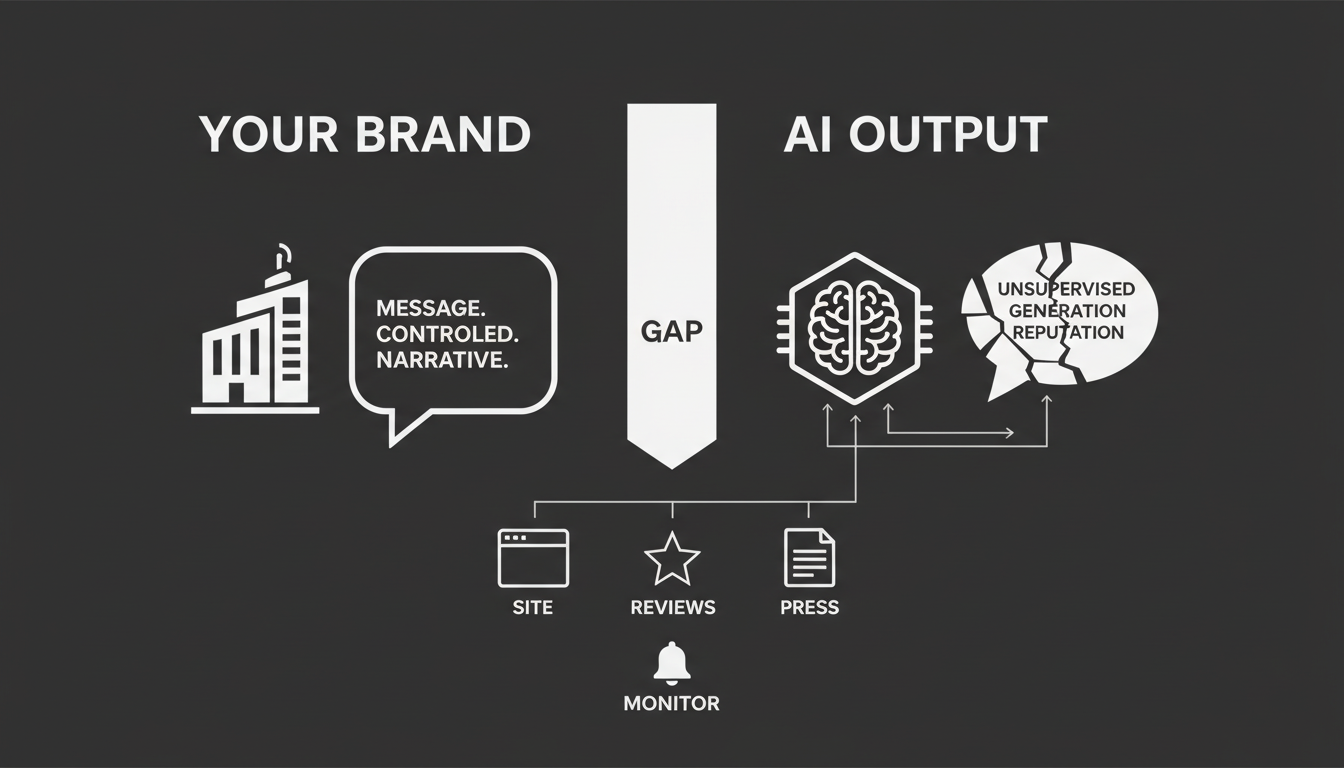

The AI Perception Gap is the space between what you believe your brand represents and what AI models actually tell people about you.

Maybe you sell a premium enterprise analytics platform. But the AI calls you a "budget dashboard tool for freelancers." Maybe you've invested millions in a specific market position. But the machine slots you into the wrong category entirely because your digital signals are inconsistent.

This isn't theoretical. It's happening right now, to companies that have no idea it's going on.

Why Should You Care How a Machine Describes You?

Because the machine is increasingly the first touchpoint. Not your website. Not your sales team. Not your ad.

When 73% of consumers are asking AI assistants for business recommendations, the AI's answer becomes your first impression. If that first impression is wrong, you never get a chance to correct it. The buyer moves on before they ever see your actual site.

Most people are still framing this as an SEO problem. It's not. It's a brand integrity problem. The AI isn't just ranking you. It's defining you.

What makes it worse: you lose deals you never knew existed. There's no impression to count, no click to track. The AI simply didn't mention you, or mentioned you incorrectly, and the buyer went somewhere else. Your analytics show nothing. Your pipeline just quietly shrinks.

How Does AI Actually Decide What Your Brand Is?

This is where people get it wrong. They assume the AI is reading their website and summarizing it. That's not how it works.

Large language models process your brand the way an analyst would if you handed them 10,000 data points and asked for a 150-word summary. The model pulls from your site, your competitors' sites, review platforms, press mentions, social media, Crunchbase profiles, LinkedIn pages, and whatever else it can find. Then it compresses all of that into a short, confident-sounding answer.

The compression is where the damage happens.

The Jargon Tax

If your marketing copy is full of vague, aspirational language, the AI doesn't know what to do with it. When a model encounters something like "AI-driven multi-modal interface for customer success," it tries to fit that into a category it already understands. Often it picks the simplest one. Your sophisticated platform becomes "a chatbot."

I've seen this over and over. Companies invest in positioning that sounds great on a slide deck but gives the AI nothing concrete to work with. The machine doesn't care about your vision. It cares about classifiable facts.

Category Buckets Are Prisons

Once an AI assigns you to a category, that label acts as a filter for every future query. If Gemini decides you're "accounting software" when you actually sell procurement automation, you're invisible for every procurement query. You might show up for accounting queries instead, but your pricing and features won't match what those buyers need.

You lose both ways.

The Freshness Problem

AI models care about recency. If your last major press mention was from 2022 and your G2 reviews dried up six months ago, the model's confidence in your data drops. When confidence drops, the AI hedges. Instead of "Brand X is the leading solution for..." you get "Brand X appears to have been known for..."

That hedging language kills conversions.

What Does Misclassification Actually Cost?

Let me make this concrete. AI misrepresentation hits companies in four specific ways.

Wrong positioning. The AI categorizes your enterprise solution as an SMB tool. You're excluded from high-value queries entirely. The buyers you want never see your name.

Missing features. Your core differentiators, things like API access, security compliance, or specific integrations, don't appear in the AI's answer because they aren't structured in a way the model can extract. A buyer asks "Does Brand X integrate with Salesforce?" and the AI says it doesn't know. That buyer picks the competitor whose integration is clearly documented.

Wrong pricing. The AI quotes your pricing from three years ago. Or worse, says "pricing is not publicly available," which in an AI answer reads as "this company is hiding something."

Toxic comparisons. The AI recommends your competitor as the "industry standard" and frames you as a "risky alternative." Not because that's true, but because the competitor has more recent, more structured data backing their position.

Here's a real example. An electric bike brand called AeroCycle found that Gemini consistently described them as selling "standard commuter bicycles." The words "electric" and "lightweight," their entire value proposition, were missing. Why? Their site lacked the specific structured data tags that would make those attributes machine-readable. They were excluded from every "best e-bike" query. Not because their product was bad. Because the machine couldn't see what made it different.

How Do These Gaps Form in the First Place?

AI models aren't malicious. They're pattern-matching engines doing their best with whatever data they can find. When they get your brand wrong, it's almost always because of one of these infrastructure failures.

Fragmented Identity

This is the most common cause, and it's entirely self-inflicted.

Your LinkedIn page says "Consultancy." Your Crunchbase profile says "Marketing Platform." Your website says "SaaS Tool." The AI sees three contradictory signals and has to pick one. Usually it picks the most generic option to avoid being wrong. Or it leaves you off competitive shortlists entirely.

How many companies have actually audited what their brand description says across every platform? In my experience, almost none. Every inconsistency is a signal to the AI that your identity is uncertain.
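A minimal sketch of that audit, assuming you've copied each platform's description by hand into a dictionary. The brand "Acme", the sample text, and the 0.9 threshold are all made up for illustration; the similarity score comes from Python's standard `difflib`, which is a crude text comparison, not a measure of how any AI model reads your brand.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't count."""
    return " ".join(text.lower().split())


def find_inconsistencies(descriptions: dict[str, str], threshold: float = 0.9):
    """Return (platform_a, platform_b, similarity) for every pair of
    descriptions whose similarity ratio falls below `threshold`."""
    mismatches = []
    for (name_a, text_a), (name_b, text_b) in combinations(descriptions.items(), 2):
        ratio = SequenceMatcher(None, normalize(text_a), normalize(text_b)).ratio()
        if ratio < threshold:
            mismatches.append((name_a, name_b, round(ratio, 2)))
    return mismatches


# Hypothetical descriptions, copied by hand from each profile.
descriptions = {
    "website": "Acme is a procurement automation platform for mid-size manufacturers.",
    "linkedin": "Acme is a procurement automation platform for mid-size manufacturers.",
    "crunchbase": "Acme builds accounting software for small businesses.",
}

for a, b, score in find_inconsistencies(descriptions):
    print(f"{a} vs {b}: similarity {score}")
```

Any pair that shows up in the output is a place where two of your own profiles disagree about what you are.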

Outdated Information

Models prioritize fresh data. If your competitors are publishing new content, earning new citations, and generating new reviews while your last blog post is from Q3 2023, the model's picture of your market shifts. Your competitors become the "current" players. You become the "former" player. Even if nothing about your actual product has changed.
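You can get a rough read on your own staleness with a few lines of code. This is only a sketch: the signal names, the dates, and the 180-day cutoff are assumptions for illustration, not thresholds any model is known to use.

```python
from datetime import date


def stale_signals(last_seen: dict[str, date], today: date, max_age_days: int = 180):
    """Return {signal: age_in_days} for signals older than `max_age_days`."""
    ages = {name: (today - seen).days for name, seen in last_seen.items()}
    return {name: age for name, age in ages.items() if age > max_age_days}


# Hypothetical dates of the most recent item for each signal type.
last_seen = {
    "blog_post": date(2023, 9, 30),
    "press_mention": date(2022, 11, 15),
    "g2_review": date(2025, 1, 10),
}

for name, age in stale_signals(last_seen, today=date(2025, 3, 1)).items():
    print(f"{name}: last updated {age} days ago")
```

Passing `today` in explicitly keeps the check repeatable, so you can rerun it against the same dates and compare results over time.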

Competitor Displacement

This one is subtle and dangerous. Your competitors don't need to attack you directly. They just need to flood the AI's data sources with their own structured, authoritative signals.

If a competitor is aggressively publishing content that associates their brand with "Enterprise CRM," the AI's probabilistic weights shift. The competitor becomes the category standard. You become the alternative. You didn't change anything. They just outpaced you in the machine's information diet.

How Do You Find Out What the AI Actually Thinks?

You can't fix what you can't see. Traditional SEO tools are completely blind to this problem because they're built to track Google's blue links, not AI-generated answers.

Run a Prompt Audit

Start by asking the major AI models the questions your buyers would actually ask. Not just "What is [Brand Name]?" but the full range of buying intent.

Ask ChatGPT, Gemini, Claude, and Perplexity: "What is [Brand Name] and what problem does it solve?" Compare that answer to your actual positioning.

Ask "How much does [Brand Name] cost?" If the AI says pricing isn't available, you have a data extraction gap.

Ask "Compare [Brand Name] vs. [Competitor]." This reveals whether you're treated as a peer or a footnote.

The results will probably surprise you. I've seen companies that thought their positioning was airtight discover that one or more major AI models had them in completely the wrong category.
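If you want to keep the audit organized, a small script can generate the full grid of questions and collect the answers in one place. This is a sketch under assumptions: the brand names are placeholders, and `fake_ask` is a hypothetical stand-in for however you actually get answers, whether that's pasting them in by hand or calling each vendor's API yourself.

```python
from typing import Callable

# Question templates taken from the audit described above.
TEMPLATES = [
    "What is {brand} and what problem does it solve?",
    "How much does {brand} cost?",
    "Compare {brand} vs. {competitor}.",
]

MODELS = ["ChatGPT", "Gemini", "Claude", "Perplexity"]


def build_prompts(brand: str, competitor: str) -> list[str]:
    """Fill the question templates with the brand and competitor names."""
    return [t.format(brand=brand, competitor=competitor) for t in TEMPLATES]


def run_audit(brand: str, competitor: str, ask: Callable[[str, str], str]):
    """Ask every model every prompt; return rows of (model, prompt, answer)."""
    return [
        (model, prompt, ask(model, prompt))
        for model in MODELS
        for prompt in build_prompts(brand, competitor)
    ]


def fake_ask(model: str, prompt: str) -> str:
    """Hypothetical stand-in for a real API call or a manually pasted answer."""
    return f"[{model} answer to: {prompt}]"


rows = run_audit("Acme", "Globex", fake_ask)
for model, prompt, answer in rows:
    print(f"{model:<11} | {prompt}")
```

Keeping the rows as plain tuples makes it easy to dump them into a spreadsheet and mark each answer against your actual positioning.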

Test Across Multiple Models

A mistake I see constantly: companies test one AI model and assume the results apply everywhere. They don't.

Each model has different training data, different retrieval methods, and different risk profiles. ChatGPT casts a wide net and rewards community buzz, but its citations can be inconsistent. Gemini leans heavily on Google's Knowledge Graph and schema markup, favoring established incumbents. Perplexity relies on live web retrieval and rewards data-driven thought leadership and PR citations. Claude is conservative and risk-averse, requiring strong authority signals before it will recommend a brand confidently.

Your brand might look great in ChatGPT and be completely misrepresented in Gemini. If you're only checking one model, you're flying blind. Akii's AI Search Tracker provides a live, multi-model visibility dashboard that shows exactly where these gaps exist across engines, so you're not running this audit manually every week.
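For a manual version of that cross-model check, it's enough to write down the category each model put you in and compare it to the one you intended. A minimal sketch, with hypothetical labels recorded by hand:

```python
def category_gaps(intended: str, observed: dict[str, str]) -> dict[str, str]:
    """Return {model: category} for models whose label differs from `intended`."""
    want = intended.strip().lower()
    return {
        model: label
        for model, label in observed.items()
        if label.strip().lower() != want
    }


# Hypothetical labels, recorded by hand from each model's answer.
observed = {
    "ChatGPT": "Procurement automation",
    "Gemini": "Accounting software",
    "Claude": "procurement automation",
    "Perplexity": "Expense management",
}

gaps = category_gaps("Procurement automation", observed)
for model, label in gaps.items():
    print(f"{model} has you filed under: {label}")
```

The comparison is an exact match after lowercasing, so near-synonyms will be flagged too. That's deliberate in this sketch: you decide by eye whether a different label is harmless or a real misclassification.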

How Do You Actually Fix the Gap?

Once you know where the AI is getting you wrong, you can't just submit a correction ticket. There's no "Hey ChatGPT, please update my brand description" form. You have to engineer the fix by giving the models better raw material to work with.

This comes down to technical clarity, external validation, and continuous monitoring.

Make Your Brand Machine-Readable

This is the foundation. If the AI can't extract clean, structured facts from your digital presence, it will guess. And its guesses will be wrong.

Unify your entity. Create one master description of your company. One clear category, one consistent set of facts. Then replicate that exact language across your website's About page, your LinkedIn Company Page, your Crunchbase profile, and your Wikidata entry. Word for word. This consistency forces the model to accept your definition as the single source of truth instead of averaging across contradictory signals.

Deploy schema markup. AI agents don't read your pages the way humans do. They extract structured data. If your pricing is just text on a page, the model might miss it or misread it. Describe your core attributes with Schema.org types such as Product, Offer, and Organization. This turns your marketing copy into machine-parseable facts.
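Here's a rough sketch of what that markup could look like, generated as JSON-LD with Python's standard `json` module. The company, URLs, price, and category are invented placeholders. The property names (`sameAs`, `category`, `brand`, `offers`, `price`, `priceCurrency`) are standard Schema.org vocabulary, but which ones you need depends on your product, so treat this as a starting point rather than a complete schema.

```python
import json


def build_jsonld(org: dict, product: dict) -> str:
    """Return a JSON-LD string with one Organization and one Product node."""
    org_id = org["url"] + "#org"
    graph = [
        {
            "@type": "Organization",
            "@id": org_id,
            "name": org["name"],
            "url": org["url"],
            "description": org["description"],
            # Links to the same entity on other platforms.
            "sameAs": org["profiles"],
        },
        {
            "@type": "Product",
            "name": product["name"],
            "description": product["description"],
            "category": product["category"],
            # Point back at the Organization node above.
            "brand": {"@id": org_id},
            "offers": {
                "@type": "Offer",
                "price": product["price"],
                "priceCurrency": product["currency"],
            },
        },
    ]
    return json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)


# Hypothetical company and product details.
org = {
    "name": "Acme",
    "url": "https://www.example.com/",
    "description": "Acme is a procurement automation platform for mid-size manufacturers.",
    "profiles": ["https://www.linkedin.com/company/example"],
}
product = {
    "name": "Acme Procure",
    "description": "Procurement automation with Salesforce integration.",
    "category": "Procurement automation",
    "price": "499.00",
    "currency": "USD",
}

jsonld = build_jsonld(org, product)
print('<script type="application/ld+json">')
print(jsonld)
print("</script>")
```

The printed `<script>` block is what goes into the page's HTML. Note that the Organization description reuses the same master description you use everywhere else, word for word.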

Create quotable content. Structure your high-traffic pages so the AI can easily extract key statements. Put TL;DR summaries at the top. Use question-based headings like "What is [Product]?" followed by a short, declarative answer. You're pre-compressing the information so the AI doesn't have to guess what matters.

Akii's Website Optimizer can analyze your pages and generate the specific schema markup, AI-friendly robots.txt configurations, and LLM-readable directories your site needs. It's designed to handle the technical side without requiring your engineering team to become AI search experts.

Build External Proof

Technical clarity makes your site readable. External validation makes it trustworthy.

AI models are inherently cautious. They rely on third-party corroboration before they'll confidently recommend anything. If you claim to be the fastest enterprise CRM on your own blog, the AI treats that as marketing. If Gartner, TechCrunch, or a peer-reviewed study says the same thing, the AI treats it as fact.

Target authority sources. Focus PR efforts on the publications and analysts that AI models treat as ground truth: industry reports, major tech publications, academic citations.

Manage review velocity. Models ingest user sentiment directly from platforms like G2, Capterra, and Trustpilot. A steady flow of recent positive reviews signals that your brand is current and trusted. A stale review profile signals the opposite.

This isn't new advice for anyone who's done PR or reputation management. What's new is that the audience for these efforts isn't just human readers anymore. The AI is reading them too, and weighting them heavily.

Monitor Continuously

Here's the part most companies skip. And it's the part that matters most long-term.

Closing the perception gap isn't a one-time project. Models constantly ingest new data. Your competitors are constantly publishing. The narrative can drift back into misclassification within weeks if you're not watching.

You need an ongoing system that tracks how AI models describe your brand, alerts you when something changes, and gives you enough lead time to correct it before it affects your pipeline. Akii's AI Brand Audit runs 24/7 automated monitoring across seven dimensions, tracking discoverability, content quality, and reputation. If your sentiment drops or your narrative drifts, you get an alert on day one, not at the end of the quarter.
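If you build something in-house, the core check can be very simple. The sketch below scans one day's recorded answers for two warning signs described earlier: differentiating terms that have gone missing, and hedging language. The hedge phrases, the sample answers, and the function names are assumptions for illustration; how you collect the answers each day is up to you.

```python
# Assumed hedge markers; extend with whatever phrasing you actually see.
HEDGES = ("appears to", "reportedly", "may have")


def check_answer(answer: str, required_terms: list[str]) -> list[str]:
    """Return a list of human-readable problems found in one AI answer."""
    text = answer.lower()
    problems = [f"missing term: {t}" for t in required_terms if t.lower() not in text]
    problems += [f"hedging phrase: {h}" for h in HEDGES if h in text]
    return problems


def scan_snapshot(snapshot: dict[str, str], required_terms: list[str]) -> dict[str, list[str]]:
    """Check one day's answers; return only the models that have problems."""
    report = {m: check_answer(a, required_terms) for m, a in snapshot.items()}
    return {m: problems for m, problems in report.items() if problems}


# Hypothetical answers recorded today, one per model.
snapshot = {
    "ChatGPT": "AeroCycle makes lightweight electric bikes for commuters.",
    "Gemini": "AeroCycle sells standard commuter bicycles.",
    "Claude": "AeroCycle appears to have been known for lightweight electric bikes.",
}

for model, problems in scan_snapshot(snapshot, ["electric", "lightweight"]).items():
    print(model, problems)
```

Run on a schedule and wired to an email or chat alert, a check like this flags the AeroCycle-style failure as soon as the key words drop out of an answer. Substring matching is blunt, so expect to tune the term and hedge lists as you see real output.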

Is This Really Different From Traditional Brand Management?

Yes. And the difference matters.

Traditional brand management assumed you controlled the narrative because you controlled the channel. You wrote the ad. You designed the landing page. You trained the sales rep. The customer interacted with your words.

In an AI-first world, you don't control the channel. The AI is the channel. It's constructing a version of your brand story in real time, based on whatever signals it can find. You're not the author anymore. You're the editor.

That's a fundamental shift in how brand management works. Companies that treat it like a minor tweak to their existing SEO strategy are going to fall behind the ones that recognize it for what it is: a new discipline entirely.

What Happens If You Do Nothing?

I want to be direct about this because I think the risk is genuinely underappreciated.

If you allow the AI Perception Gap to persist, you are letting a probability engine define your value proposition, choose your category, and decide whether to send customers to you or your competitors. Every day that your digital signals are inconsistent, outdated, or unstructured, the machine's picture of you degrades a little more.

Meanwhile, the companies actively managing their AI presence are getting stronger. Their signals are cleaner. Their citations are fresher. Their entity profiles are unified. The gap between you and them widens, and it compounds.

This isn't a problem that gets better with time. It gets worse.

Where Do You Start?

If you've read this far and you're wondering what to do first, here's the practical sequence.

Week one: Run a prompt audit across ChatGPT, Gemini, Claude, and Perplexity. Document every inaccuracy, every missing feature, every wrong category assignment.

Week two: Audit your entity consistency across every platform where your brand appears: LinkedIn, Crunchbase, Wikidata, your own site. Fix the contradictions.

Week three: Add schema markup to your highest-traffic pages. Make your pricing, features, and category machine-readable.

Week four: Build a monitoring system. Whether you use Akii's tools or build something in-house, you need ongoing visibility into how AI models are representing you.

The brands that win in 2026 won't be the ones with the biggest ad budgets or the cleverest taglines. They'll be the ones that understood, early, that the AI's version of their story matters as much as their own. And they'll be the ones that took control of it before the gap became too wide to close.

Start with a full AI Brand Audit from Akii to see exactly where you stand today. Because the machine already has an opinion about you. The only question is whether you know what it is.