Why a Single AI Visibility Score Tells You Almost Nothing

Most marketing teams haven't caught up to this yet: the rules of search changed, but their measurement habits didn't.

For a decade, SEO offered something comfortable. If you ranked number one on Monday, you probably ranked number one on Friday. You ran an audit, fixed some errors, checked back in a month. That worked because the environment was predictable.

That world is gone.

AI models like ChatGPT, Gemini, and Perplexity don't retrieve a fixed list of links. They synthesize a new answer every time someone asks a question. The output depends on context, on recently ingested data, and on probabilistic sampling, so the same prompt can return a different answer an hour later. So when someone tells you your AI Visibility Score is 75, the honest follow-up is: compared to what? Compared to when?

A score without history is just a number. A number without direction is noise.

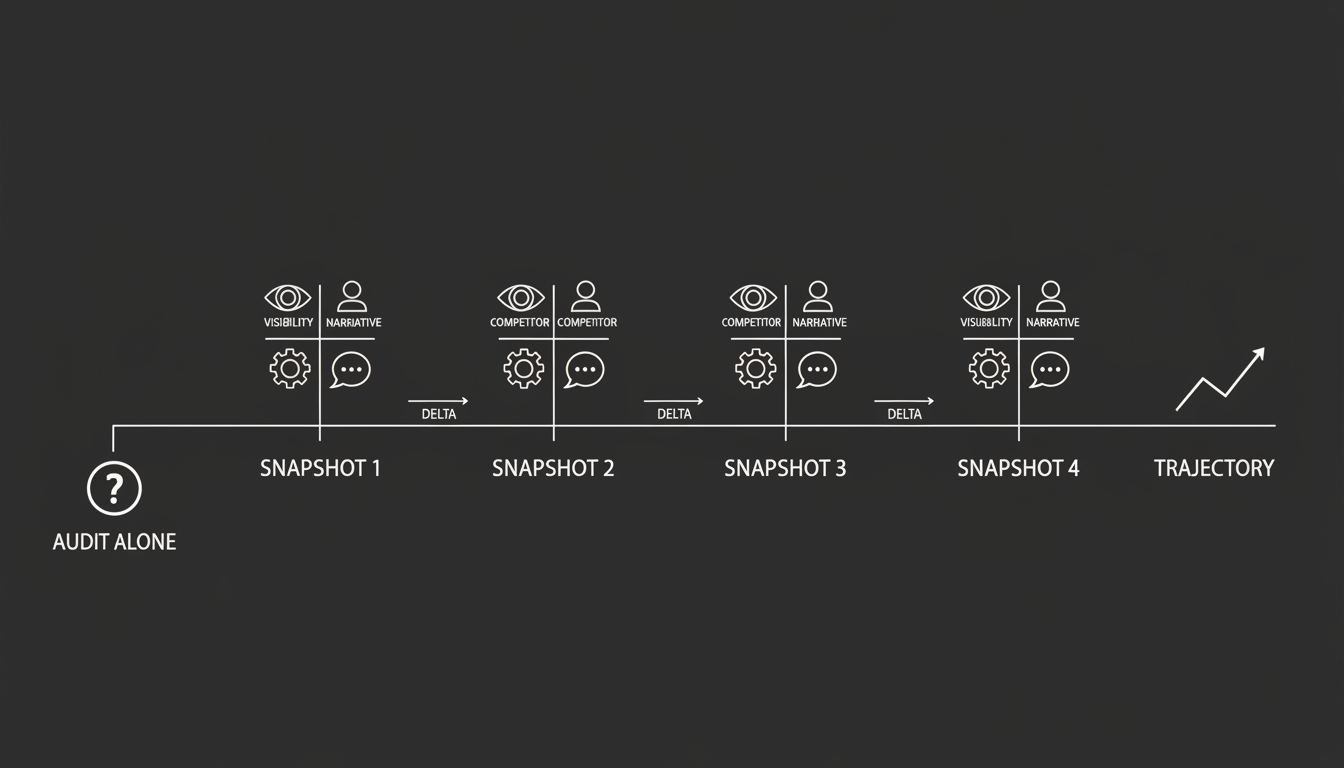

What's missing from most AI search strategies is continuous measurement. I think of it as the Brand State Snapshot. Not a one-time audit. Not a quarterly PDF. A timestamped, comparable record of how AI engines perceive your brand at a specific moment, designed to be stacked against previous records so you can actually see what's happening over time.

Why Does AI Visibility Keep Shifting When Nothing on Your Site Changed?

This is the question that trips up experienced marketers most. In traditional search, if your rankings dropped, it usually meant one of two things: you broke something technical, or a competitor outranked you. Cause and effect were relatively clear.

In AI search, your visibility can change because the narrative drifted.

Here's what that looks like in practice. Day 1, an AI model digests a TechCrunch article calling you a market leader. Your sentiment is positive. Day 14, the same model ingests a viral LinkedIn post complaining about your customer support. Day 15, it re-synthesizes its answer. It still mentions you, but now adds a cautionary note about support delays.

You haven't moved down a rank. There is no rank. What changed is the story being told about you. A traditional tracker sees you're still present and reports "No Change." But the actual answer a buyer reads is materially different.

It gets worse. Your competitors aren't sitting still. If a competitor launches a campaign to saturate the web with "enterprise" citations, they alter the probabilistic weight of the entire category. Your brand didn't change, but the context around it did. Without a record of the market before their campaign, you can't even diagnose why you're suddenly losing ground on "Best Enterprise Tool" queries.

I've watched this pattern play out across multiple technology cycles. The companies that win aren't the ones with the best single measurement. They're the ones who can see the trajectory.

Visibility in 2026 is a movie, not a photograph. If you're only looking at individual frames, you miss the plot.

What's Actually Wrong With the PDF Audit?

The most dangerous artifact in modern marketing might be the one-time audit exported to PDF.

Agencies love them. Internal teams love them. They feel thorough. They look professional in a slide deck. But in the context of AI search, they fail for two specific reasons.

No comparison baseline

Say you run a scan and find your Brand Understanding Score is 65 out of 100. Is that good? Maybe, if you were at 40 last week. Is it a crisis? Yes, if you were at 90 last week.

Without a previous snapshot, a score has no vector. You don't know if your strategy is working or failing. You're making decisions without knowing which direction you're moving.
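To make the "no vector" point concrete, here is a minimal sketch of reporting a score together with its direction. The function name `describe_score` and the output wording are my own illustration, not part of any particular tool.

```python
from typing import Optional


def describe_score(current: float, previous: Optional[float]) -> str:
    """Report a score together with its direction since the last snapshot."""
    if previous is None:
        # Without a baseline, the number alone says nothing about direction.
        return f"{current:.0f} (no baseline, direction unknown)"
    change = current - previous
    if change == 0:
        return f"{current:.0f} (flat)"
    direction = "up" if change > 0 else "down"
    return f"{current:.0f} ({direction} {abs(change):.0f} since last snapshot)"


print(describe_score(65, None))
print(describe_score(65, 40))
print(describe_score(65, 90))
```

The same 65 reads as unknown, as a recovery, or as a crisis depending only on what came before it.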

No context for volatility

AI models are non-deterministic. Check a prompt once and your brand is missing. That might be random fluctuation. Check it every day for a week and you're missing every time. That's a structural problem.

A one-time audit captures the noise. A series of snapshots captures the signal.
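One rough way to separate the two is to refuse to draw any conclusion from a single check and to look at the miss rate over a run of checks instead. The sketch below assumes one presence check per day; the thresholds (`min_checks`, `structural_threshold`) are illustrative values I picked, not established cutoffs.

```python
def classify_absence(
    daily_present: list[bool],
    min_checks: int = 5,
    structural_threshold: float = 0.8,
) -> str:
    """Classify a run of daily presence checks for one prompt."""
    if len(daily_present) < min_checks:
        # A single reading (or a handful) cannot tell noise from signal.
        return "insufficient data"
    missing_rate = daily_present.count(False) / len(daily_present)
    if missing_rate == 0:
        return "present"
    if missing_rate >= structural_threshold:
        return "structural"
    return "fluctuation"


print(classify_absence([False]))               # one bad reading
print(classify_absence([True] * 6 + [False]))  # missing once in a week
print(classify_absence([False] * 7))           # missing every day for a week
```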

I've seen teams panic and redirect resources based on a single bad reading that turned out to be meaningless. Meanwhile, actual structural erosion of their brand went undetected because nobody was tracking the pattern. Single-point measurement doesn't just fail to help. It actively misleads.

What Should Replace the Audit?

If the PDF audit is dead, the replacement isn't a better PDF. It's a different kind of record entirely.

A Brand State Snapshot is an immutable, timestamped capture of your brand's total reality inside the AI search system. Not just a visibility score. A complete view of how the machine perceives you at a specific moment in time.

To build a valid snapshot, you need to capture four distinct states simultaneously. Skip any one of them and you're flying partially blind.

The Visibility State: Are we part of the conversation?

This measures raw presence. Your inclusion rate across a meaningful set of prompts covering definitional queries ("What is X?"), evaluative queries ("What's the best X?"), and transactional queries ("Where should I buy X?").

The metric looks something like: "Appeared in 42% of prompts across 5 models."

This is your top-line market share in AI answers. If this number drops between snapshots, you have a fundamental discoverability problem. If it's stable, the issue is somewhere else.
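As a sketch of how that number could be computed, assume each check is stored as one record per model and prompt. The field names (`model`, `query_type`, `mentioned`) and the sample records are hypothetical.

```python
from collections import defaultdict

# One record per (model, prompt) check. Field names and values are illustrative.
checks = [
    {"model": "model-a", "query_type": "definitional", "mentioned": True},
    {"model": "model-a", "query_type": "evaluative", "mentioned": False},
    {"model": "model-b", "query_type": "evaluative", "mentioned": True},
    {"model": "model-b", "query_type": "transactional", "mentioned": False},
]


def inclusion_rate(records: list[dict]) -> float:
    """Fraction of checks in which the brand appeared in the answer."""
    return sum(r["mentioned"] for r in records) / len(records)


def inclusion_by_query_type(records: list[dict]) -> dict[str, float]:
    """Inclusion rate broken down by definitional/evaluative/transactional."""
    grouped = defaultdict(list)
    for r in records:
        grouped[r["query_type"]].append(r)
    return {qt: inclusion_rate(group) for qt, group in grouped.items()}


print(f"Appeared in {inclusion_rate(checks):.0%} of prompts")
print(inclusion_by_query_type(checks))
```

The breakdown by query type is worth keeping alongside the top-line figure, because a stable overall rate can hide a drop in one category.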

The Competitive State: Who is standing next to us?

AI search is zero-sum in a way traditional search wasn't. There's no page two. If you aren't in the shortlist, someone else is. A snapshot must record exactly which competitors appeared alongside you and how often.

The metric: Share of Voice relative to each named competitor.

This matters because it detects competitive insertion early. If a brand you've never seen before suddenly appears in your snapshot, that's an early warning. Someone just started a campaign, and it's working. You want to know that before it costs you revenue, not after.
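Here is a small sketch of both ideas, assuming each AI answer has already been reduced to the set of brands it names. Share of Voice is defined here as each brand's share of all brand mentions, which is one common definition rather than the only one. The brand names are placeholders.

```python
from collections import Counter


def share_of_voice(answers: list[set[str]]) -> dict[str, float]:
    """Each brand's share of all brand mentions across the captured answers."""
    counts = Counter(brand for brands in answers for brand in brands)
    total = sum(counts.values())
    return {brand: n / total for brand, n in counts.items()} if total else {}


def new_entrants(previous: dict[str, float], current: dict[str, float]) -> set[str]:
    """Brands present in the current snapshot but absent from the previous one."""
    return set(current) - set(previous)


last_week = share_of_voice([{"Us", "Rival A"}, {"Us"}])
this_week = share_of_voice([{"Us", "Rival A"}, {"Us", "Rival B"}])

print(new_entrants(last_week, this_week))
```

An empty result is the normal case. A non-empty one is the early warning worth investigating.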

The Technical State: Are we machine-readable right now?

This captures the infrastructure layer. The state of your Schema markup, your robots.txt configuration, your Knowledge Graph connections. The plumbing that AI models read when they decide whether to include you.

The metric: "Product Schema Validated: Yes/No."

Here's where this gets practical. If your Visibility State drops, the first thing you check is your Technical State. Did a code deployment break your schema? Did someone accidentally block a crawler? A snapshot lets you correlate visibility drops with technical changes. Without it, you're guessing.
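The "did someone accidentally block a crawler?" check can be automated with Python's standard `urllib.robotparser`. This sketch checks a robots.txt body that has already been fetched. The user agent `GPTBot` and the URL are examples only, so confirm the current crawler tokens in each vendor's own documentation.

```python
from urllib.robotparser import RobotFileParser


def crawler_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots.txt permits `user_agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Example robots.txt that blocks one AI crawler and allows everything else.
robots_txt = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(crawler_allowed(robots_txt, "GPTBot", "https://example.com/pricing"))
print(crawler_allowed(robots_txt, "SomeOtherBot", "https://example.com/pricing"))
```

Storing the result of a check like this in every snapshot is what lets you line up a visibility drop against the deployment that caused it.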

The Narrative State: What story is being told about us?

This is the qualitative layer. It captures the actual text of the AI's answer, analyzing sentiment, the adjectives used, the specific features cited, and any claims being made.

The metric might read: "Sentiment: Neutral. Key Attribute: 'Expensive'."

This is where you detect hallucinations and sentiment drift. If the narrative shifts from "innovative" to "legacy," your revenue will suffer long before your traffic numbers move. Most brands don't have this early warning system. They should.
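Pulling the four states together, a snapshot record might look like the sketch below. The class and field names are my own simplification, and a real snapshot would carry far more detail (full answer text, per-competitor shares, per-model results). The point is the shape: one timestamp, four states, and no way to edit it after capture.

```python
from dataclasses import dataclass, FrozenInstanceError
from datetime import datetime, timezone


@dataclass(frozen=True)
class BrandStateSnapshot:
    captured_at: datetime                 # when the capture happened
    inclusion_rate: float                 # Visibility State
    co_mentioned_brands: frozenset[str]   # Competitive State (simplified)
    product_schema_valid: bool            # Technical State
    sentiment: str                        # Narrative State
    key_attributes: frozenset[str]        # Narrative State


snapshot = BrandStateSnapshot(
    captured_at=datetime(2025, 11, 1, tzinfo=timezone.utc),  # example date
    inclusion_rate=0.42,
    co_mentioned_brands=frozenset({"Competitor X"}),
    product_schema_valid=True,
    sentiment="Neutral",
    key_attributes=frozenset({"Expensive"}),
)

try:
    snapshot.sentiment = "Positive"  # rewriting history is not allowed
except FrozenInstanceError:
    print("snapshots cannot be edited after capture")
```

Using `frozen=True` with `frozenset` fields keeps the record immutable in practice, which is what makes later comparisons trustworthy.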

How Do You Actually Use Snapshot Comparisons?

Snapshots sitting in a database are worth nothing. Their value comes entirely from comparison. You need a workflow that analyzes the delta between snapshots and turns that into specific actions.

Here's a practical four-step process.

Step 1: Establish the baseline

Before you change anything, freeze your current state.

Run a full AI brand audit across ChatGPT, Gemini, Claude, and Perplexity. Save this data as your "Golden Master." This is the benchmark against which all future performance is measured. Note the date and any active campaigns.

This step feels tedious. It's also the most important thing you'll do. Every insight that follows depends on having a clean starting point.

Step 2: Set the right comparison interval

You can't compare snapshots randomly. You need a cadence that matches how fast your market moves.

If you're in a high-volatility sector like SaaS, crypto, or news, take snapshots daily. The content these models draw on changes quickly in these spaces, and news cycles shift sentiment overnight. If you're in a more stable sector like manufacturing or B2B services, weekly is usually sufficient.

The key is consistency. Irregular snapshots create gaps in your data that make it harder to identify when a change actually started.
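A quick sanity check on your own cadence is to look for gaps longer than the interval you committed to. A minimal sketch, with a hypothetical `find_gaps` helper and made-up dates:

```python
from datetime import date, timedelta


def find_gaps(
    snapshot_dates: list[date], expected_interval: timedelta
) -> list[tuple[date, date]]:
    """Return consecutive snapshot pairs that are further apart than expected."""
    ordered = sorted(snapshot_dates)
    return [
        (earlier, later)
        for earlier, later in zip(ordered, ordered[1:])
        if later - earlier > expected_interval
    ]


dates = [date(2025, 11, 1), date(2025, 11, 2), date(2025, 11, 3), date(2025, 11, 6)]
print(find_gaps(dates, timedelta(days=1)))
```

Any change that shows up right after a gap can only be dated to somewhere inside that gap, which is exactly the ambiguity a fixed cadence is meant to avoid.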

At Akii, we built the AI Brand Audit to capture and timestamp these states automatically, specifically because doing this manually doesn't scale.

Step 3: Read the deltas, not the reports

This is where most teams waste time. Don't read the full reports. Look only at what changed between Snapshot A and Snapshot B.

The Competitor Gain Delta. Your inclusion rate stayed flat, but Competitor X jumped 10 percentage points. Compare their Narrative State across the two snapshots. Did the AI start citing a new source for them? Did they publish a new report? Once you identify the new citation source, you can target it for your own campaign.

The Hallucination Delta. Your Brand Understanding score dropped 15 points. Check the Narrative State. The AI is now quoting your pricing as "$500" instead of "$50." This is a critical error. Deploy corrected Schema immediately and request a re-crawl wherever the platform offers a way to do so. Without the snapshot comparison, you might not catch this for weeks.

The Sentiment Delta. Your inclusion is stable, but sentiment shifted from Positive to Neutral. The AI has ingested cautionary language. Check the Competitive State. Are competitors being framed as "safer"? If so, you need fresh positive signals. A review generation campaign on Trustpilot or G2 can shift this by giving the model recent, positive data to weight.

Each of these scenarios is invisible to a one-time audit. They only become visible through comparison.
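The three deltas above can be sketched as a single comparison function. This is an illustration under simplifying assumptions: snapshots are plain dictionaries, competitor share is stored in whole percentage points, and the key names (`competitor_share_pct`, `claims`, `sentiment`) are hypothetical.

```python
def read_deltas(before: dict, after: dict, gain_threshold_pts: int = 10) -> list[str]:
    """List only what changed between two snapshots."""
    findings = []

    # Competitor Gain Delta: a competitor's share rose by the threshold or more.
    for brand, share in after["competitor_share_pct"].items():
        gain = share - before["competitor_share_pct"].get(brand, 0)
        if gain >= gain_threshold_pts:
            findings.append(f"competitor gain: {brand} +{gain} pts")

    # Hallucination Delta: a factual claim the AI makes about you changed.
    for claim, value in after["claims"].items():
        old = before["claims"].get(claim)
        if old is not None and old != value:
            findings.append(f"claim changed: {claim} {old} -> {value}")

    # Sentiment Delta: the overall tone shifted.
    if after["sentiment"] != before["sentiment"]:
        findings.append(f"sentiment: {before['sentiment']} -> {after['sentiment']}")

    return findings


snapshot_a = {
    "competitor_share_pct": {"Competitor X": 20},
    "claims": {"price": "$50"},
    "sentiment": "Positive",
}
snapshot_b = {
    "competitor_share_pct": {"Competitor X": 30},
    "claims": {"price": "$500"},
    "sentiment": "Neutral",
}

for finding in read_deltas(snapshot_a, snapshot_b):
    print(finding)
```

Comparing a snapshot against itself returns an empty list, which is the "nothing to read today" case.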

Step 4: Validate and close the loop

After taking action, wait for the next snapshot.

Did the delta close? Did the hallucination disappear? Did sentiment recover?

This is where you prove ROI to leadership. Not with vague claims about "brand health," but with specific evidence: "On November 1st, we were invisible for this query category. We deployed corrected Schema. On November 15th, we're recommended. Here's the chart."
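The before-and-after evidence can be produced mechanically. The sketch below assumes each snapshot records, per query category, whether the brand was recommended. The category names and the `validate_fix` helper are made up for illustration.

```python
def validate_fix(before: dict[str, bool], after: dict[str, bool]) -> dict[str, list[str]]:
    """Compare per-category recommendation status before and after an action."""
    categories = sorted(set(before) | set(after))
    return {
        "recovered": [
            c for c in categories if after.get(c, False) and not before.get(c, False)
        ],
        "still_missing": [
            c for c in categories if not after.get(c, False) and not before.get(c, False)
        ],
        "regressed": [
            c for c in categories if before.get(c, False) and not after.get(c, False)
        ],
    }


november_1 = {"enterprise": False, "pricing": True, "integrations": False}
november_15 = {"enterprise": True, "pricing": True, "integrations": False}

print(validate_fix(november_1, november_15))
```

The "regressed" list matters as much as the "recovered" one, since a fix in one category should not quietly cost you another.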

I've found this kind of before-and-after evidence is the fastest way to get continued investment in AI search strategy. It turns an abstract problem into a concrete business case.

Why Is This a Strategy Shift and Not Just Better Reporting?

There's a meaningful difference between reporting and infrastructure. Reporting is passive. It looks backward and asks, "What happened?"

Infrastructure is active. It maintains a continuous memory of the market and asks how the environment is changing and whether you're keeping pace.

Adopting a snapshot strategy means making that shift. You're not producing reports for a quarterly review. You're building a system that gives you persistent awareness of how AI engines perceive your brand, your competitors, and your category.

Here's why the memory part matters so much. AI models have a context window, but they don't have a long-term memory of your brand strategy. You have to provide that memory yourself.

By maintaining a historical chain of Brand State Snapshots, you create something your competitors probably don't have. You're the only one who knows that six months ago, Gemini preferred your brand because of a specific article. If Gemini stops citing that article, you know exactly what to fix. Your competitors, relying on one-time audits, will be baffled by their sudden drop in leads.

That information asymmetry is a real advantage. It compounds over time.

Where Does This Leave Most Brands Right Now?

Most brands are still operating with measurement habits built for a search environment that no longer exists. They're running periodic audits, getting a score, and treating it as ground truth. In a stable system, that was fine. In a probabilistic, constantly shifting system, it's a recipe for slow, invisible decline.

The fix isn't complicated. It's just different from what most teams are used to.

Stop treating AI visibility as a number to check. Start treating it as a signal to track. Build the baseline. Set the cadence. Read the deltas. Act on what changed. Validate the fix.

If you want to see what this looks like in practice, Akii's feature set is built around exactly this workflow. We designed it because I got tired of watching smart teams make bad decisions based on incomplete data.

Data without history is noise. The brands that win in AI search won't be the ones with the best single score. They'll be the ones who can see the trajectory and act on it before the competition even knows something changed.

Stop auditing. Start tracking.