The Scorecard Changed and Nobody Sent You the Memo

I keep hearing the same story from marketing leaders. It goes like this:

"We ran a full technical audit. Our site is faster. Our domain authority is higher. Our content is fresher, deeper, better researched. We rank number one on Google for our core category terms."

Then they open ChatGPT. Or Gemini. Or Perplexity. They type "What's the best software for [their category]?" And the AI recommends their competitor.

The competitor with the slower site. The one whose blog hasn't been updated in six months. The one they beat on every traditional SEO metric that exists.

This isn't an edge case. It's a pattern I've watched play out across dozens of categories over the past year.

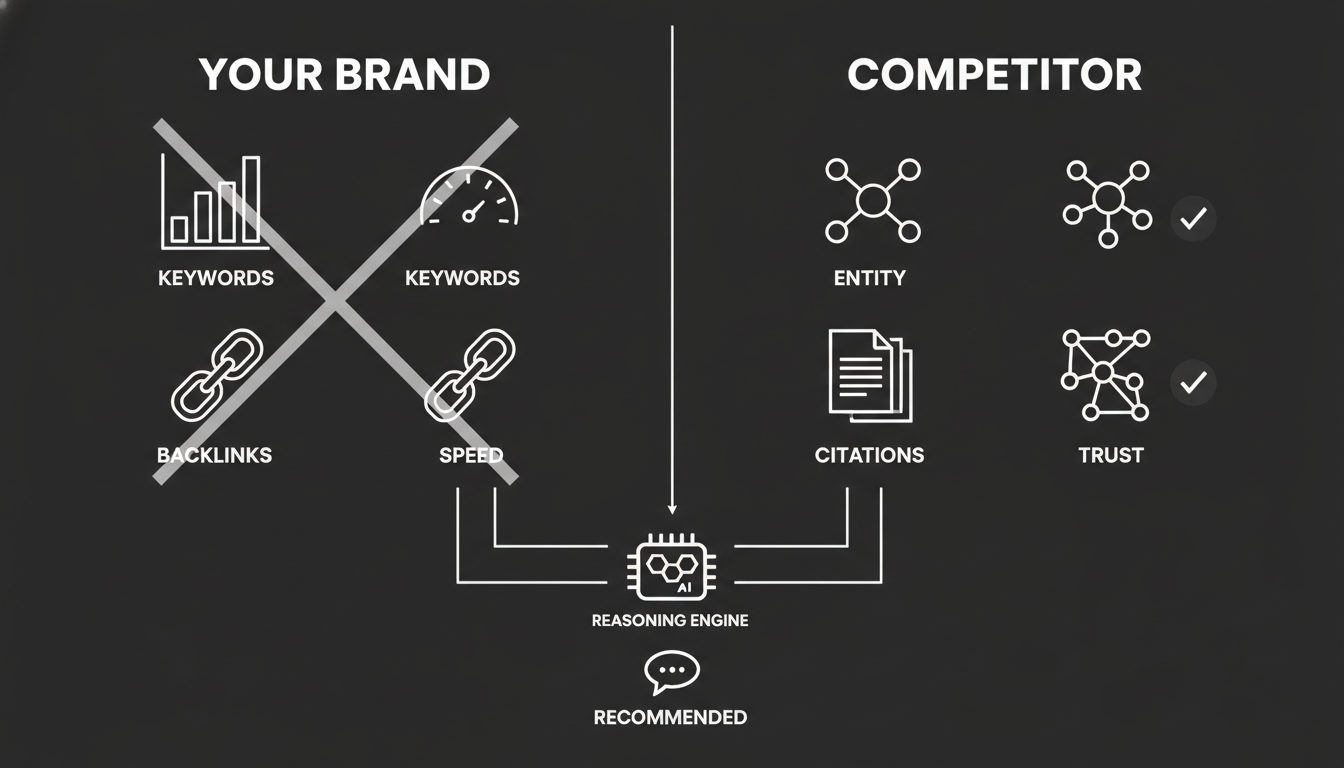

The reason is straightforward, but it requires accepting something uncomfortable: the scorecard changed. You're optimizing for a search engine. Your competitor, whether by accident or intent, is optimized for a reasoning engine.

Those are not the same thing.

Why Does a "Worse" Site Beat a "Better" One in AI Search?

In traditional SEO, the algorithm is a filter. It looks at millions of pages and filters them by relevance and authority. Poor technical SEO gets you filtered out. Good technical SEO keeps you in the running.

AI search doesn't work like a filter. It works like a synthesizer.

Think about how a human analyst recommends a product. They don't care if the company's website loads in 0.5 seconds or 1.5 seconds. They care about trust, clarity, and consensus. Do I trust this company? Do I understand exactly what they do? Does the rest of the world agree?

Your competitor is winning because the AI model has a clear, consistent understanding of who they are. In the model's internal knowledge graph, your competitor exists as a well-defined entity. Your brand, despite all that perfect SEO, might exist as a loose collection of keywords rather than a verified entity.

The AI prefers the verified node it understands over the optimized page it merely reads.

That distinction matters more than any Core Web Vitals score.

What Is "Authority Bias" and Why Does It Favor Incumbents?

One of the most counterintuitive things I've seen in AI visibility data is how heavily these models lean on what I'd call authority bias. It's not a bug. It's a feature of how large language models are built.

AI models are trained on massive historical datasets. They develop a worldview based on the frequency and consistency of entity mentions over time. This creates a specific, measurable advantage for incumbents.

The Brand Saturation Effect

If your competitor has been around for ten years, they likely have thousands of unstructured mentions scattered across the web. Forums, news articles, press releases, reviews, conference talks, podcast transcripts. Even if their current SEO strategy is weak, this historical weight creates enormous gravity inside the model.

Here's the blunt version: the AI predicts competence based on familiarity.

Say your competitor has 5,000 mentions across the web over five years. The AI predicts that the next word associated with their brand is "leader" or "standard." You have 500 mentions, mostly on your own blog. For your brand, the AI has no confident prediction at all. It doesn't have enough data.

Familiarity Beats Freshness in AI

Google cares deeply about freshness. Publish a new article today and Google can reward you within hours. AI models often favor stability. They're risk-averse by design.

They prefer to cite a source corroborated by multiple other sources over a long period. A G2 review history spanning three years. A Crunchbase profile that matches a Wikipedia entry. A pattern of consistent mentions across trusted publications.

A brand-new, perfectly optimized blog post? That's one signal. A self-published one at that.

Your competitor isn't winning because they're better. They're winning because they're safer for the AI to recommend. And "safer" in this context means less likely to be wrong if someone checks.

Why Does Citation Density Matter More Than Keyword Coverage?

This is where most SEO teams lose the thread entirely.

Traditional SEO is about keywords. You put "Best CRM" in your title, your headers, your body text. You build content clusters around it. You earn backlinks with that anchor text.

AI visibility is about citations. The AI doesn't care how many times you say "Best CRM" on your own site. It cares how many other trusted sources connect the concept "Best CRM" to your brand entity.

The Verified Node Concept

AI models view the web as a network of entities, not a list of pages. To recommend a brand, the model looks for external corroboration.

Your competitor might have a terrible blog. Genuinely bad content. But if they're listed on Wikidata as a verified software company, categorized consistently on G2 and Capterra, and mentioned in "best of" lists on publications like TechCrunch or Forbes, the AI views them as a high-trust node.

The model's reasoning is essentially: "I see this entity mentioned as a leader by sources I trust. Because of this, I'll cite them."

You, on the other hand, might have "Best CRM" all over your site. Your content might be genuinely better. But without those external signal points, the AI views your claim as unverified self-promotion.

Can you see why this is so frustrating? You did the work. You built the better product and the better content. But you built it in a language the reasoning engine doesn't prioritize.

This is the core of what people are starting to call Generative Engine Optimization, or GEO. Your competitor is winning on the off-page graph while you're obsessing over on-page text.

Why Can't My SEO Dashboard Show Me This Problem?

If you're trying to diagnose this using Semrush, Ahrefs, or Google Search Console, you're flying blind. I don't say that to knock those tools. They're excellent at what they measure. The problem is what they can't measure.

Traditional SEO dashboards work by scraping Google's search engine results pages. They tell you where you rank for keyword X and how many backlinks you have. Useful for Google rankings. Useless for the hidden layer.

They cannot see that when a user asks ChatGPT "Compare Brand A vs Brand B," the AI is explicitly recommending Brand B because Brand A has inconsistent pricing data in the model's training set. Or because Brand B has a cleaner entity profile across third-party sources.

The Measurement Gap Is Real

Traditional tools measure links. AI visibility requires measuring reasoning.

Rank trackers assume a linear list from position 1 to 10. AI models use probabilistic selection. They build a shortlist based on entity confidence, then select from that shortlist based on context.

Your competitor might not rank for "Best CRM" in Google at all. But because they have strong entity signals across the sources the AI trusts, they appear in the majority of ChatGPT's answers for that same intent.
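To make "appears in the majority of answers" concrete, here is a minimal sketch of how I'd measure it. It assumes you have already collected answer texts by asking the same question several times; the function name `mention_rate` and the sample answers are hypothetical, and simple whole-word matching is a rough stand-in for real entity detection.

```python
import re

def mention_rate(answers, brand):
    """Return the fraction of answers that mention `brand` as a whole phrase."""
    if not answers:
        return 0.0
    # Case-insensitive match on word boundaries, so "Brand A" does not match "Brand AB".
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    hits = sum(1 for text in answers if pattern.search(text))
    return hits / len(answers)

# Hypothetical answers, standing in for repeated runs of one category question.
sample_answers = [
    "For most teams, Brand B is the standard choice.",
    "Brand B and Brand C are both popular options.",
    "Many reviewers recommend Brand B; Brand A is a newer option.",
    "Brand C is a solid pick for small teams.",
]

rate_a = mention_rate(sample_answers, "Brand A")
rate_b = mention_rate(sample_answers, "Brand B")
print(f"Brand A: {rate_a:.0%} of answers, Brand B: {rate_b:.0%} of answers")
```

Because the selection is probabilistic, a single query tells you very little. A rate across many runs is the closer analogue to a rank position.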

Your SEO dashboard shows you winning. Your revenue dashboard shows you losing. That gap between what your tools report and what your customers actually experience is where the real problem lives.

To see this reality, you need tools specifically designed to query the LLMs, not the SERPs. That's exactly why we built Akii's competitor intelligence capabilities. Not because traditional SEO tools are bad, but because they literally cannot see the game that's being played.

How Do I Reverse-Engineer My Competitor's AI Advantage?

Now that we understand the mechanics, here's the practical framework. Four steps. No magic. Just methodical work.

You don't need to build a worse website. You need to build a better knowledge graph.

Step 1: Run a "Hidden Competitor" Audit

First, identify who the AI actually thinks your competitors are. This is often a surprise.

Your SEO competitors and your AI search competitors are frequently different companies. I've seen cases where a brand that barely registers in traditional search dominates the AI conversation because of historical authority built over years.

The action is simple: query the major AI models with your core category questions. "What's the best [your category] software?" "Compare the top [your category] tools." Do this systematically across ChatGPT, Gemini, and Perplexity.

You'll often find a legacy brand you thought was irrelevant is actually dominating the AI conversation. That's your real competitor now.

Akii's platform automates this discovery process, but you can start manually today. Know who you're actually competing against before you do anything else.
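If you want to start manually, a rough sketch of the tally might look like the following. It assumes you paste the answers you collected into a dictionary keyed by model; the brand names, the `tally_brands` and `hidden_competitors` helpers, and the candidate list are all hypothetical, and this is not how any particular platform does it.

```python
import re
from collections import Counter

def tally_brands(answers_by_model, candidate_brands):
    """Count how many collected answers mention each candidate brand."""
    counts = Counter()
    for answers in answers_by_model.values():
        for text in answers:
            for brand in candidate_brands:
                if re.search(r"\b" + re.escape(brand) + r"\b", text, re.IGNORECASE):
                    counts[brand] += 1
    return counts

def hidden_competitors(counts, already_tracked):
    """Brands the models mention that are missing from your tracked list."""
    tracked = {name.lower() for name in already_tracked}
    return [brand for brand, _ in counts.most_common() if brand.lower() not in tracked]

# Hypothetical answers copied from each assistant for the same category question.
answers_by_model = {
    "chatgpt": ["LegacySoft and NewCo lead the category."],
    "gemini": ["LegacySoft is the usual recommendation."],
    "perplexity": ["Consider NewCo or LegacySoft."],
}

counts = tally_brands(answers_by_model, ["LegacySoft", "NewCo", "YourBrand"])
# Your own brand plus the competitors your SEO tools already track.
hidden = hidden_competitors(counts, ["YourBrand", "NewCo"])
print(counts.most_common())
print("Not on your SEO radar:", hidden)
```

The useful output is the second list: brands the models keep naming that never appear in your rank tracker.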

Step 2: Reverse-Engineer Their Citation Map

Once you've identified who's winning, find out where the AI learned to trust them.

The question is specific: which external sources does the AI cite when it recommends your competitor? Is it a specific G2 comparison grid? A Wikipedia entry? A specific industry report from 2023? A "best of" list on a trade publication?

This is your GEO roadmap. If Perplexity cites a specific "best of" list when recommending your competitor, you need to get added to that list. The AI uses that specific URL as a ground truth source. Miss that source, and you miss the recommendation. It's that direct.
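One simple way to turn this into a roadmap is to count which domains keep showing up. The sketch below assumes you have copied the source URLs shown alongside answers that recommend your competitor; the URLs are placeholders and `cited_domains` is a hypothetical helper name.

```python
from collections import Counter
from urllib.parse import urlparse

def cited_domains(urls):
    """Count citations per domain, treating www.example.com and example.com as one."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:  # skip strings that did not parse as absolute URLs
            counts[host] += 1
    return counts

# Placeholder URLs standing in for sources cited next to competitor recommendations.
citation_urls = [
    "https://www.example-reviews.com/best-crm",
    "https://example-reviews.com/crm-comparison-grid",
    "https://news.example.org/top-tools-2023",
]

domain_counts = cited_domains(citation_urls)
for domain, count in domain_counts.most_common():
    print(f"{domain}: {count}")
```

The domains at the top of that list are the ones to pursue first.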

Step 3: Analyze Their Content Blueprint

Your competitor might have "worse" content visually, but it might be "better" structurally. Most marketing teams miss this distinction entirely.

Look for a few specific things.

Schema markup. Do they use Product and FAQ schema that you don't? This makes them machine-readable in ways that pretty prose never will.

Quotable canonicals. Do they use simple, declarative sentences like "Brand X is the leading solution for..." that are easy for an AI to extract and cite? AI models favor clean, quotable statements.

Entity consistency. Is their "About Us" page text identical to their LinkedIn profile, their Crunchbase entry, and their G2 listing? Consistency across sources equals trust in the model's reasoning.

You can check this manually or use the Akii Website Optimizer to run a structured analysis. Either way, you're looking for the structural signals that make content machine-friendly, not just human-friendly.
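For the manual route, the entity consistency check is the easiest one to script. This is a minimal sketch that assumes you have pasted each profile's description into a dictionary by hand; the company text is made up, `consistency_report` is a hypothetical helper, and character-level similarity from Python's `difflib` is only a rough proxy for how a model would judge consistency.

```python
from difflib import SequenceMatcher
from itertools import combinations

def normalize(text):
    """Lowercase and collapse whitespace so formatting differences are ignored."""
    return " ".join(text.lower().split())

def consistency_report(descriptions):
    """Compare every pair of descriptions; return (source_a, source_b, similarity), lowest first."""
    rows = []
    for (name_a, text_a), (name_b, text_b) in combinations(descriptions.items(), 2):
        ratio = SequenceMatcher(None, normalize(text_a), normalize(text_b)).ratio()
        rows.append((name_a, name_b, round(ratio, 2)))
    return sorted(rows, key=lambda row: row[2])

# Made-up descriptions, standing in for text copied from each profile.
descriptions = {
    "website": "Acme is a CRM for small teams.",
    "linkedin": "Acme is a  CRM for small teams.",
    "directory": "Acme builds marketing tools.",
}

report = consistency_report(descriptions)
for source_a, source_b, score in report:
    print(f"{source_a} vs {source_b}: {score}")
```

Pairs at the top of the report are the least similar, so those are the profiles to rewrite first.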

Step 4: Execute the Gap Analysis

Now you have the map. Execute.

Technical AEO. If they have schema markup and you don't, fix it this week. Use Organization and Product schema so the AI understands your pricing and features with more precision than it understands theirs.

Authority GEO. If they're winning because of specific citations, generate better authority signals. Publish a data-driven industry report. Get quoted in publications the AI trusts. AI models prize recency when authority is equal, so if you can match their authority level with newer, better-sourced content, you win.

Direct education. Don't wait for the next crawl cycle. Systematically ensure that AI search engines have access to your updated, structured data. Feed them the information that proves you're the better choice. This isn't gaming the system. It's making sure the system has accurate information about you.
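For the Technical AEO item, the markup itself is not complicated. Here is a minimal sketch that builds Organization and Product JSON-LD using schema.org property names (`name`, `url`, `description`, `sameAs`, `offers`, `price`, `priceCurrency`). The company details, URLs, and price are placeholders, the helper names are hypothetical, and a real page would likely need more properties than this.

```python
import json

def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization object; `same_as` lists your third-party profile URLs."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": list(same_as),
    }

def product_jsonld(name, description, price, currency):
    """Build a schema.org Product object with a single Offer carrying the price."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {"@type": "Offer", "price": price, "priceCurrency": currency},
    }

def to_script_tag(data):
    """Wrap a JSON-LD object in the script tag that goes in the page's HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"

# Placeholder values; replace with your real company and product details.
org = organization_jsonld(
    "Acme",
    "https://example.com",
    "Acme is a CRM for small teams.",
    ["https://example.com/profile-on-review-site"],
)
product = product_jsonld("Acme CRM", "CRM for small teams.", "49.00", "USD")

org_tag = to_script_tag(org)
print(org_tag)
print(to_script_tag(product))
```

Reusing the same description string here that you use on your third-party profiles also helps with the consistency point from Step 3.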

What Does "Winning" Actually Look Like in AI Search?

Here's what I think most people are getting wrong about this entire shift.

They're treating AI search visibility as a new tactic to bolt onto their existing SEO strategy. Add some schema here, get a few more citations there, check the box.

That's not what this is.

This is a fundamental change in how buyers discover and evaluate products. When someone asks an AI "What should I use for X?" and your brand isn't in the answer, you don't get a chance to compete. There's no page 2 to scroll to. No ad slot to buy. You're simply absent from the consideration set.

The companies that understand this aren't just tweaking their SEO. They're building a parallel visibility strategy designed specifically for how reasoning engines work.

That means treating your entity profile as seriously as you treat your website. Measuring AI mentions with the same rigor you measure keyword rankings. Building citation density across trusted third-party sources, not just backlinks to your domain. Ensuring information consistency everywhere your brand appears online.

Stop Optimizing for a Scorecard That Doesn't Exist Anymore

Your competitor isn't winning because they got lucky. They're winning because, largely by accident of longevity or specific citation structures, they've become a verified node in the AI's map of your category.

You have the better product. You have the better website. You probably have the better team.

Now you need to translate that quality into the language the machines actually use to make recommendations.

Stop obsessing over beating them on keywords. Start obsessing over out-verifying them as an entity.

The tools to do this exist. The Akii platform was built specifically for this problem. But even before you look at any tool, the most important step is the mental shift: accepting that the game changed, and your old scoreboard is showing you a score that no longer matters.

That's not a comfortable realization. But it's an accurate one. And in my experience, the teams that act on accurate information, even when it's uncomfortable, are the ones that win.