The New Way Brands Lose Sales (Without Knowing It)

Being invisible on Google used to be the worst thing that could happen to your brand online. Stuck on page two. Nobody clicks.

That's no longer the worst outcome.

The worst thing now is being misrepresented by an AI that your customers trust more than your own website. When someone asks ChatGPT or Gemini for a recommendation, the AI doesn't hand them a list of links to sort through. It gives them an answer. Synthesized, confident, and often wrong.

If that answer calls your premium product a "budget alternative," or hallucinates a price you haven't charged in two years, or skips the one feature that separates you from every competitor, the sale is gone before the customer ever finds your site.

I've watched this pattern accelerate over the past 18 months. Brands spending millions on traditional marketing while an AI agent quietly misdescribes them to the exact audience they're trying to reach. Most have no idea it's happening.

This is the hidden tax of the AI era: revenue lost to machine error. Here's how it works, why it happens, and what you can actually do about it.

Why Are AI Hallucinations Different From Bad Search Results?

In the old world of ten blue links, a wrong result was survivable. The user would click through to your site and read the facts for themselves. You still had a shot.

Answer engines don't work that way. The AI acts as gatekeeper. It reads, synthesizes, and delivers a verdict. Most users never click through at all.

So when an AI model hallucinates something about your brand, the impact on your funnel is immediate and severe.

Think about what happens when Gemini tells a potential buyer your software lacks an integration it actually has. You're not just ranked lower. You're filtered out of the consideration set entirely, and the buyer never evaluates you.

It gets worse. AI models penalize inconsistency. If your data contradicts itself across the web, models lose confidence in your entity. That leads to cautionary language in their answers. Things like "Some users report mixed experiences." That's a conversion killer, and you didn't do anything wrong except fail to control your own data.

What Are the Most Common Ways AI Gets Brands Wrong?

This isn't random. Based on data from the Akii AI Visibility Index, misrepresentation falls into five specific categories. I've seen all five hit real companies with real revenue consequences.

Wrong Positioning

The model categorizes your specialized enterprise solution as a generic SMB tool. A CTO asking "What's the best enterprise data platform?" never sees your name because the AI thinks you serve small businesses. You're disqualified before the conversation starts.

Wrong Features

The model fails to list your key differentiators because they aren't tagged in your schema. Users genuinely believe you lack functionality you've spent years building. This one is particularly painful because it's entirely preventable.

Wrong Comparisons

The model recommends your competitor as the "best solution" while framing your brand as a "risky alternative." Why does this happen? Usually because your competitor has more authoritative citations and your brand doesn't have enough structured data to compete on equal footing.

Wrong Pricing

The model hallucinates an outdated price point, or fails to return pricing at all because it can't parse your pricing page. Either way, the prospect forms an expectation that doesn't match reality. You lose them at the moment of decision.

Outdated Facts

The model relies on old data from third-party directories rather than your current website. It describes products you retired years ago. It references partnerships that ended. It presents a version of your company that no longer exists.

How many of these are affecting your brand right now? Most companies I talk to discover at least two when they actually check.

Why Does This Keep Happening? Is It Random?

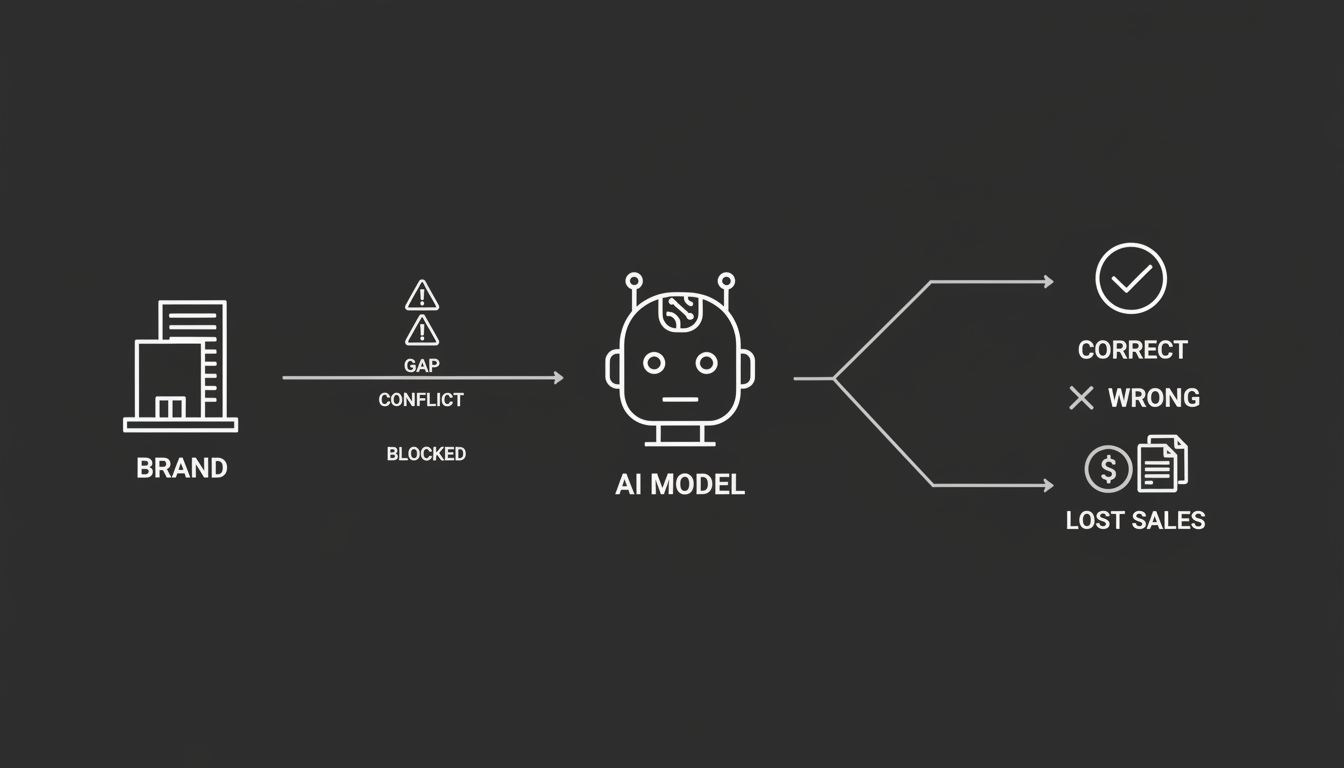

Not at all. AI models aren't malicious. They're statistical systems working with whatever data they can find. When they misrepresent a brand, it's almost always because of weak or conflicting signals in the data that defines that brand as an entity.

Three root causes explain nearly every case I've seen.

Data gaps. AI models rely on structured data to "read" your site. Without Product or Offer schema, your specific attributes are invisible to the crawler. The model can see your marketing copy but can't parse your actual capabilities as facts. So it guesses. Guessing is where hallucinations start.

Conflicting sources. If your brand description on LinkedIn says one thing, your Crunchbase profile says something slightly different, and your website says a third thing, the model detects a conflict. To avoid being wrong, it either hallucinates a generic description that splits the difference or excludes you entirely. Neither outcome helps you.

Technical blocking. This is the one that surprises most people. AI agents read websites differently than Google's traditional crawler. If your site isn't configured for AI crawlers (a robots.txt rule that blocks them is a common culprit), the agents are forced to guess based on third-party data. You've locked the front door and forced them to get their information from your neighbors.
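One quick way to test for the locked front door is to read your own robots.txt the way a crawler would. Here's a minimal sketch using Python's standard urllib.robotparser. The crawler names in AI_CRAWLERS are user-agent tokens the vendors have published, but treat the list as an assumption and check each vendor's current documentation. The sample robots.txt and the example.com URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Assumption: user-agent tokens published by AI vendors at the time of writing.
# Vendors add and rename crawlers, so verify this list before relying on it.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt, url, agents=AI_CRAWLERS):
    """Return the agents that this robots.txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt: blocks GPTBot everywhere, everyone else from /admin/.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

if __name__ == "__main__":
    print(blocked_ai_crawlers(SAMPLE_ROBOTS, "https://example.com/pricing"))
```

In practice you'd paste in your real robots.txt and test the pages that matter most: pricing, product, and integrations. This only covers robots.txt rules. It won't tell you whether a firewall or a JavaScript-only page is getting in the way.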

None of these problems are about your product quality or brand reputation. They're about data hygiene and technical readiness. Which means they're fixable.

How Do You Know If Your Brand Is Being Misrepresented?

Most brands have no idea this is happening. Traditional analytics tools can't track AI conversations. Your Google Analytics dashboard won't show you that ChatGPT told 10,000 people your product doesn't support SSO when it does.

There are two approaches worth using together.

Manual Checks

Start by prompting ChatGPT, Gemini, and Claude with questions your buyers would actually ask:

- "What are the pros and cons of [Your Brand]?"

- "How much does [Your Brand] cost?"

- "How does [Your Brand] compare to [Competitor]?"

- "What integrations does [Your Brand] support?"

Write down every answer. Compare them to reality. You'll likely find at least one significant error across the major models.

Manual checks are useful, but limited. They capture a single moment in time. Models update constantly. What's accurate today might be wrong next week.
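If you want the manual check to be repeatable, it helps to generate the same question set every time and record the answers in one place. The sketch below builds a blank checklist from the four questions above, with one row per model and prompt. It doesn't call any AI service; you still paste the prompts in by hand. The brand and competitor names are placeholders, and the function and column names are my own.

```python
import csv
import sys
from itertools import product

# The four buyer questions from the list above, as fill-in templates.
TEMPLATES = [
    "What are the pros and cons of {brand}?",
    "How much does {brand} cost?",
    "How does {brand} compare to {competitor}?",
    "What integrations does {brand} support?",
]
MODELS = ["ChatGPT", "Gemini", "Claude"]


def build_checklist(brand, competitors, models=MODELS):
    """Return one row per (model, prompt) with blank fields to fill in by hand."""
    prompts = []
    for template in TEMPLATES:
        if "{competitor}" in template:
            # One comparison prompt per competitor.
            prompts += [template.format(brand=brand, competitor=c) for c in competitors]
        else:
            prompts.append(template.format(brand=brand))
    return [
        {"model": model, "prompt": prompt, "answer": "", "accurate": ""}
        for model, prompt in product(models, prompts)
    ]


if __name__ == "__main__":
    rows = build_checklist("Acme", ["Globex"])  # placeholder names
    writer = csv.DictWriter(sys.stdout, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
```

Save the output as a dated spreadsheet each time you run the check. Comparing this month's sheet to last month's is the simplest way to see whether a model's description of you is drifting.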

Automated Monitoring

To actually protect revenue, you need continuous visibility. The Akii AI Visibility Monitor tracks your brand across seven dimensions around the clock, so you can catch hallucinations early, before they become permanent fixtures in the model's training data.

That last part matters more than most people realize. AI models learn from their own outputs and from web content that references those outputs. A hallucination that goes uncorrected can become self-reinforcing. The longer it persists, the harder it becomes to fix.

How Do You Actually Fix What the AI Gets Wrong?

You can't email OpenAI to correct a hallucination. There's no "report incorrect information" button that works the way it does on Google. You have to engineer the correction by feeding the models better data.

Three approaches work consistently. They're not complicated, but they require discipline.

Fix Your Entities: Create a Single Source of Truth

This is the foundation. Build a Master Entity Profile: one unified description, one taxonomy, one boilerplate. Then replicate this exact text across your website, LinkedIn, Crunchbase, and Wikidata.

Exact. Not "similar." Not "aligned in spirit." The same words, the same claims, the same structure.

Consistency is how AI models determine ground truth. When every source agrees, the model treats your description as fact. When sources conflict, the model treats everything as uncertain. Consistency forces the model to accept your definition.

This sounds simple. In practice, most companies have five or six slightly different descriptions of themselves scattered across the internet. Cleaning that up is the single highest-impact thing you can do.
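Finding those five or six variants is easier with a script than by eye. Here's a minimal sketch, assuming you've already copied each platform's description into a dictionary by hand. It flags every profile that differs from your canonical text and gives a rough similarity score using Python's standard difflib. The sample descriptions are invented, and ignoring case and extra whitespace is my assumption about what should still count as "exact."

```python
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase and collapse whitespace so trivial formatting doesn't count as drift."""
    return " ".join(text.lower().split())


def find_drift(canonical, profiles):
    """Return {source: similarity} for every profile that differs from canonical."""
    target = normalize(canonical)
    drift = {}
    for source, text in profiles.items():
        candidate = normalize(text)
        if candidate != target:
            matcher = SequenceMatcher(None, target, candidate, autojunk=False)
            drift[source] = round(matcher.ratio(), 2)
    return drift


if __name__ == "__main__":
    # Invented example descriptions for illustration only.
    canonical = "Acme is an enterprise data platform for regulated industries."
    profiles = {
        "website": "Acme is an enterprise data platform for regulated industries.",
        "linkedin": "Acme is a data tool for small businesses.",
        "crunchbase": "Acme  is an Enterprise data platform for regulated industries.",
    }
    for source, score in find_drift(canonical, profiles).items():
        print(f"{source}: similarity {score}")
```

A low score tells you which profile to fix first. Anything that shows up in the output at all is a description that needs to be replaced with the canonical one.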

Set Up Quotable Canonicals

AI models prefer concise, extractable facts. They struggle with long narrative paragraphs full of marketing language. They do well with clear, declarative statements that answer specific questions.

Rewrite your "About" and "Product" sections using Answer Engine Optimization tactics. Use question-based headings like "What is [Product]?" followed by clear two-sentence summaries. You're writing for a machine that needs to summarize you in 50 words to a stranger. If your content doesn't make that easy, the machine will improvise. You won't like the improvisation.
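Question-and-answer content can also be published in machine-readable form. The sketch below turns a list of question and answer pairs into Schema.org FAQPage markup and refuses any answer longer than 50 words, which is my reading of the summary length mentioned above rather than an official limit. The function name and the sample question are hypothetical.

```python
import json


def faq_jsonld(pairs, max_words=50):
    """Build Schema.org FAQPage markup from (question, answer) pairs."""
    entities = []
    for question, answer in pairs:
        # Assumption: keep answers short enough to be quoted whole.
        if len(answer.split()) > max_words:
            raise ValueError(f"Answer to {question!r} exceeds {max_words} words")
        entities.append({
            "@type": "Question",
            "name": question,
            "acceptedAnswer": {"@type": "Answer", "text": answer},
        })
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": entities,
    }


if __name__ == "__main__":
    markup = faq_jsonld([
        ("What is Acme?",
         "Acme is an enterprise data platform. It serves regulated industries."),
    ])
    print(json.dumps(markup, indent=2))
```

The printed JSON goes inside a script tag of type application/ld+json on the same page as the visible question and answer. The visible text and the markup should say the same thing, word for word.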

Deploy Technical Fixes

Make your data machine-readable. Use tools like the Akii Website Optimizer to generate specific Schema.org markup for your products, pricing, and FAQs.

Explicitly tagging attributes like "free trial," "enterprise security," or "SOC 2 compliant" helps the model parse them as facts rather than marketing copy. There's a real difference between a model reading "We offer world-class security" (which it will likely ignore) and reading a structured property along the lines of securityCertification: SOC 2 Type II (which it can treat as a verifiable fact). The property name here is illustrative; check Schema.org for the exact vocabulary before you publish.
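To make that concrete, here's a minimal sketch that builds Product markup with a nested Offer and a list of tagged attributes. It uses Schema.org's generic additionalProperty and PropertyValue types to carry the attributes, which is a substitution on my part for the securityCertification label in the example above. The product name, price, and attribute values are invented.

```python
import json


def product_jsonld(name, description, price, currency, attributes):
    """Build Schema.org Product markup with one Offer and tagged attributes."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        # Each attribute becomes an explicit name/value pair instead of prose.
        "additionalProperty": [
            {"@type": "PropertyValue", "name": key, "value": value}
            for key, value in attributes.items()
        ],
    }


if __name__ == "__main__":
    markup = product_jsonld(
        name="Acme Platform",  # invented example values throughout
        description="Enterprise data platform for regulated industries.",
        price=49,
        currency="USD",
        attributes={
            "Security certification": "SOC 2 Type II",
            "Free trial": "14 days",
        },
    )
    print(json.dumps(markup, indent=2))
```

Generating the markup from one function means your pricing page, product page, and any landing pages can share a single source for these facts, so a price change only has to happen in one place.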

Most brands fall behind on the technical layer. Not because it's hard, but because nobody on their team is thinking about AI readability as a distinct requirement from SEO or web performance.

What Happens If You Don't Fix This?

The stakes here are real, and the window for easy correction is closing.

Every day an AI model misrepresents your brand, that misrepresentation gets reinforced. Other content references the AI's answer. The AI references that content. The error compounds.

Meanwhile, competitors who are managing their AI visibility get recommended more often, described more accurately, and win deals you never knew you lost.

The brands that figure this out in the next 12 months will have a structural advantage that's genuinely hard to reverse. The ones that wait will spend years trying to correct entrenched misrepresentations.

I've been through enough technology shifts to recognize this pattern. Early movers don't just get a head start. They get a compounding advantage that late movers can't easily close.

Where Should You Start?

If you've read this far, here's what I'd do this week.

Day one. Run manual checks across ChatGPT, Gemini, and Claude. Ask the questions your buyers ask most. Document every error.

Day two. Audit your brand descriptions across every major platform. Find the inconsistencies. Write one canonical description and start updating everything to match it.

Day three. Check your technical readiness. Do you have Product schema? Offer schema? FAQPage schema? If not, that's your next project.
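The day three check can be scripted too. This is a rough sketch, assuming your structured data is published as JSON-LD in script tags (markup written as Microdata or RDFa won't be detected). It scans a page's HTML and reports which of the required types are missing. I've used FAQPage as the type name for FAQ schema, and the sample HTML is made up.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the raw text of every application/ld+json script block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.blocks.append("".join(self._buffer))


def collect_types(node, found):
    """Walk parsed JSON-LD and add every @type value to the found set."""
    if isinstance(node, dict):
        declared = node.get("@type")
        if isinstance(declared, str):
            found.add(declared)
        elif isinstance(declared, list):
            found.update(t for t in declared if isinstance(t, str))
        for value in node.values():
            collect_types(value, found)
    elif isinstance(node, list):
        for item in node:
            collect_types(item, found)


def missing_schema_types(html, required=("Product", "Offer", "FAQPage")):
    """Return the required Schema.org types not declared in the page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    found = set()
    for block in collector.blocks:
        try:
            collect_types(json.loads(block), found)
        except json.JSONDecodeError:
            continue  # Skip malformed blocks rather than stop the audit.
    return [t for t in required if t not in found]


SAMPLE_HTML = """
<html><head><script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Acme",
 "offers": {"@type": "Offer", "price": "49.00", "priceCurrency": "USD"}}
</script></head><body>Acme</body></html>
"""

if __name__ == "__main__":
    print(missing_schema_types(SAMPLE_HTML))
```

Run it against the saved HTML of your homepage, pricing page, and top product pages. An empty list means the types are declared. It doesn't mean the values inside them are correct, so still read the markup yourself.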

Beyond that. Set up continuous monitoring so you're not doing this manually every week. Akii's platform was built specifically for this problem because I watched too many good companies lose visibility to errors they didn't know existed.

The shift from search engines to answer engines is the biggest change in how buyers find and evaluate products since Google itself. The brands that adapt will win. The ones that ignore it will wonder where their pipeline went.

This isn't about chasing a trend. It's about controlling how your company gets described to the people who matter most. That's always been the job. The tools just changed.