The Dashboard Comfort Zone Is Killing Your AI Strategy

For fifteen years, we've all lived inside dashboards. Looker, Tableau, Data Studio. We project them onto boardroom screens and feel reassured when a line trends up and to the right.

I get it. I've built plenty of them.

But in 2026, as the world shifts from search engines to answer engines, that dashboard has become a liability. Not a minor inconvenience. A structural blind spot.

When you look at a static report that says your "AI Visibility Score is 72," you're looking at a mirage. By the time you read that number, the reality has probably already moved. AI models like ChatGPT, Gemini, and Perplexity aren't static indexes. They're probabilistic reasoning engines whose answers shift with every model update, every change in retrieved sources, and every sampling run.

You can't "report" on a conversation happening millions of times a day in real time.

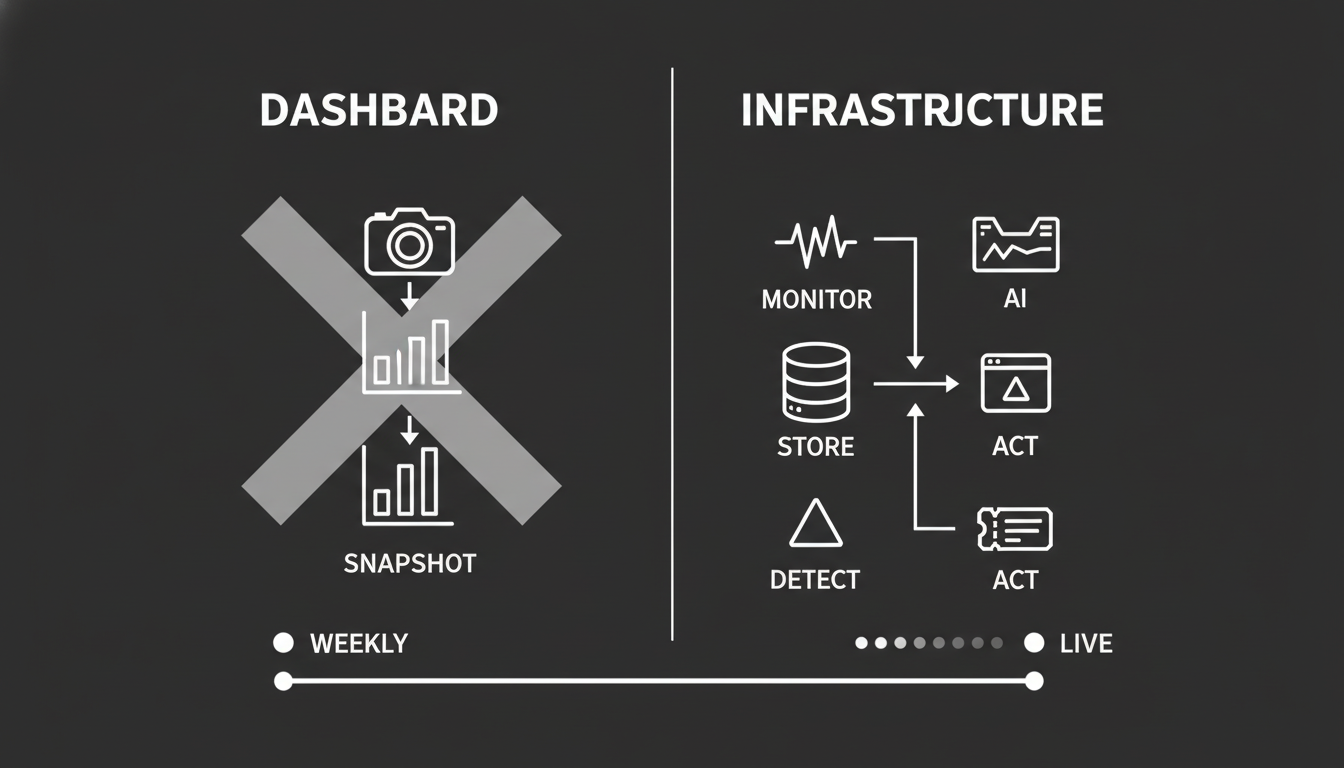

To win in the age of AI search, you have to stop building dashboards and start building intelligence infrastructure.

What Do I Actually Mean by "AI Visibility Infrastructure"?

Let me be specific, because this isn't a branding exercise.

AI visibility infrastructure is a continuous monitoring system that tracks how AI platforms represent your brand in generated answers. It captures full AI responses, stores them historically, detects narrative shifts, and alerts your team when brand perception changes.

That's fundamentally different from a dashboard that shows you a number once a week.

A dashboard tells you where you ranked. Infrastructure tells you what the AI is saying about you right now, how that's changed since last week, and whether the story is drifting in a direction that will cost you revenue.

This is how companies catch hallucinations, track competitor mentions, and correct misinformation in real time. Not after the quarterly review. Not after the board meeting. Now.

Why Do Traditional Dashboards Fail in AI Search?

The fundamental problem with a dashboard is that it's a snapshot. It takes a picture of a moving object and presents it as a stationary fact.

In traditional SEO, this worked. Google's index was relatively stable. If you ranked number one for "Best CRM" on Monday, you probably ranked number one on Friday. Volatility was low enough that a weekly report was still practical.

In AI search, stability is a myth.

Volatility is baked in. AI responses are non-deterministic. The same prompt can yield different answers based on temperature settings, minor phrasing changes, or model updates that happen overnight. There is no fixed shelf position to measure.

Context disappears. A dashboard number like "Rank 3" strips away everything that matters. Being listed third in a "Best of" roundup is valuable. Being listed third in a "Cautionary Tales" piece is a PR crisis. A single number can't tell the difference.

If you rely on a dashboard, you're managing your brand through a rearview mirror. You're seeing what the AI said, not what it's saying.
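One way to work with the non-determinism described above is to stop treating visibility as a single observation and measure it as a rate over repeated samples of the same prompt. Here is a minimal sketch of that idea. The `ask` callable is a stand-in for whatever client you use to query a model, and the brand names and stubbed answers are made up for illustration.

```python
from itertools import cycle
from typing import Callable


def mention_rate(ask: Callable[[str], str], prompt: str, brand: str, runs: int = 20) -> float:
    """Return the fraction of sampled answers that mention `brand` (case-insensitive)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    hits = sum(brand.lower() in ask(prompt).lower() for _ in range(runs))
    return hits / runs


# Stub model for illustration only: it rotates through canned answers
# to imitate a model that answers the same prompt differently each time.
_canned = cycle([
    "Acme and Globex are solid picks.",
    "Try Globex.",
    "Acme leads here.",
    "Globex or Initech.",
])
rate = mention_rate(lambda prompt: next(_canned), "What is the best CRM?", "Acme", runs=4)
print(rate)  # 0.5
```

A rate like this is still a snapshot, but it is at least an honest one: it says "mentioned in half of the runs" instead of pretending there is one fixed answer.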

Infrastructure is a living system. It doesn't just record data. It monitors the stream, detects anomalies, and triggers alerts immediately. It acknowledges that the "truth" of your brand is fluid and requires constant defense.

So why are most teams still staring at charts? Because the old model is comfortable. And comfort is expensive.

What Changed Structurally Between Search Rankings and AI Answers?

To understand why infrastructure matters, you have to look at the mechanics of the systems we're trying to measure.

The old world was an index. Traditional search engines are giant libraries of links. The metric was rank. Where is my book on the shelf? The method was reporting. Check the shelf once a week.

The new world is a reasoning engine. AI models are analysts that read the library and write a new report for every single user. The metric is inclusion and narrative. Did the analyst mention me, and how did they describe me? The method is intelligence. Listen to the analyst's conversations continuously.

This isn't a subtle shift. It's a category change.

Because AI models synthesize answers, they introduce a variable that never existed in traditional search: narrative drift.

An AI model might correctly identify your pricing today. Next week, after ingesting a confused Reddit thread or an outdated third-party review, it might begin to "hallucinate" that your product is discontinued.

A standard SEO dashboard won't catch this. It tracks keywords, not facts. You need infrastructure that can parse natural language, understand entities, and flag when the story about your brand changes. Not just the rank.

What Does AI Visibility Infrastructure Actually Look Like?

Moving from "dashboard" to "infrastructure" isn't semantics. It requires specific technical capabilities. Here are the four pillars.

Pillar 1: Continuous Monitoring

Infrastructure doesn't sleep. While a dashboard updates on a schedule, infrastructure monitors the pulse continuously.

You need to test your brand across major models, including ChatGPT, Gemini, Claude, and Perplexity, at least weekly and ideally daily. Not monthly. AI vendors push updates without announcing them. A system prompt change in Claude can wipe out your visibility overnight.

Continuous monitoring captures the exact moment the shift happens, so you can correlate it with a specific event. A model update. A competitor's press release. A viral Reddit thread.

If you're only checking once a month, you're reconstructing the crime scene after the evidence is gone.
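To make the monitoring loop concrete, here is a rough sketch of a single probe cycle that a scheduler (cron, a task queue, whatever you already run) could trigger daily. Everything named here is an assumption for illustration: `Snapshot`, `run_probe_cycle`, and the per-model `ask` callables are hypothetical, and you would wire in your own API clients.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class Snapshot:
    model: str
    prompt: str
    answer: str
    captured_at: datetime


def run_probe_cycle(
    clients: Dict[str, Callable[[str], str]],
    prompts: List[str],
    now: Optional[datetime] = None,
) -> List[Snapshot]:
    """Ask every prompt of every model once and timestamp the results."""
    captured_at = now or datetime.now(timezone.utc)
    snapshots = []
    for model, ask in clients.items():
        for prompt in prompts:
            try:
                answer = ask(prompt)
            except Exception as exc:
                # Record the failure instead of skipping it, so gaps in the
                # history are visible rather than silent.
                answer = f"<error: {exc}>"
            snapshots.append(Snapshot(model, prompt, answer, captured_at))
    return snapshots
```

Sharing one timestamp across the whole cycle makes it easier to line up answers from different models against the same external event later.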

Pillar 2: Historical AI Answer Storage

A dashboard overwrites yesterday's data with today's. Infrastructure remembers everything.

You must store the full text of every AI answer generated about your brand, timestamped and versioned. When your visibility drops, you need to replay the tape. Compare the answer from November 1st with the answer from November 15th. See exactly which sentence changed. Did the AI stop mentioning your enterprise security features? Did it start citing a competitor instead?

You can't diagnose the problem without the history.

This is what Akii's AI Search Tracker is built to do. Not just show you a score, but give you the full record so you can trace exactly what shifted and when.
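Independent of any particular product, the "replay the tape" idea can be sketched in a few lines: store every answer with its timestamp, then diff two dates sentence by sentence. This is an illustrative sketch using Python's standard `sqlite3` and `difflib` modules, not a description of how any vendor implements it. The table layout, function names, and sample dates are assumptions.

```python
import difflib
import re
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS answers ("
        "model TEXT NOT NULL, prompt TEXT NOT NULL, "
        "captured_at TEXT NOT NULL, answer TEXT NOT NULL)"
    )
    return conn


def save_answer(conn, model, prompt, captured_at, answer):
    """Append one answer; rows are never overwritten. `captured_at` is an ISO 8601 string."""
    conn.execute(
        "INSERT INTO answers VALUES (?, ?, ?, ?)",
        (model, prompt, captured_at, answer),
    )
    conn.commit()


def answer_on(conn, model, prompt, day):
    """Return the latest answer captured on `day` (YYYY-MM-DD), or None."""
    row = conn.execute(
        "SELECT answer FROM answers "
        "WHERE model = ? AND prompt = ? AND captured_at LIKE ? "
        "ORDER BY captured_at DESC LIMIT 1",
        (model, prompt, day + "%"),
    ).fetchone()
    return row[0] if row else None


def sentence_diff(old: str, new: str):
    """Return (removed_sentences, added_sentences) between two answers."""
    def split(text):
        return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

    removed, added = [], []
    for line in difflib.ndiff(split(old), split(new)):
        if line.startswith("- "):
            removed.append(line[2:])
        elif line.startswith("+ "):
            added.append(line[2:])
    return removed, added
```

With this in place, comparing November 1st against November 15th is two `answer_on` calls and one `sentence_diff`, and the output is the exact sentences that disappeared or appeared.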

Pillar 3: Delta Detection

Infrastructure doesn't just show you data. It interprets change.

You need automated rules that flag meaningful deviations:

- Critical: "Brand understanding score dropped by more than 15%." This often signals a hallucination.

- Warning: "Sentiment shifted from positive to neutral." This often signals trust erosion.

You don't have time to read 5,000 AI responses. You need a system that surfaces the five responses that matter. This is deterministic delta detection: using explicit rules to separate signal from noise.
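The two rules above are simple enough to write down directly. Here is a minimal sketch. I'm assuming the 15% threshold means a relative drop against the previous reading and that sentiment arrives as a plain label. The `Reading` type and `detect_deltas` function are hypothetical names, not part of any real tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Reading:
    understanding_score: float  # assumed scale: 0 to 100
    sentiment: str              # assumed labels: "positive", "neutral", "negative"


def detect_deltas(previous: Reading, current: Reading, drop_threshold: float = 0.15) -> List[str]:
    """Apply fixed rules to two consecutive readings and return any alerts."""
    alerts = []
    if previous.understanding_score > 0:
        # Relative drop versus the previous reading (an assumption; the
        # threshold could also be read as absolute points).
        drop = (previous.understanding_score - current.understanding_score) / previous.understanding_score
        if drop > drop_threshold:
            alerts.append(f"CRITICAL: brand understanding score dropped {drop:.0%}")
    if previous.sentiment == "positive" and current.sentiment == "neutral":
        alerts.append("WARNING: sentiment shifted from positive to neutral")
    return alerts


print(detect_deltas(Reading(80, "positive"), Reading(60, "neutral")))
# ['CRITICAL: brand understanding score dropped 25%',
#  'WARNING: sentiment shifted from positive to neutral']
```

Because the rules are plain comparisons, the same inputs always produce the same alerts, which is the point of keeping this layer deterministic.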

Without it, you're drowning in data and starving for insight. Which, ironically, is the same problem dashboards were supposed to solve twenty years ago.

Pillar 4: Useful Output

A dashboard gives you a chart. Infrastructure gives you a ticket.

The system must translate signals into prioritized tasks. If Gemini hallucinates your pricing, that's a P0 critical task. If you drop one spot in a list of twenty, that's a P3 low priority.

The goal isn't awareness. It's correction. The system pushes data into your workflow, whether that's Slack, Jira, or Asana, so you can correct the source content or update your structured data immediately.
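As a sketch of what "a ticket, not a chart" can mean in practice, the snippet below maps signal types to priorities and sorts the resulting queue. Only the P0 and P3 mappings come from the examples above. The signal names, the P2 default, and the `Ticket` shape are my assumptions, and actually posting to Slack, Jira, or Asana is left out on purpose.

```python
from dataclasses import dataclass
from typing import List

# Only these two mappings come from the text; extend to suit your team.
PRIORITY_BY_SIGNAL = {
    "pricing_hallucination": "P0",
    "minor_rank_drop": "P3",
}
DEFAULT_PRIORITY = "P2"  # assumption: unknown signals get a middle priority


@dataclass(frozen=True)
class Ticket:
    priority: str
    title: str


def to_ticket(signal_kind: str, model: str, detail: str) -> Ticket:
    priority = PRIORITY_BY_SIGNAL.get(signal_kind, DEFAULT_PRIORITY)
    return Ticket(priority, f"[{priority}] {model}: {detail}")


def triage(tickets: List[Ticket]) -> List[Ticket]:
    """Most urgent first. String sort works because priorities are P0 to P3."""
    return sorted(tickets, key=lambda t: t.priority)


queue = triage([
    to_ticket("minor_rank_drop", "ChatGPT", "dropped one spot in a list of twenty"),
    to_ticket("pricing_hallucination", "Gemini", "states the wrong starting price"),
])
print(queue[0].title)  # [P0] Gemini: states the wrong starting price
```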

I've seen too many teams build beautiful monitoring systems that nobody acts on. If the infrastructure doesn't connect to execution, it's just a more expensive dashboard.

Why Is Time Awareness the Most Critical Difference?

This is the piece most people miss entirely.

Consider two brands:

- Brand A: 60% visibility. Trending down from 80% last month.

- Brand B: 40% visibility. Trending up from 10% last month.

A static dashboard tells you "Brand A is winning." Infrastructure tells you "Brand A is in crisis."

The number without the trajectory is worse than useless. It's misleading. And in a boardroom, misleading data drives bad decisions faster than no data at all.
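The arithmetic behind the two brands above is worth spelling out, because the trajectory falls out of the same two numbers a dashboard already has. A tiny sketch, with `trend` as a hypothetical helper:

```python
from typing import Tuple


def trend(current: float, previous: float) -> Tuple[float, float]:
    """Return (change in points, relative change) between two visibility readings."""
    if previous == 0:
        raise ValueError("previous reading must be non-zero")
    change = current - previous
    return change, change / previous


print(trend(60, 80))  # (-20, -0.25) -> Brand A lost a quarter of its visibility
print(trend(40, 10))  # (30, 3.0)    -> Brand B quadrupled its visibility
```

Brand A still has the bigger number today, but it is the one heading the wrong way.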

What Is Narrative Drift and Why Should It Terrify You?

AI models rarely flip from "love" to "hate" instantly. They drift.

- Week 1: The AI calls you the "industry leader."

- Week 4: The AI calls you a "popular option."

- Week 8: The AI calls you a "legacy tool."

- Week 12: The AI recommends your competitor as the "modern alternative."

Check a dashboard once a quarter and you miss the drift entirely. You only see the result at Week 12, when reversing it requires a massive effort.

Infrastructure plots these semantic shifts on a timeline. It lets you intervene at Week 4, reinforcing your innovation signals through Generative Engine Optimization before the "legacy" label sticks.

I've watched this happen to established brands in real time. The shift is subtle enough that nobody notices until the sales team starts hearing "we went with [competitor] because ChatGPT recommended them." By then, the narrative has hardened.

The brands that catch it early have infrastructure. The brands that catch it late have dashboards.
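Here is one crude way to put the week-by-week drift above on a timeline: score each answer by the descriptor it uses and flag the first week the score falls below where it started. The phrase list and scores are assumptions built from the example. A real system would need proper language analysis, not substring matching.

```python
from typing import Dict, Optional

# Hypothetical descriptor scale, taken from the example phrases above.
DESCRIPTOR_SCORES = {
    "industry leader": 3,
    "popular option": 2,
    "legacy tool": 1,
}


def score_answer(answer: str) -> Optional[int]:
    """Return the score of the first known descriptor found, or None."""
    text = answer.lower()
    for phrase, score in DESCRIPTOR_SCORES.items():
        if phrase in text:
            return score
    return None


def first_drift_week(weekly_answers: Dict[int, str]) -> Optional[int]:
    """Return the first week whose descriptor scores below the baseline week."""
    baseline = None
    for week in sorted(weekly_answers):
        score = score_answer(weekly_answers[week])
        if score is None:
            continue  # no known descriptor this week; skip rather than guess
        if baseline is None:
            baseline = score
        elif score < baseline:
            return week
    return None


history = {
    1: "Acme is the industry leader in this space.",
    4: "Acme is a popular option for mid-size teams.",
    8: "Acme is a legacy tool that some teams still use.",
}
print(first_drift_week(history))  # 4
```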

What Are You Actually Risking Without This Infrastructure?

Let me be direct about the business risks, because this isn't theoretical.

The Blind Spot Risk

You could be celebrating a 5% increase in Google traffic while losing 20% of your share of voice in ChatGPT responses. Without infrastructure, you can't see the leak. You don't even know the pipe exists.

The Hallucination Tax

If an AI model misquotes your pricing or features, it silently repels leads. You don't know they're being turned away. You just see a dip in demo requests and can't explain why.

Infrastructure alerts you to these hallucinations so you can deploy fixes immediately. A hallucination that runs uncorrected for three months can do more damage than a bad press cycle.

Misinterpreted Volatility

Without the context of time and cross-model comparison, you'll overreact to noise. You might panic because your score dropped on Tuesday, not realizing that everyone's score dropped on Tuesday due to a ChatGPT model update.

Infrastructure provides the competitive baseline to know when a drop is weather and when it's climate change. That distinction matters enormously when you're deciding where to allocate resources.
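The weather-versus-climate check can be approximated by comparing your own change against the median change across tracked competitors over the same period. A minimal sketch follows. The three-point tolerance and the labels are arbitrary assumptions you would tune against your own data.

```python
from statistics import median
from typing import List


def classify_drop(own_change: float, peer_changes: List[float], tolerance: float = 3.0) -> str:
    """Classify a score change (in points) against the competitor baseline."""
    if not peer_changes:
        raise ValueError("need at least one peer to form a baseline")
    if own_change >= -tolerance:
        return "stable"
    market_change = median(peer_changes)
    # If we fell roughly as much as (or less than) the market, treat it as noise.
    if own_change >= market_change - tolerance:
        return "market-wide"
    return "brand-specific"


print(classify_drop(-10, [-9, -11, -10]))  # market-wide
print(classify_drop(-10, [0, 1, -1]))      # brand-specific
```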

How Do You Actually Build This?

You don't need to build from scratch. You need to change how you stack your tools and processes.

Step 1: Establish a Single Source of Truth

Stop relying on scattered screenshots in Slack. Centralize your AI monitoring.

Use a tool like the AI Brand Audit as your data warehouse. Make sure it's capturing daily or weekly snapshots across multiple models: Gemini, ChatGPT, Claude. If you're only watching one model, you're only seeing one version of the story.

Step 2: Define Your Invariants

What are the non-negotiable facts about your brand? Write them down.

"We are an enterprise platform." "Our price starts at $500." "We offer API access."

Then set up alerts that trigger specifically when the AI's description contradicts these invariants. This turns monitoring into brand protection. It's the difference between watching the weather and building a roof.
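To show the shape of an invariant check, here is a deliberately crude sketch using regular expressions against the three example facts above. The patterns are assumptions and will miss many phrasings. Anything production-grade would need real language understanding, but the structure (named invariants, each with a pass/fail check) carries over.

```python
import re
from typing import Callable, Dict, List


def _price_ok(answer: str, expected: int = 500) -> bool:
    # Passes if no dollar amount is mentioned, or if the expected one appears.
    amounts = [int(m.replace(",", "")) for m in re.findall(r"\$\s?(\d[\d,]*)", answer)]
    return not amounts or expected in amounts


def _api_ok(answer: str) -> bool:
    pattern = r"\b(no|without|lacks|does not offer|doesn't offer)\s+(an?\s+)?API\b"
    return re.search(pattern, answer, re.IGNORECASE) is None


def _enterprise_ok(answer: str) -> bool:
    pattern = r"\b(not|isn't)\s+(an?\s+)?enterprise\b"
    return re.search(pattern, answer, re.IGNORECASE) is None


# Hypothetical invariant names mirroring the three example facts.
INVARIANTS: Dict[str, Callable[[str], bool]] = {
    "enterprise_platform": _enterprise_ok,
    "price_starts_at_500": _price_ok,
    "offers_api_access": _api_ok,
}


def violated_invariants(answer: str) -> List[str]:
    """Return the names of invariants the answer appears to contradict."""
    return [name for name, ok in INVARIANTS.items() if not ok(answer)]


print(violated_invariants("Acme starts at $300 and does not offer API access."))
# ['price_starts_at_500', 'offers_api_access']
```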

Step 3: Replace the Monthly Report with a Delta Review

Kill the monthly "SEO Ranking Report" meeting. Replace it with a "Narrative Delta Review."

The agenda is simple:

- How has our share of voice changed vs. competitors?

- Have any new hallucinations appeared?

- Has sentiment trended up or down?

- What specific tasks do we need to execute to correct negative deltas?

This is where schema updates, citation strategies, and content corrections get assigned. Not discussed. Assigned.
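For the first agenda item, share of voice can be computed straight from stored answers: the fraction of answers in a period that mention each brand, compared across two periods. This sketch assumes simple case-insensitive name matching, and the brand names are invented.

```python
from typing import Dict, List


def share_of_voice(answers: List[str], brands: List[str]) -> Dict[str, float]:
    """Fraction of answers that mention each brand (case-insensitive)."""
    if not answers:
        return {brand: 0.0 for brand in brands}
    lowered = [a.lower() for a in answers]
    return {
        brand: sum(brand.lower() in a for a in lowered) / len(lowered)
        for brand in brands
    }


def sov_delta(previous: Dict[str, float], current: Dict[str, float]) -> Dict[str, float]:
    """Change in share of voice per brand between two periods."""
    return {brand: current[brand] - previous.get(brand, 0.0) for brand in current}


last_month = ["Acme is best.", "Acme or Globex.", "Acme works well.", "Try Globex."]
this_month = ["Globex is best.", "Acme or Globex.", "Globex works well.", "Try Acme."]
brands = ["Acme", "Globex"]
print(sov_delta(share_of_voice(last_month, brands), share_of_voice(this_month, brands)))
# {'Acme': -0.25, 'Globex': 0.25}
```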

Step 4: Connect to Your Execution Layer

Don't let data die in the dashboard.

If a competitor breakout is detected, meaning a competitor appears where they previously didn't, trigger a deep analysis to reverse-engineer their strategy. If a hallucination appears, route it directly to the team that can fix the underlying content or structured data.
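A competitor breakout, as defined above, is a set difference: brands that appear in the current answers but not in the previous ones. A small sketch, assuming you already extract the set of brand names mentioned per period (the extraction itself is not shown, and the names are hypothetical):

```python
from typing import List, Set


def detect_breakouts(previous_brands: Set[str], current_brands: Set[str], own_brand: str) -> List[str]:
    """Return competitors mentioned now that were absent before, sorted by name."""
    return sorted(current_brands - previous_brands - {own_brand})


print(detect_breakouts({"Acme", "Globex"}, {"Acme", "Globex", "Initech"}, own_brand="Acme"))
# ['Initech']
```

Each name returned here is a candidate trigger for the deeper analysis, so it makes sense to route the result into the same ticketing path as hallucinations.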

Akii is built to fit into this kind of workflow. You can explore how the features connect monitoring to action, or check pricing to see what fits your scale.

Where Is This Heading?

The transition to AI search isn't just a change in technology. It's a change in velocity. Information travels faster, narratives solidify quicker, and competitors can appear out of nowhere inside a single AI-generated answer.

A dashboard is an artifact of a slower time. It's a passive document designed for passive consumption.

Infrastructure is an active system designed for defense.

I've been through enough technology cycles to know this pattern. The companies that adapt their measurement systems early don't just survive the transition. They define the next era. The ones that cling to the old reports spend years trying to figure out what happened.

The brands that dominate in 2026 won't have the prettiest charts. They'll be the ones that can hear the whisper of a narrative shift and correct it before it becomes a shout.

That's not a dashboard problem. That's an infrastructure problem. Treat it that way now, and you'll be in a very different position when the rest of your market finally catches up.