AI Search Already Knows Who Your Competitors Are. Do You?

For the last decade, competitive intelligence meant reverse-engineering spreadsheets. We tracked keyword rankings, monitored backlink profiles, estimated organic traffic. If a competitor ranked number one on Google for a core industry term, we assumed they were winning.

That assumption is breaking down.

When a buyer asks ChatGPT, Gemini, or Perplexity to "compare the top enterprise CRM platforms," the AI doesn't return a list of links. It acts as an analyst. It pulls from reviews, pricing pages, PR mentions, and forum discussions, then synthesizes a conversational answer. It decides who the leaders are, who the budget alternatives are, and who isn't worth mentioning at all.

Here's what most people miss. Because AI models are reasoning engines designed to summarize the web's consensus, they've inadvertently become the most transparent competitive intelligence systems ever built. Every time an AI generates an answer about your industry, it reveals exactly how the market perceives your competitors. What strengths they're known for. Where their weaknesses are. And whether your brand exists in the conversation at all.

I've watched this pattern play out across multiple technology cycles. The companies that see the shift early don't just adapt. They build a structural advantage that compounds over time.

Why Are AI Answers a Better Market Signal Than Traditional Research?

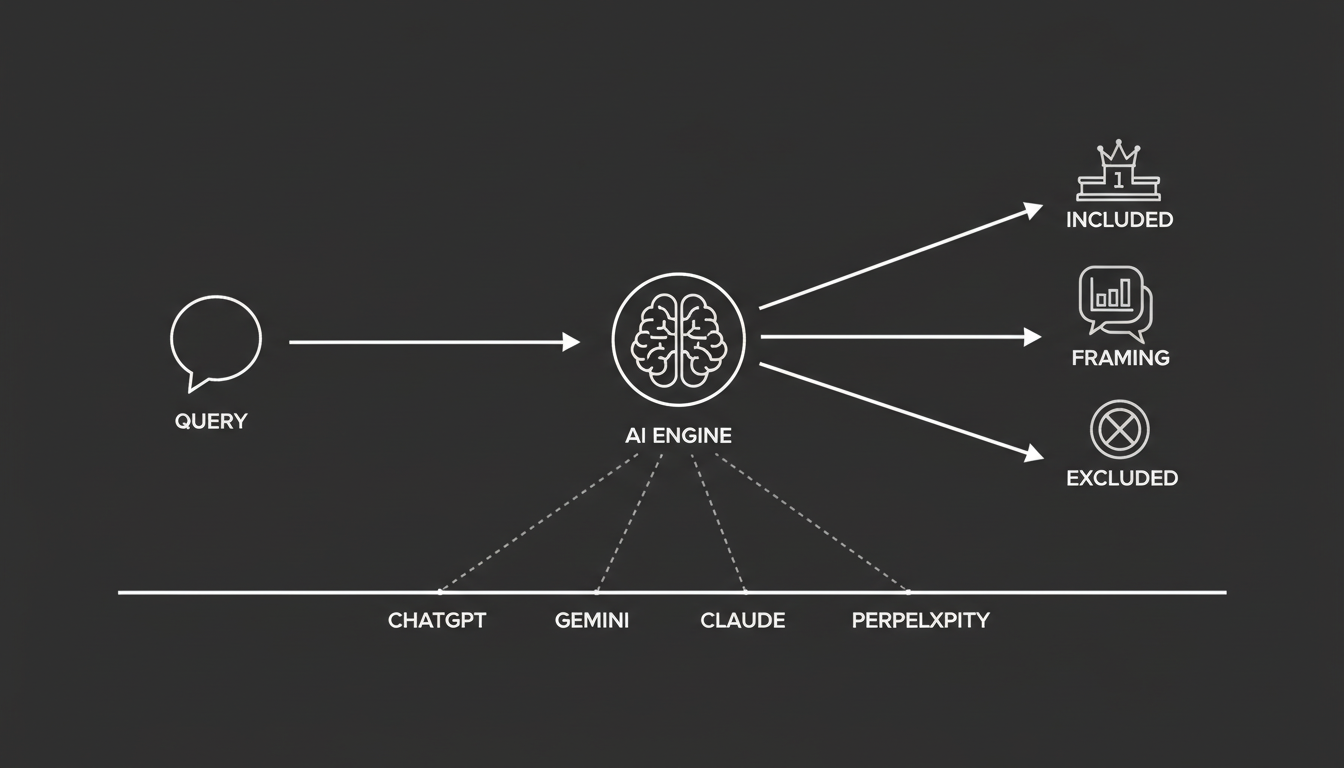

To understand why AI search functions as competitive intelligence, you need to understand how models like Claude, Gemini, and ChatGPT construct their answers. They don't rank web pages by keyword density. They work from learned associations between entities and the relationships among them, which in practice behaves much like a knowledge graph.

When you prompt an AI for a recommendation, you're effectively asking it to query its knowledge graph and output the current public consensus on a specific category. Traditional market research takes months and costs thousands of dollars. AI search provides an instant, synthesized snapshot.

By analyzing AI outputs, you can uncover things that used to require expensive consultants.

Entity strength. Which brands have the most consistent, structured data across the web.

External corroboration. Which trusted third-party sources, like G2, Gartner, or TechCrunch, are driving the most authority in your space.

Feature perception. Which specific product features the market associates most closely with your competitors.

That's real intelligence. And it's sitting right there in the answer box.

What Does It Mean When a Competitor Gets Included, or Left Out?

In AI-first search, visibility is a zero-sum game. The AI acts as a gatekeeper, filtering noise to present a curated shortlist of options.

Inclusion signals that the AI views a brand as a verified node. Clear attributes, consistent facts, high-trust external validation. Exclusion is equally telling. If a major traditional competitor is completely missing from an AI answer, it reveals a structural flaw in their digital presence. They might lack proper Schema.org markup. Their entity profile might be inconsistent enough that the AI treats them as a hallucination risk.

Think about that for a second. A company that spent millions on SEO and brand awareness can be invisible to the AI that's increasingly shaping buyer decisions. Not because they did something wrong in the old model, but because they didn't adapt to the new one.

When you track AI search, you aren't just seeing who's winning. You're seeing who the reasoning engine has deemed irrelevant.

How Do You Read Competitor Inclusion Patterns?

If you want to use AI search for competitive intelligence, you can't run a single prompt and call it a day. You need to look for inclusion patterns across multiple AI models over time.

Who shows up everywhere?

When you run a basket of prompts across different models, certain brands appear in almost every answer. These are often not the brands with the highest SEO traffic. They're the brands with the highest citation density.

I've seen this surprise people repeatedly. A "hidden competitor," a brand you previously ignored because they had a mediocre SEO blog, absolutely dominates ChatGPT and Perplexity recommendations. Why? Because they have a pristine Wikidata entry, a highly active Trustpilot profile, and frequent mentions in digital PR. AI models reward that kind of brand saturation with consistent inclusion.

Your SEO dashboard won't show you this. Your AI monitoring will.

Who disappears, and what does that tell you?

Monitoring AI search also lets you track narrative drift and disappearance. Because AI models update continuously, a competitor's visibility can vanish overnight.

If a competitor disappears from Claude but remains in ChatGPT, it likely means they've lost a critical high-authority citation. Claude is highly conservative and relies on unimpeachable trust signals. If a competitor suddenly drops out of Google's AI Overviews, it often signals a technical failure. A broken FAQ schema. A site architecture issue.

By tracking who disappears, you can identify exactly when a competitor's digital infrastructure breaks. That gives you a window to capture their lost market share before they even realize what happened.
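To make the disappearance check concrete, here is a minimal sketch that compares two snapshots of which brands each model mentioned. The snapshot format, the brand names, and the `disappearances` helper are all assumptions for illustration. A brand dropping out of one model is a prompt to investigate, not proof of any particular cause.

```python
def disappearances(before, after):
    """Compare two snapshots shaped like {model: set_of_brands_mentioned}.

    Returns {brand: [models the brand vanished from]}.
    """
    dropped = {}
    for model in sorted(before):
        missing = before[model] - after.get(model, set())
        for brand in sorted(missing):
            dropped.setdefault(brand, []).append(model)
    return dropped


# Hypothetical snapshots taken a week apart.
last_week = {
    "chatgpt": {"Globex", "Initech"},
    "claude": {"Globex", "Initech"},
}
this_week = {
    "chatgpt": {"Globex", "Initech"},
    "claude": {"Globex"},
}

print(disappearances(last_week, this_week))
```

In this made-up data, Initech is still present in ChatGPT but gone from Claude, which is exactly the kind of split worth digging into.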

Who Controls the Narrative in Category Questions?

Being mentioned in an AI answer is only the beginning. The real battleground is how the AI frames you relative to competitors.

How does framing actually work?

When an AI synthesizes a comparison, it assigns a specific framing to each entity based on the sentiment of its training data. If your competitor has aggressively managed their review velocity and published authoritative, data-driven reports, the AI will likely frame them as the "industry standard" or the "premium choice."

If the AI finds conflicting data or negative sentiment, it will frame them with cautionary language. "Budget alternative." "Some users report complex integrations."

This framing advantage is devastating in the zero-click era. If an AI tells a buyer that your competitor is the safest choice, the buyer will likely bypass your website entirely. They won't even know you exist.

What's the AI visibility flywheel?

AI models are path-dependent. When a model repeatedly cites a competitor as a leader, and users interact positively with that answer, the model reinforces that brand's position as a foundational truth.

I think of this as a flywheel effect. The brands that get recommended get more engagement, which validates the recommendation, which makes the model more confident in recommending them again. Analyzing AI narratives lets you see which competitors are currently spinning that flywheel and locking in their status as the category default.

If you're not tracking this, you're watching the game on a two-week delay. At best.

Where Are Your Competitors Actually Vulnerable?

The most valuable aspect of using AI as competitive intelligence is its ability to highlight exactly where competitors are exposed. You can reverse-engineer AI outputs to find strategic gaps in the market.

What are intent gaps?

By running a diverse set of evaluative and transactional prompts, you can map the boundaries of your competitors' knowledge graphs. A competitor might dominate broad queries like "best accounting software" but completely disappear for specific intent-based queries like "best accounting software for freelance graphic designers."

When the AI fails to recommend a competitor for a specific niche, it means that competitor lacks content coverage and intent-based schema mapping. Those missing prompts are your roadmap for content creation.

This is where smaller, more focused brands can win against larger incumbents. The big players optimize for broad categories. The specific, high-intent queries are often wide open.
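One rough way to surface intent gaps is to record which brands each prompt's answer named, then list the prompts where a given competitor never appears. The sketch below assumes you have already collected that mapping. The `scan` data, the brand names, and the `intent_gaps` helper are hypothetical.

```python
# Hypothetical scan results: prompt -> set of brands named in the AI answer.
scan = {
    "best accounting software": {"Globex", "Initech"},
    "best accounting software for freelance graphic designers": {"Initech"},
    "best accounting software for nonprofits": set(),
}


def intent_gaps(scan, competitor):
    """Return the prompts whose answers did not mention the competitor."""
    return sorted(
        prompt for prompt, brands in scan.items() if competitor not in brands
    )


for prompt in intent_gaps(scan, "Globex"):
    print(prompt)
```

Here Globex shows up for the broad query and is absent from both niche ones. Those niche prompts are the candidates for your content roadmap.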

What are knowledge gaps?

AI models are notorious for misrepresenting brands when their data is unstructured. When you run an AI audit on your competitors, look for hallucinations or missing facts.

Does the AI mistakenly state that your competitor doesn't have an API? Does it quote an outdated price for their software? If AI models consistently misunderstand a competitor's capabilities, that competitor has a brand understanding failure.

You can exploit this gap by aggressively optimizing your own answer engine presence, using Product and Offer schema so the AI knows exactly how capable and cost-effective your solution is by comparison. The competitor won't even know they're losing deals to bad AI data. You will.
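For reference, Product and Offer markup is usually published as JSON-LD using the Schema.org vocabulary. The sketch below builds a minimal example in Python. The product name, price, and URL are placeholders, and a real page would typically carry more properties than this.

```python
import json


def product_jsonld(name, description, price, currency, url):
    """Build a minimal Schema.org Product with a nested Offer."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # price as a plain decimal string
            "priceCurrency": currency,  # ISO 4217 code, e.g. "USD"
            "url": url,
        },
    }


# Placeholder values for illustration only.
markup = product_jsonld(
    name="Acme Books",
    description="Accounting software with a public REST API.",
    price=29,
    currency="USD",
    url="https://example.com/pricing",
)

# This JSON would go inside a <script type="application/ld+json"> tag.
print(json.dumps(markup, indent=2))
```

Stating the current price and key capabilities in structured form gives crawlers and answer engines one unambiguous source for facts they might otherwise get wrong.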

How Do You Actually Turn This Into a System?

Treating AI search as competitive intelligence requires moving away from manual, one-off ChatGPT queries and building a systematic monitoring process. Here's a practical framework based on the principles we apply at Akii.

Step 1: Build your prompt basket

You can't rely on a single keyword. You need to simulate the full spectrum of user intent. Build a basket of 20 to 50 prompts categorized by type:

Definitional: "What is [Competitor Name]?"

Comparative: "Compare [Your Brand] vs. [Competitor]."

Evaluative: "What are the best [Category] tools for [Specific Industry]?"

This basket becomes your measurement instrument. Without it, you're guessing.
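A prompt basket is easy to generate from templates. The sketch below is one possible approach, not a prescribed format: the templates mirror the three prompt types above, and the brand, competitor, and industry names are made up.

```python
from itertools import product

# One list of templates per prompt type. Extend these to reach 20 to 50 prompts.
TEMPLATES = {
    "definitional": ["What is {competitor}?"],
    "comparative": ["Compare {brand} vs. {competitor}."],
    "evaluative": ["What are the best {category} tools for {industry}?"],
}


def build_prompt_basket(brand, competitors, category, industries):
    """Expand every template over each competitor and industry, without repeats."""
    basket = []
    for prompt_type, templates in TEMPLATES.items():
        for template, competitor, industry in product(
            templates, competitors, industries
        ):
            prompt = template.format(
                brand=brand,
                competitor=competitor,
                category=category,
                industry=industry,
            )
            entry = {"type": prompt_type, "prompt": prompt}
            # Templates that ignore a field would otherwise produce duplicates.
            if entry not in basket:
                basket.append(entry)
    return basket


# Hypothetical inputs.
basket = build_prompt_basket(
    brand="Acme CRM",
    competitors=["Globex", "Initech"],
    category="CRM",
    industries=["real estate", "healthcare"],
)
for entry in basket:
    print(f"{entry['type']:<13} {entry['prompt']}")
```

Keeping the basket in code means you run the exact same prompts every time, which is what makes later comparisons meaningful.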

Step 2: Run multi-model competitive scans

AI models behave differently. Run your prompt basket across multiple reasoning engines, including ChatGPT, Gemini, Claude, and Perplexity, to get a true market view.

Record how often your competitors appear, the sentiment of the output, and how they're positioned relative to your brand. One model's answer is an anecdote. Patterns across models are intelligence.
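Here is a minimal sketch of the "how often do they appear" part of the scan. It assumes you have already saved each answer as text, whether by hand or through each vendor's API. The sample answers and brand names are invented, and sentiment and positioning are deliberately left out because they need more than simple string matching.

```python
import re
from collections import defaultdict

# Hypothetical recorded answers: (model, prompt, answer_text).
answers = [
    ("chatgpt", "best CRM tools", "Top picks are Globex and Initech."),
    ("gemini", "best CRM tools", "Globex leads; Acme CRM is a budget alternative."),
    ("claude", "best CRM tools", "Consider Globex for enterprise teams."),
    ("perplexity", "best CRM tools", "Initech and Globex are most often cited."),
]
brands = ["Acme CRM", "Globex", "Initech"]


def mention_rates(answers, brands):
    """Return {brand: {model: share of that model's answers naming the brand}}."""
    totals = defaultdict(int)
    hits = defaultdict(lambda: defaultdict(int))
    for model, _prompt, text in answers:
        totals[model] += 1
        for brand in brands:
            # Whole-word, case-insensitive match on the brand name.
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                hits[brand][model] += 1
    return {
        brand: {model: hits[brand][model] / totals[model] for model in totals}
        for brand in brands
    }


rates = mention_rates(answers, brands)

# Brands that appear at least once in every model scanned.
everywhere = [
    brand for brand, per_model in rates.items()
    if all(rate > 0 for rate in per_model.values())
]
print(everywhere)
```

With a full basket, the per-model rates show at a glance which brands are consistent across engines and which ones depend on a single model.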

Step 3: Reverse-engineer the citations

When an AI recommends a competitor, find out why.

Look at transparency-first engines like Perplexity, which provide clickable citations for the vast majority of their answers. Which external sources is the AI citing to validate your competitor? A specific G2 comparison grid? A Wikipedia entry? A TechCrunch article?

That citation map becomes your immediate PR and content roadmap. If the AI relies on a specific digital directory to build trust in your competitor, you need your brand listed and optimized on that exact same directory.
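A citation map can start as a simple tally of which domains get cited. The sketch below assumes you have copied the citation URLs out of answers that recommended a competitor. The URLs shown are illustrative placeholders, not real citations.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical citation URLs collected from answers recommending a competitor.
cited_urls = [
    "https://www.g2.com/categories/crm",
    "https://en.wikipedia.org/wiki/Customer_relationship_management",
    "https://www.g2.com/compare/globex-vs-initech",
    "https://techcrunch.com/2024/01/01/example-story/",
]


def citation_map(urls):
    """Count citations per domain, most-cited first."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]  # treat www.g2.com and g2.com as the same source
        counts[host] += 1
    return counts.most_common()


for domain, count in citation_map(cited_urls):
    print(f"{count:>3}  {domain}")
```

The domains at the top of the list are where your brand needs to be present first.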

Step 4: Automate the intelligence loop

AI search is volatile. A competitor might update their website schema on a Tuesday and suddenly dominate Google AI Overviews on a Thursday.

This is where Akii's competitive intelligence features come in. Instead of relying on static PDF audits or manual prompt checks, you can deploy continuous monitoring that captures brand state snapshots over time. By tracking the delta week over week, you get alerts when a competitor gains share of voice, letting you reverse-engineer their new strategy before the advantage compounds.

Manual checks are fine for getting started. But if you're serious about this as an ongoing intelligence function, automation is the only way to keep up.
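If you want to prototype the week-over-week delta yourself before automating it, the arithmetic is simple. The sketch below is a generic illustration, not a description of how Akii computes anything. The mention counts, the 5-point threshold, and the function names are all assumptions.

```python
def share_of_voice(mention_counts):
    """Convert raw mention counts into each brand's share of all mentions."""
    total = sum(mention_counts.values())
    return {
        brand: (count / total if total else 0.0)
        for brand, count in mention_counts.items()
    }


def weekly_alerts(previous, current, threshold=0.05):
    """Return (brand, delta) pairs whose share of voice moved by >= threshold."""
    prev_sov = share_of_voice(previous)
    curr_sov = share_of_voice(current)
    alerts = []
    for brand in sorted(set(prev_sov) | set(curr_sov)):
        delta = curr_sov.get(brand, 0.0) - prev_sov.get(brand, 0.0)
        if abs(delta) >= threshold:
            alerts.append((brand, round(delta, 3)))
    return alerts


# Hypothetical mention counts from two weekly runs of the same prompt basket.
last_week = {"Acme CRM": 10, "Globex": 30, "Initech": 10}
this_week = {"Acme CRM": 10, "Globex": 20, "Initech": 20}

for brand, delta in weekly_alerts(last_week, this_week):
    print(f"{brand}: {delta:+.1%}")
```

In this sample, Globex loses 20 points of share and Initech gains 20, while Acme CRM holds steady and triggers no alert.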

What Happens If You Ignore This?

Traditional SEO tools will tell you where your competitors rank on a page. AI search intelligence tells you if your competitors are part of the conversation at all. Those are fundamentally different questions.

In 2025 and beyond, AI search isn't just another channel. It's the most accurate real-time mirror of digital authority available. The answers generated by these models shape buyer perception, frame market leaders, and quietly disqualify brands that fail to adapt.

I've seen this pattern before. In the early days of SEO, the companies that understood how search engines worked gained a decade-long advantage. The same thing is happening now with AI answer engines, but the window is shorter and the stakes are higher.

By actively monitoring AI search as a competitive intelligence system, you stop guessing what your competitors are doing and start seeing exactly how the world's most powerful reasoning engines perceive them. The brands that build this intelligence loop now won't just outperform their competitors. They'll understand the game their competitors don't even know they're playing.

If you want to see how Akii tracks these patterns, start here. The data is already being generated. The only question is whether you're reading it.