The Old Scoreboard Is Broken

For twenty years, marketing success came down to one question: Are we on page one of Google?

That question doesn't work anymore.

We've moved from ten blue links to synthesized answers. A growing share of users skip the results page entirely and ask AI assistants like ChatGPT, Gemini, and Claude for direct recommendations. The question that matters now isn't "What rank do we hold?" It's "Are we cited in the answer?"

If your brand isn't in that answer, you're invisible. Even if you rank number one in traditional search.

To survive this shift, you need to understand how the new scoring system actually works. Not the marketing version. The mechanical version.

Why Does SEO Logic Fail in AI Search?

Here's the fundamental mistake I see brands make: they apply SEO thinking to AI systems.

Traditional search engines are indexes. They match keywords in your query to keywords on a page. AI models are reasoning engines. They don't match words. They build representations of entities, infer meaning, and reference brands based on understanding and authority.

AI models don't "rank" a list of links. They form knowledge graphs, reason about relationships, and prioritize what I'd call verified nodes: brands with clear, consistent, well-corroborated identities.

If an AI can't reason about who you are because your data is scattered, inconsistent, or unstructured, it won't guess. It will leave you out. The model is built to minimize hallucination risk. Excluding you is the safe move.

This is a different game. Most brands are still playing the old one.

What Are AI Models Actually Evaluating?

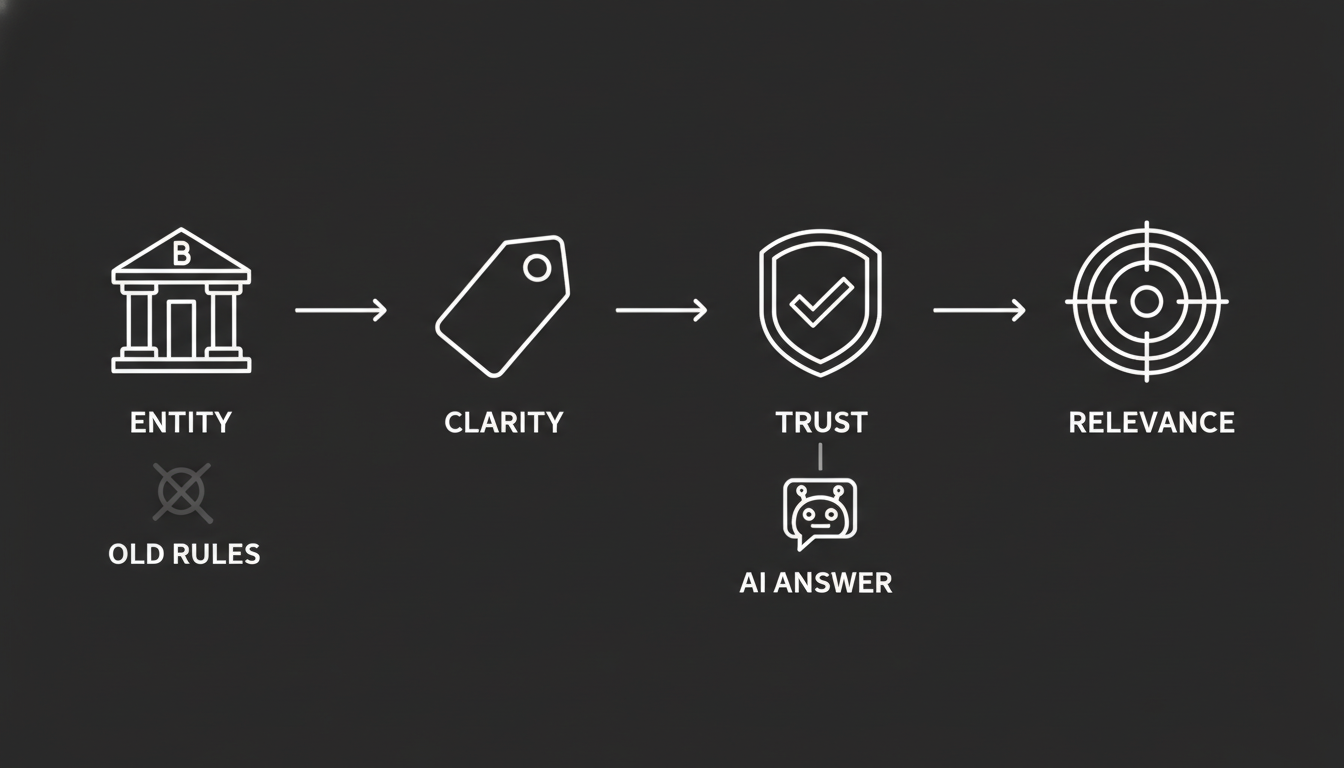

When a user asks a high-intent question like "What is the best CRM for small businesses?", the AI model runs your brand through a rapid four-part interrogation. It happens almost instantly, but the logic is clear.

1. Who are you?

This is entity identification. The model scans for verified nodes. Are you a distinct, recognized entity in its knowledge graph? Or just a string of text on a webpage?

If you don't exist as a clear entity, you can't be recommended. Full stop.

2. What do you do?

This is functional clarity. Does the model accurately classify your product, or is it guessing? If your descriptions vary wildly across sources, the model can't map your solution to the user's intent.

It needs to understand what you do with precision. Not marketing language. Structural clarity.

3. Can you be trusted?

AI models are built to minimize risk. They look for external corroboration. Are you cited by sources the model already trusts, or only by your own website?

Think about it from the model's perspective. If the only entity saying you're great is you, that's not evidence. That's a claim.

4. Are you relevant right now?

Does your content cover the specific attributes the user needs? Enterprise-grade? Free trial? HIPAA compliant? Models infer whether a product solves a specific problem based on relationships defined in your entity graph.

If those relationships aren't explicit, you don't get matched. Even if you actually have the feature.

What Specific Signals Drive AI Recommendations?

This is where it gets practical. There are four signal categories that determine whether you show up in AI-generated answers. Miss any one of them and you're likely excluded.

Entity clarity

This is the foundation. If your brand description varies between your website, Crunchbase, and LinkedIn, the model loses confidence. It can't reconcile conflicting information, so it drops you.

The fix requires discipline. Build what amounts to a master entity profile. One unified description, one taxonomy, one boilerplate. Replicate it everywhere. I know this feels counterintuitive. SEO taught us to vary our copy. In the AI context, consistency is what builds confidence.
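
One rough way to audit this is to compare the descriptions you publish on each profile and flag the pairs that drift apart. The sketch below is only an illustration: the brand "Acme CRM", the sample descriptions, and the 0.8 threshold are all made-up assumptions, and plain string similarity is a crude stand-in for whatever a model actually does when it reconciles sources.

```python
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical descriptions, copied by hand from each profile (placeholder data).
descriptions = {
    "website": "Acme CRM is a customer relationship management platform for small businesses.",
    "crunchbase": "Acme CRM is a customer relationship management platform for small businesses.",
    "linkedin": "We help teams crush their sales goals with AI-powered growth tools.",
}


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences are ignored."""
    return " ".join(text.lower().split())


def inconsistent_pairs(descs: dict[str, str], threshold: float = 0.8) -> list[tuple[str, str, float]]:
    """Return (source_a, source_b, similarity) for every pair below the threshold."""
    flagged = []
    for (a, text_a), (b, text_b) in combinations(descs.items(), 2):
        ratio = SequenceMatcher(None, normalize(text_a), normalize(text_b)).ratio()
        if ratio < threshold:
            flagged.append((a, b, round(ratio, 2)))
    return flagged


for source_a, source_b, score in inconsistent_pairs(descriptions):
    print(f"{source_a} vs {source_b}: similarity {score}")
```

With this sample data, the LinkedIn copy is flagged against both other sources, which is the kind of drift a master entity profile is meant to eliminate.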

Reputation signals

For a generative model to cite you, it needs external corroboration. This is the domain of what's being called Generative Engine Optimization, or GEO. Models weigh entity saturation across high-trust knowledge bases like Wikidata and Crunchbase, and review platforms like G2 and Trustpilot.

Your own blog saying you're the best doesn't count. Third-party validation from sources the model already trusts? That counts.

Content depth

Optimizing for the way AI engines extract answers is known as Answer Engine Optimization, or AEO. These engines prefer quotable canonicals: concise, declarative statements that can be lifted directly into an answer.

Content structured with FAQ and HowTo schema gives models exactly what they need. Clean definitions. Clear statements. Extractable facts. Long prose that buries the answer in paragraph seven? The model skips it.
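
As a minimal sketch of what FAQ schema looks like in practice, the snippet below builds a Schema.org FAQPage object as JSON-LD from question and answer pairs. The helper name `faq_jsonld` and the sample question are hypothetical. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity`, `name`, `acceptedAnswer`, and `text` properties come from the Schema.org vocabulary.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder content: one short, declarative answer per question.
faq = faq_jsonld([
    ("What is Acme CRM?",
     "Acme CRM is a customer relationship management platform for small businesses."),
])

print(json.dumps(faq, indent=2))
```

Each answer is a single, self-contained statement, which is the "quotable canonical" shape described above.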

Technical accessibility

Machine readability is non-negotiable. AI agents rely on Schema.org markup (Product, Offer, Organization) to parse details like price, availability, and ratings. If that structured data is missing, you're technically invisible to the reasoning engine.

It doesn't matter how good your content is if the machine can't read it.
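
For illustration, here is a small sketch that assembles Product, Offer, and Organization markup as JSON-LD and wraps it in the script tag a page would embed. Every value (brand name, price, rating, URL) is a placeholder, and `as_script_tag` is a hypothetical helper. Only the Schema.org type and property names are real.

```python
import json

# Placeholder values throughout; swap in your own verified facts.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Acme CRM",
    "description": "Acme CRM is a customer relationship management platform for small businesses.",
    "brand": {
        "@type": "Organization",
        "name": "Acme Inc.",
        "url": "https://www.example.com",
    },
    "offers": {
        "@type": "Offer",
        "price": "29.00",
        "priceCurrency": "USD",
        "availability": "https://schema.org/InStock",
    },
    "aggregateRating": {
        "@type": "AggregateRating",
        "ratingValue": "4.6",
        "reviewCount": "128",
    },
}


def as_script_tag(data: dict) -> str:
    """Wrap a JSON-LD object in the script tag used to embed it in HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


print(as_script_tag(product))
```

The point of the exercise is that price, availability, and ratings become explicit fields rather than facts a parser has to infer from page copy.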

Why Do Two Brands With Similar SEO Get Completely Different AI Results?

This is the question that should keep marketers up at night. Because it happens constantly.

Consider a pattern that plays out across industries. A SaaS platform has healthy SEO rankings but shows up in only 9% of AI answers. Meanwhile, incumbents in the same space get cited consistently. The SEO metrics look comparable. The AI outcomes are wildly different.

Why? Because AI models don't evaluate based on keyword volume. They evaluate how confidently they can interpret each brand relative to its competitors. The incumbents have entity saturation, structured data, and consistent descriptions across multiple trusted sources.

The smaller brand typically lacks all of that. So the model defaults to what it can verify.

When brands in this position build FAQ schema and publish authoritative external content, the results shift fast. I've seen inclusion rates triple. Not because the AI needed more keywords. It needed the brand to become machine-readable and authoritative.
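
To make "inclusion rate" concrete, one simple reading is the share of sampled AI answers that mention the brand at all. The sketch below assumes you have already collected answer texts for a fixed set of prompts. The function name, the sample answers, and the brand names are all hypothetical, and a plain mention match says nothing about whether the brand was actually recommended.

```python
import re


def inclusion_rate(answers: list[str], brand: str) -> float:
    """Fraction of answers that mention the brand (case-insensitive, whole phrase)."""
    if not answers:
        return 0.0
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    hits = sum(1 for answer in answers if pattern.search(answer))
    return hits / len(answers)


# Placeholder answers, standing in for responses gathered from AI assistants.
answers = [
    "For small teams, Acme CRM and OtherCo are solid choices.",
    "Most reviewers recommend OtherCo for its integrations.",
    "OtherCo and ThirdCo lead this category.",
    "Consider acme crm if you need a free tier.",
]

for name in ("Acme CRM", "OtherCo"):
    print(f"{name}: {inclusion_rate(answers, name):.0%}")
```

Running the same prompts before and after a round of schema and citation work gives a like-for-like comparison, as long as the prompt set stays fixed.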

How Does an AI Actually Choose Between Two "Best Tools"?

The reasoning flow looks something like this:

Retrieval. The user asks for the "best project management tool." The model scans its knowledge graph for entities tagged with that attribute.

Synthesis. It finds Brand A and Brand B. Brand A has consistent descriptions across G2, Wikipedia, and its own site. Brand B has conflicting pricing data and no third-party citations.

Reasoning. The model infers Brand A is a verified node with high trust signals. Brand B gets flagged as a potential hallucination risk due to data inconsistency.

Recommendation. The model generates: "I recommend Brand A because it is widely recognized for..." Brand B doesn't make the shortlist. Not because it's worse. Because it's unverifiable.

You're not competing for rank anymore. You're competing for trust in a reasoning engine.

Where Are Most Brands Failing Without Knowing It?

Most brands are invisible to AI not because they have bad products, but because they send weak signals. The worst part is they have no idea it's happening.

Inconsistent descriptions

Varying your boilerplate to "avoid repetition" is an SEO habit that kills AI visibility. Every variation dilutes the model's confidence in what you actually are. In the AI context, repetition isn't a penalty. It's a signal.

Missing corroboration

Relying solely on your own blog for authority fails in the GEO era. Without external validation from trusted nodes, models treat your claims as unverified. You need third-party sources confirming what you say about yourself.

Technical blind spots

Long walls of text without structure force the model to guess. Without Schema markup, your pricing and features are effectively invisible to the reasoning engine. You might have the best product page in the world. If it's not machine-readable, it doesn't exist to AI.

So What Do You Actually Do About This?

I've been through enough technology transitions to know that the brands who win aren't the ones with the biggest budgets. They're the ones who understand the new rules first and adapt fastest.

The new rules are clear.

Consistency beats creativity in entity representation. Save the creative copy for humans. Give machines clean, repeatable facts.

External validation beats self-promotion. Invest in being cited by sources AI models already trust. Your own content is necessary but not sufficient.

Structure beats volume. One well-structured page with proper schema will outperform ten blog posts that bury the answer.

Measurement beats assumption. You can't fix what you can't see. Right now, most brands have zero visibility into how AI models perceive them.

That last point is why we built Akii. We track how AI engines actually mention, cite, and recommend brands in real answers. Not how you rank in traditional search. How you show up when a user asks an AI for a recommendation.

The shift from search to synthesis isn't coming. It's here. The brands that measure it first will be the ones that own it.

Don't guess how AI agents perceive your business. See which signals AI models are using for your brand versus your competitors.