The Victory That Isn't One

A marketing lead types their brand into ChatGPT, gets a glowing recommendation, screenshots it, and drops it in Slack with a celebration emoji. AI strategy handled.

Except it isn't.

Across town, a potential buyer asks Claude the same question and gets a vague, noncommittal summary. An investor checks Perplexity for a vendor list and the brand doesn't appear. A competitor asks Gemini for a pricing comparison, and the AI invents numbers that kill the deal before it starts.

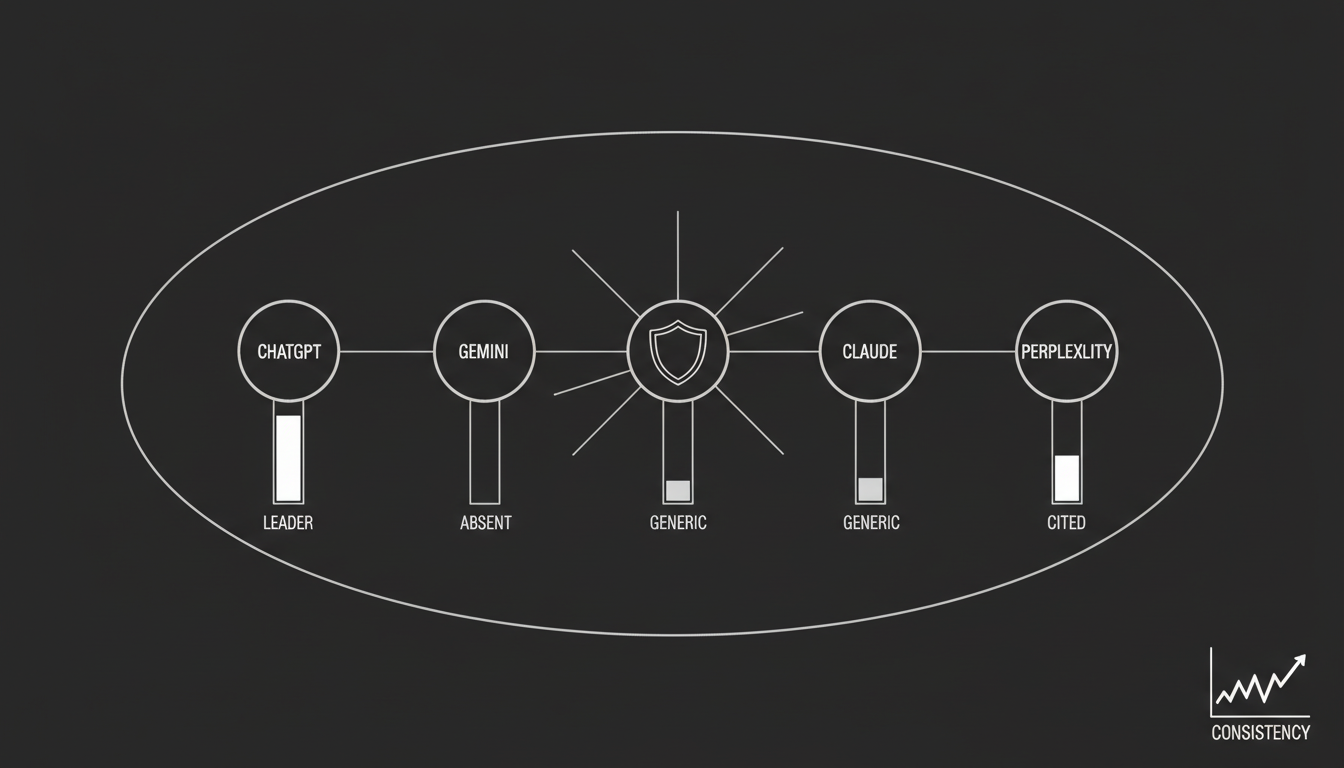

This is the Multi-Engine Visibility Gap. If you're only watching one AI engine, you're seeing a fraction of your actual market presence. I've watched this pattern play out dozens of times, and it almost never ends well for the team that thinks one good screenshot is enough.

Why Does This Feel So Different From Traditional Search?

In the Google era, we had something close to a single source of truth. Rank well on Google, and you generally ranked well on Bing. The signals were consistent. The playbook was shared.

That uniformity is gone.

Generative AI engines are built on different training data, use different retrieval architectures, and apply different risk tolerances. They don't just surface information differently. They construct reality differently.

Your brand can be a dominant market leader inside one model and a hallucination risk inside another. Not a theoretical risk. A thing that is happening right now to real companies losing real deals.

The most dangerous assumption in modern marketing is that AI visibility is binary. That you're either "in" the AI or "out." In practice, your brand reputation varies wildly depending on which tool a person happens to have open.

Here's what makes it a commercial problem rather than a technical curiosity: enterprise buying committees don't use a single tool. One stakeholder uses ChatGPT for initial research. Another uses Perplexity because they want citations. A third uses Gemini because it's wired into their Google Workspace. If your brand shows up inconsistently across those surfaces, it signals instability. It tells the buyer that consensus on your brand is weak, and weak consensus kills deals.

Why Do the Engines Disagree in the First Place?

This isn't a bug. It's a structural feature of how these systems are built. Understanding the reasons is the first step toward fixing the problem.

Different training data mixtures

Every model trains on a massive corpus of text, but the weighting differs significantly.

Gemini leans heavily on the Google ecosystem. It prioritizes information from the Google Knowledge Graph and Google Maps. If your Google Business Profile is weak, your Gemini visibility suffers.

ChatGPT casts a wider net. It rewards external quotability and community buzz from places like Reddit and forums, and it often surfaces mid-market brands that other models ignore entirely.

Different retrieval architectures

Perplexity is a transparency-first engine. It relies on live web retrieval and clickable citations. If your content isn't cited by high-authority news or review sites, Perplexity often excludes you.

Claude is more of an embedded-knowledge engine. It leans on its training data rather than live retrieval. That makes it harder to influence with quick fixes. It requires long-term authority building, full stop.

Different risk profiles

AI models have safety filters that dictate how assertive they can be. Claude is conservative. If there's any ambiguity about your brand, it defaults to a neutral summary or excludes you entirely to avoid being wrong. ChatGPT is more willing to make a definitive recommendation, even when the underlying data is thin.

Different citation behaviors

Some engines show their work. Others don't.

Google AI Overviews and Gemini often synthesize answers without clear attribution, making it hard to trace where the information came from. Perplexity provides citations for 91% of its answers, making it the best surface for diagnosing where your authority actually comes from.

When you see a gap between engines, treat it as a diagnostic signal. If you win on Perplexity but lose on Gemini, you likely have strong PR and citations but weak technical entities in the Knowledge Graph. That's a specific, fixable problem.

What Does the Gap Actually Tell You About Your Brand?

Once you accept that gaps are inevitable, you can use them to diagnose specific weaknesses. I think of these as four distinct gap types, each pointing to a different root cause.

The Recognition Gap

What it looks like: ChatGPT gives a detailed description of your brand. Claude says, "I don't have information on that."

What it means: Your entity saturation is low. You have enough buzz from social and blog content for ChatGPT to pick you up, but you lack the deep, authoritative footprint that conservative models require. Think Wikipedia, Wikidata, Crunchbase. Claude needs to verify you as a real node before it will talk about you.

The Understanding Gap

What it looks like: Perplexity correctly identifies you as an enterprise platform. Gemini categorizes you as a free tool.

What it means: You have an entity consistency problem. Your data on Google-favored sources like G2 or your own schema markup might conflict with data on other platforms. Gemini reads one signal. Perplexity reads another. Both are technically correct based on what they're seeing, and both are wrong about the full picture.

The Coverage Gap

What it looks like: You appear in "Best CRM" lists on ChatGPT but you're absent from the same list on Google AI Overviews.

What it means: You lack structured data. Google AI Overviews are highly responsive to FAQ and HowTo schema. If you haven't implemented this markup, Google's crawlers can't extract your brand for the list, even if your content is strong.

The Trust Gap

What it looks like: One engine recommends you as a market leader. Another frames you as a risky alternative.

What it means: This is a sentiment issue. One model might be ingesting recent negative reviews from Trustpilot that you haven't addressed, while another relies on older, positive training data. This gap is a leading indicator. If you don't fix it, the negative signal will eventually propagate to the other engines too.

Can't We Just Focus on the Biggest Engine?

I hear this constantly. And I understand the instinct. Resources are finite. Pick the biggest channel and win there.

In 2026, that's a strategic error. Here's why.

Narrative instability. If a potential investor checks your brand on three different AI tools and gets three different value propositions, your brand narrative collapses. You look undefined. You look risky. The investor moves on.

Competitive asymmetry. Your competitors are likely monitoring these gaps already. If they notice you're invisible on Perplexity, they can double down on PR and citations to lock you out of that channel. Gaps don't stay neutral. They get exploited.

Fragile positioning. Optimizing for only one engine means you're one algorithm update away from zero. If ChatGPT changes its retrieval logic tomorrow, your entire AI presence evaporates. Multi-engine visibility creates a diversified position that's much harder to disrupt.

The most common mistake I see when teams discover a gap is overfitting. They decide, "We need to win on ChatGPT," and flood the web with AI-generated content designed to trigger ChatGPT's retrieval. The problem? Strategies that work for ChatGPT, like high-volume conversational text, can actively hurt you on Claude, which penalizes fluff and prioritizes authoritative, substantive content.

You widen the gap. You spike in one engine and disappear from the others.

True improvement requires lifting the lowest common denominator of your brand signals, which means improving the fundamental data quality that all engines rely on.

How Do You Actually Measure This?

You can't close a gap you haven't measured. Here's the workflow I'd recommend, whether you do it manually or with tooling.

Step 1: Run a structured multi-model audit

Stop randomly searching your brand name. Build a real test.

Create a prompt basket. Select 10 high-priority prompts. Things like "Best [Category] for Enterprise," "What is [Brand Name]?", "[Brand] vs [Competitor]." These should reflect the actual questions your buyers ask.

Execute across five engines. Run identical prompts through ChatGPT, Gemini, Claude, Perplexity, and Google AI Overviews.

Record more than just presence. Don't just look for your name. Record the context. Were you included? What position? Leader or alternative? Was the information accurate? Did it get your pricing right?

This is manually intensive work. Platforms like the Akii AI Search Tracker automate this process, running prompt variations on a regular cadence and tracking the delta over time.
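If you run the audit by hand, it helps to generate the full prompt-by-engine grid up front so nothing gets skipped. The sketch below is one possible way to do that, not a description of how any tracking product works. The names `AuditRecord` and `build_audit_sheet`, and the brand, category, and competitor values, are hypothetical placeholders; the engines and fields come from the steps above.

```python
from dataclasses import dataclass
from itertools import product
from typing import Optional

# The five engines named in the audit step.
ENGINES = ["ChatGPT", "Gemini", "Claude", "Perplexity", "Google AI Overviews"]

# Three of the example prompt shapes; a real basket would hold about 10.
PROMPT_TEMPLATES = [
    "Best {category} for Enterprise",
    "What is {brand}?",
    "{brand} vs {competitor}",
]


@dataclass
class AuditRecord:
    """One row of the audit sheet: one prompt run on one engine."""
    engine: str
    prompt: str
    included: Optional[bool] = None         # was the brand mentioned at all?
    position: Optional[int] = None          # rank in a list, if there was one
    framing: Optional[str] = None           # "leader" or "alternative"
    accurate: Optional[bool] = None         # was the information correct?
    pricing_correct: Optional[bool] = None  # did it get pricing right?
    notes: str = ""


def build_audit_sheet(brand: str, category: str, competitor: str) -> list:
    """Return one empty record per (prompt, engine) pair, to be filled in by hand."""
    prompts = [
        t.format(brand=brand, category=category, competitor=competitor)
        for t in PROMPT_TEMPLATES
    ]
    return [AuditRecord(engine=e, prompt=p) for p, e in product(prompts, ENGINES)]


# Hypothetical brand and competitor names, for illustration only.
sheet = build_audit_sheet("ExampleCRM", "CRM", "OtherCRM")
```

Every record starts with its observation fields empty, which makes it obvious which prompt and engine combinations still need to be run.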

Step 2: Normalize and score

To compare across engines, assign a simple score to each output on a 0 to 3 scale.

- 0: Invisible or hallucinated.

- 1: Mentioned but generic. Shallow understanding.

- 2: Shortlisted. Accurate but not the top recommendation.

- 3: Recommended. Detailed, accurate, positive.

Then calculate the delta. If your average score on Perplexity is 2.5 but your average score on Gemini is 0.5, you have a 2.0 visibility gap. That's your priority fix.
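The arithmetic is simple enough for a spreadsheet, but here is a minimal sketch in code. The per-prompt scores are made up to reproduce the 2.5 versus 0.5 example, and the function names are illustrative assumptions.

```python
from statistics import mean

# Hypothetical 0-3 scores for four prompts per engine (illustrative data only).
scores = {
    "Perplexity": [3, 2, 3, 2],
    "Gemini": [1, 0, 1, 0],
}


def engine_averages(scores_by_engine: dict) -> dict:
    """Average the 0-3 scores for each engine."""
    return {engine: mean(values) for engine, values in scores_by_engine.items()}


def visibility_gap(averages: dict) -> tuple:
    """Return (strongest engine, weakest engine, difference between their averages)."""
    best = max(averages, key=averages.get)
    worst = min(averages, key=averages.get)
    return best, worst, averages[best] - averages[worst]


averages = engine_averages(scores)
best, worst, gap = visibility_gap(averages)
print(f"{best} {averages[best]} vs {worst} {averages[worst]}: gap {gap}")
```

With more than two engines in the dictionary, the same function reports the widest gap, which is the one to prioritize.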

Step 3: Close the Recognition Gap first

If you're invisible in one or more engines, this is your Tier 1 problem.

The fix: Unify your entity profile. Ensure your brand description is identical across your website, LinkedIn, Crunchbase, and Wikidata. This creates a master entity that even conservative models like Claude can verify. Consistency forces the model to accept your existence as a real, verified node.
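A quick way to check whether the descriptions really are identical is to compare them after normalizing case and whitespace. This is a rough sketch that assumes you have already copied each profile's description into a dictionary by hand; the sample text and function names are hypothetical.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return " ".join(text.lower().split())


def find_mismatches(descriptions: dict, canonical_source: str = "website") -> list:
    """Return the sources whose description differs from the canonical one."""
    canonical = normalize(descriptions[canonical_source])
    return [
        source
        for source, text in descriptions.items()
        if normalize(text) != canonical
    ]


# Hypothetical descriptions, pasted in manually from each profile.
descriptions = {
    "website": "ExampleCRM is an enterprise CRM platform for B2B sales teams.",
    "LinkedIn": "ExampleCRM is a free contact management tool.",
    "Crunchbase": "ExampleCRM is an enterprise CRM platform for B2B sales teams.",
    "Wikidata": "ExampleCRM is an enterprise  CRM platform for B2B sales teams.",
}

print(find_mismatches(descriptions))
```

Here only the LinkedIn entry is flagged. The Wikidata entry has a stray double space but passes, because the comparison ignores whitespace and case.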

Step 4: Fix the Understanding Gap

If engines know you but describe you incorrectly, like showing wrong pricing on Gemini, this is your Tier 2 problem.

The fix: Deploy structured data. Use Product and Offer schema. Explicitly tag your pricing, currency, and availability. Gemini and Google AI Overviews rely heavily on schema. If you feed them structured markup, they are far less likely to hallucinate and far more likely to quote you accurately. The Website Optimizer can help generate this markup automatically.
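For reference, Product and Offer markup is usually published as JSON-LD inside a script tag. The sketch below builds a minimal example using standard schema.org properties (`offers`, `price`, `priceCurrency`, `availability`). The product name, description, and price are hypothetical, and `product_jsonld` is an illustrative helper rather than part of any tool mentioned here.

```python
import json


def product_jsonld(name: str, description: str, price: float,
                   currency: str, in_stock: bool = True) -> dict:
    """Build a minimal schema.org Product with a single nested Offer."""
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",    # explicit price, two decimal places
            "priceCurrency": currency,  # ISO 4217 code such as "USD"
            "availability": availability,
        },
    }


# Hypothetical product details, for illustration only.
markup = product_jsonld(
    name="ExampleCRM Enterprise",
    description="Enterprise CRM platform for B2B sales teams.",
    price=99,
    currency="USD",
)

# Wrap the JSON-LD in the script tag that would go in the page's HTML.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Whatever generates the markup, the values should match the pricing shown on the visible page, otherwise you create a new consistency problem.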

Step 5: Fix the Trust Gap

If you're mentioned but not recommended, that's a sentiment problem. Tier 3.

The fix: Targeted authority building on the specific high-trust sources that the lagging engine prefers.

For Perplexity, focus on data-driven reports and PR coverage. Perplexity wants citations it can link to.

For Gemini, focus on Google signals: YouTube content, Google Maps reviews, Knowledge Panel accuracy.

For ChatGPT, focus on community buzz and broad content distribution. Reddit threads, forums, widely shared analysis.

Each engine has its own trust hierarchy. You have to feed each one what it's looking for.
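If you keep your average scores in a dictionary, the per-engine guidance above can be turned into a small lookup that names the lagging engine and where to focus. This is only a sketch: the mapping covers just the three engines listed above, and the scores and the `authority_plan` name are hypothetical.

```python
# Focus areas per engine, taken from the guidance above.
TRUST_SOURCES = {
    "Perplexity": ["data-driven reports", "PR coverage"],
    "Gemini": ["YouTube content", "Google Maps reviews", "Knowledge Panel accuracy"],
    "ChatGPT": ["Reddit threads", "forums", "widely shared analysis"],
}


def authority_plan(averages: dict) -> tuple:
    """Return the lowest-scoring engine and the sources to prioritize for it.

    Engines without an entry in TRUST_SOURCES get an empty list.
    """
    lagging = min(averages, key=averages.get)
    return lagging, TRUST_SOURCES.get(lagging, [])


# Hypothetical average scores on the 0-3 scale.
averages = {"ChatGPT": 2.4, "Perplexity": 2.1, "Gemini": 0.8}
lagging, focus = authority_plan(averages)
print(f"Lagging engine: {lagging}. Focus on: {', '.join(focus)}")
```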

Step 6: Monitor continuously

AI search is volatile. A gap can close on Monday and reopen on Friday because of a model update.

Set up continuous monitoring. Use the AI Brand Audit to track your cross-engine delta over time. If the gap widens suddenly, it usually means a model update or a new source has introduced an inaccuracy into a specific engine. Catching that early is the difference between a quick fix and a slow reputation leak.
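Whatever tool you use, the underlying check is a comparison of the cross-engine gap between two audit runs. Here is a minimal sketch of that comparison. The snapshot values are invented, and the 0.5 alert threshold is an arbitrary assumption you would tune to your own data.

```python
def gap(snapshot: dict) -> float:
    """Difference between the best and worst average score in one audit run."""
    return max(snapshot.values()) - min(snapshot.values())


def widened(previous: dict, current: dict, threshold: float = 0.5) -> bool:
    """True if the gap grew by at least `threshold` between two runs.

    The 0.5 default is an illustrative assumption, not a recommended value.
    """
    return gap(current) - gap(previous) >= threshold


# Hypothetical average scores from two consecutive audit runs.
last_week = {"ChatGPT": 2.5, "Gemini": 2.0, "Perplexity": 2.5}
this_week = {"ChatGPT": 2.5, "Gemini": 1.0, "Perplexity": 2.5}

if widened(last_week, this_week):
    print(f"Gap widened from {gap(last_week)} to {gap(this_week)}. Investigate.")
```

In this example the drop on Gemini pushes the gap from 0.5 to 1.5, which crosses the threshold and triggers the alert.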

What Does "Winning" Actually Look Like Here?

The goal isn't just to show up somewhere. Random visibility is vanity.

The goal is consistency.

When your brand appears with the same description, the same value proposition, and the same positive sentiment across ChatGPT, Gemini, Perplexity, and Claude, something real happens. You become a verified node in the global AI knowledge graph. Every engine reinforces the same story. Every buyer touchpoint confirms the same narrative.

I've been through enough technology cycles to know what durable advantage looks like. It's never about winning one channel. It's about building a position that holds across multiple surfaces, even as those surfaces shift underneath you.

In 2026, the most valuable asset a brand can possess is not a number one ranking on Google. It's a consistent, accurate, and confident representation in the reasoning engines that now mediate the world's information.

Knowing where you stand is the first step.

If you want to see how wide your visibility gap actually is, run a free multi-model scan to benchmark your brand across Gemini, ChatGPT, and Claude. Takes under two minutes.