Search Results Are Over. What Replaced Them Changes Everything.

That deal you made with search engines for the last twenty years? It's gone.

The deal was simple: optimize for keywords, earn clicks, show up in a list of ten blue links. Brands understood it. Agencies built entire practices around it. And for a long time, it worked.

I've watched platform shifts play out across multiple technology cycles. Desktop to mobile. Email to social. Each one felt like the ground moving. But what's happening right now with AI-driven search is structurally different from any of those. It's not a new channel sitting alongside the old ones. It's a replacement of the underlying mechanism that connects buyers to solutions.

We've moved from a world of search results to a world of synthesized answers. Users are skipping traditional search pages and turning to AI models like ChatGPT, Gemini, Claude, and Perplexity to answer questions, compare products, and make decisions. Recent industry analysis suggests 68.5% of web traffic is now influenced by AI search.

Most marketing teams are still tuning for Google's 2010 algorithm.

That gap is where brands are quietly disappearing.

Why Is "Ranking #1" No Longer the Goal?

Search is no longer about retrieving a list of URLs. It's about reasoning over information to produce a solution. That's a fundamentally different job, and it requires a fundamentally different response from brands.

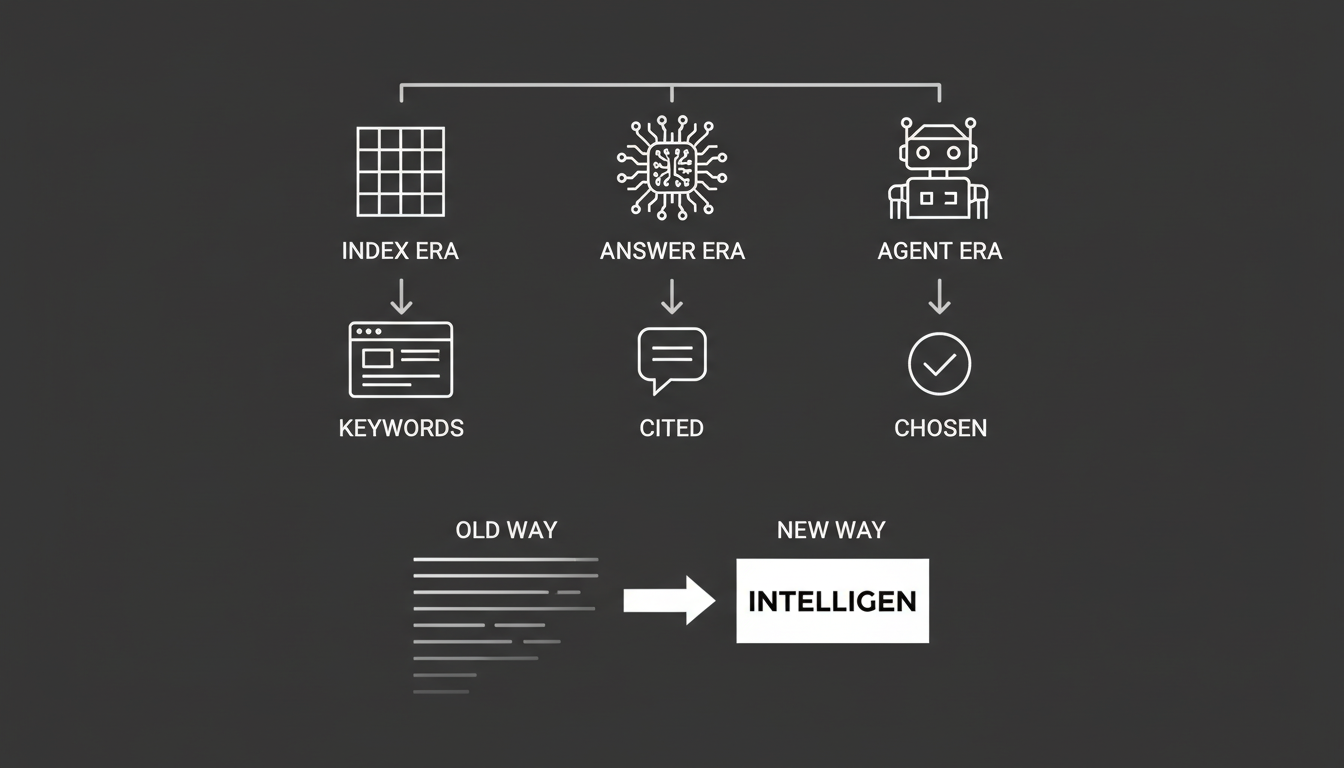

Here's the timeline, stripped down:

The Index Era (1998 to 2022): Search engines acted as librarians. They matched keywords in your query to keywords on a webpage. Success meant ranking in positions 1 through 10 and earning clicks.

The Answer Era (2023 to 2025): AI Overviews and Assistants started synthesizing information. Instead of sending users to a website, platforms like Google's AI Overviews and Perplexity generate a single answer and cite sources as footnotes. Success shifted to inclusion and share of voice.

The Agent Era (2026 and beyond): Autonomous agents don't just answer questions. They perform tasks. They research software, compare pricing, and book demos on behalf of users without a human clicking anything.

In this new reality, there is no page one in a conversation with a chatbot. There is only the answer. If your brand isn't cited in that answer, you're invisible.

I've seen this pattern before. When mobile took over, companies that didn't adapt their sites didn't just lose ranking. They lost relevance entirely. This shift is faster, and the consequences are more binary. You're either in the answer or you're not.

What's the Difference Between an AI Assistant and an AI Agent?

This distinction matters more than most marketers realize, because it changes how brands must structure their data.

AI Overviews and Assistants act as researchers. Tools like ChatGPT and Google Gemini currently function as super-powered research assistants. They scan the web, read reviews, compare pricing, and summarize findings. A user asks: "What is the best CRM for a small agency?" The model synthesizes data from G2, Reddit, and product pages to produce a shortlist of recommendations.

Autonomous Agents act as doers. A user says: "Book a demo with the CRM that fits my budget and integrates with Slack." The agent retrieves pricing via API, checks integration documentation, and interacts with a calendar booking tool. No browsing. No clicking. Just execution.

Here's what most people miss. Agents don't browse websites visually. They retrieve specific data points: pricing, stock status, API documentation, feature lists. They pull this from structured data and knowledge graphs. If your brand's information is locked in unstructured text or buried in PDFs, the agent can't parse it. It will bypass you in favor of a competitor whose data is machine-readable.

That's not a theoretical risk. It's happening now.

How Does AI Discovery Actually Change the Competitive Game?

The transition to AI-driven discovery changes competitive dynamics in two specific ways that are worth understanding clearly.

The Shortlist Problem

In traditional search, a user might browse 10 or 20 results across two pages. AI models don't work that way. They act as gatekeepers, filtering out noise and presenting only the most relevant options, typically three to five.

Being on page 2 of Google used to mean less traffic. In the AI era, being outside the top recommendations means zero visibility. Not reduced visibility. Zero.

What does that mean for any brand that's been comfortable sitting in positions 5 through 10? The middle of the pack just vanished.

The Verified Node Requirement

AI models are built to be risk-averse. They're designed to avoid hallucinations, meaning invented facts. To manage that risk, they prioritize what you might call "verified nodes" in their knowledge graph: entities whose facts agree across the sources the model draws on.

If your brand data is inconsistent, say your pricing differs between your website and your LinkedIn profile, the model detects a conflict. To avoid being wrong, it filters you out.

You don't get penalized. You don't get a warning. You just don't exist in the answer.

Visibility is no longer about keyword volume. It's about entity clarity. The brands that get chosen are the ones that provide the cleanest, most consistent signals to the machine.

What Does It Mean to Be "Chosen" Instead of "Ranked"?

Understanding this requires a genuine mindset shift. We're moving from SEO (Search Engine Optimization) to what's increasingly called GEO (Generative Engine Optimization).

Traditional search engines are indexes. They match strings of text. AI models are reasoning engines. They infer meaning and relationship.

When an AI model selects a brand to recommend, it runs something like a rapid four-part interrogation:

Who are you? Are you a distinct, recognized entity in the knowledge graph? Can the model differentiate you from similarly named companies or products?

What do you do? Does the model accurately classify your product category? If it thinks you're a social media tool when you're actually a CRM, you won't show up for CRM queries.

Can you be trusted? Do trusted third-party sources like industry analysts or major review platforms corroborate your claims? Or is the only evidence your own marketing copy?

Are you relevant? Do you solve the specific problem the user asked about? Not generally. Specifically.

Fail any one of those checks and you're out. Not demoted. Out.

Most people are framing this wrong. They treat AI visibility as a new marketing channel to add to their mix. It's not a channel. It's the new default interface for how people find and evaluate solutions. If you're not visible here, the other channels matter less than you think.

So What Should Brands Actually Do Right Now?

Enough theory. To move from invisible to indispensable, you need to execute across three areas: Structured Truth, Continuous Monitoring, and External Defense.

Step 1: Establish Structured Truth

This is the foundation. Answer Engine Optimization (AEO) is the practice of making your content machine-readable so AI agents can extract it instantly.

Deploy Schema Markup. Translate your content into the language of AI. Use Organization, Product, and Offer schema to explicitly tag your pricing, features, and availability.

Why this matters: when an agent is looking for "pricing," it looks for the price property in your Offer markup, not the visual text on your page. If you haven't tagged it, the agent may never see it.
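To make that concrete, here's a minimal sketch of generating Product and Offer markup as JSON-LD with Python. The type and property names (Product, Offer, Organization, price, priceCurrency, availability) come from schema.org. Everything else is an assumption for illustration: the helper name build_product_jsonld, the brand "Brightdesk," the URL, and the price are all hypothetical.

```python
import json


def build_product_jsonld(name, description, price, currency, in_stock, org_name, org_url):
    """Return a schema.org Product with a nested Offer and Organization as a dict."""
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "brand": {"@type": "Organization", "name": org_name, "url": org_url},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # plain decimal string, no currency symbol
            "priceCurrency": currency,  # ISO 4217 code such as "USD"
            "availability": availability,
        },
    }


# Hypothetical product data, for illustration only.
markup = build_product_jsonld(
    name="Brightdesk Starter",
    description="A CRM for small agencies.",
    price=49,
    currency="USD",
    in_stock=True,
    org_name="Brightdesk",
    org_url="https://www.example.com",
)

# Wrap the JSON-LD in the script tag that goes into the page's HTML.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Generating the markup from the same data that drives the visible page is one way to keep the tagged price and the displayed price from drifting apart.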

Create Quotable Canonicals. AI models extract answers by looking for concise summaries. Structure your high-traffic pages with "TL;DR" sections and question-based headings like "What is [Product]?" followed immediately by a direct answer.

Why this matters: this increases the probability that the AI will lift your exact sentence as the definition. You're essentially writing the answer you want the model to give.

Unify Your Entity Profile. Create a master entity profile with one unified description, one taxonomy, and one boilerplate. Replicate this exact text across your website, LinkedIn, Crunchbase, and Wikidata.

Why this matters: consistency forces the model to accept your definition as the ground truth. Inconsistency creates doubt, and doubt means exclusion.
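A consistency check like this can be partly automated. Below is a minimal sketch, assuming you've already collected your profile fields from each platform by hand or by export. The source names, field names, and values are hypothetical, and find_conflicts is an illustrative helper, not part of any library.

```python
import re


def normalize(text):
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return re.sub(r"\s+", " ", text).strip().lower()


def find_conflicts(profiles):
    """Return {field: {normalized value: [sources]}} for every field whose values disagree."""
    by_field = {}
    for source, fields in profiles.items():
        for field, value in fields.items():
            by_field.setdefault(field, {}).setdefault(normalize(value), []).append(source)
    # A field is in conflict when more than one distinct value was seen for it.
    return {field: values for field, values in by_field.items() if len(values) > 1}


# Hypothetical profile data, for illustration only.
profiles = {
    "website": {
        "description": "Brightdesk is a CRM for small agencies.",
        "starting_price": "$49/month",
    },
    "linkedin": {
        "description": "Brightdesk is a CRM for  small agencies.",  # extra space only
        "starting_price": "$39/month",  # stale price
    },
    "crunchbase": {
        "description": "Brightdesk is a CRM for small agencies.",
        "starting_price": "$49/month",
    },
}

conflicts = find_conflicts(profiles)
for field, values in conflicts.items():
    print(field, values)
```

Here the descriptions match once whitespace is normalized, so only the price is flagged: LinkedIn says $39/month while the website and Crunchbase say $49/month. That's the same kind of pricing mismatch described earlier as grounds for being filtered out.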

Step 2: Roll Out Continuous Monitoring

You can't manage what you don't measure. Traditional rank trackers are blind to AI visibility because there are no "positions" in a chat interface. That's a real problem.

Run Prompt-Based Audits. You need to track how your brand appears across ChatGPT, Gemini, Claude, and Perplexity. This is exactly the kind of problem Akii was built to solve. Specifically, you need to know three things:

- Brand Recognition: How often are you mentioned?

- Brand Understanding: Is the model describing you accurately?

- Sentiment: Is the tone positive or negative?
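To show what the first two measurements can look like in practice, here's a rough sketch that scores a batch of saved AI answers. It assumes you've already collected the responses by running the same prompts against each model. The brand names and response texts are hypothetical, the keyword check is a crude stand-in for "described accurately," and this is not how Akii or any particular tool computes its scores. Sentiment is left out on purpose, since it needs human review or a dedicated classifier.

```python
import re


def audit(brand, category_terms, responses):
    """Score saved AI answers for brand recognition and a rough accuracy check.

    recognition:   share of responses that mention the brand at all
    understanding: share of those mentions that also use one of the category terms
    """
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    mentioned = [text for text in responses if pattern.search(text)]
    accurate = [
        text
        for text in mentioned
        if any(term.lower() in text.lower() for term in category_terms)
    ]
    return {
        "recognition": len(mentioned) / len(responses) if responses else 0.0,
        "understanding": len(accurate) / len(mentioned) if mentioned else 0.0,
    }


# Hypothetical saved answers to "What is the best CRM for a small agency?"
responses = [
    "For a small agency, Brightdesk is a solid CRM with Slack integration.",
    "Brightdesk is a social media scheduling tool.",
    "Popular options include Altavo and Pipeloop.",
    "Top picks: Altavo, Brightdesk, and Pipeloop are all CRM tools.",
]

result = audit("Brightdesk", ["crm"], responses)
print(result)
```

In this sample the brand shows up in three of four answers, but one of those three misclassifies it as a social media tool. That second number is the one most teams never look at.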

We built Akii's AI Brand Audit capability specifically because we saw that most companies had no idea how AI engines were representing them. The gap between what brands think AI says about them and what AI actually says is consistently shocking.

Track Competitor Movements. See who the AI recommends when you aren't cited. Often you'll find brands you didn't know existed but that the AI favors because they have better structured data. The competitor beating you in AI answers isn't always the one you're watching in your market. Sometimes it's a smaller player who simply made their data cleaner.
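A simple version of that competitor check is sketched below, again over saved responses. It assumes you supply a list of brand names to look for, so it won't surface brands you've never heard of; that would take entity extraction, which this sketch doesn't attempt. All brand names and response texts are hypothetical.

```python
import re
from collections import Counter


def competitors_when_absent(brand, known_brands, responses):
    """Count which known brands are named in responses that do not mention ours."""

    def mentions(name, text):
        return re.search(r"\b" + re.escape(name) + r"\b", text, re.IGNORECASE) is not None

    tally = Counter()
    for text in responses:
        if mentions(brand, text):
            continue  # we were cited here, so this answer is not a loss
        for other in known_brands:
            if other != brand and mentions(other, text):
                tally[other] += 1
    return tally


# Hypothetical saved answers, for illustration only.
responses = [
    "Popular options include Altavo and Pipeloop.",
    "Brightdesk and Altavo both fit that budget.",
    "Altavo is the usual pick for small agencies.",
]

tally = competitors_when_absent("Brightdesk", ["Brightdesk", "Altavo", "Pipeloop"], responses)
print(tally.most_common())
```

Sorting by count shows who fills the gap most often when you're missing from the answer.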

Step 3: Build a Defense with GEO

Generative Engine Optimization focuses on the external authority required for an AI to trust you.

Secure External Corroboration. AI models rely on high-trust nodes to validate claims. Secure mentions in authoritative media, academic journals, or major review platforms like G2 and Trustpilot. Your own claims are inputs. Third-party validation is evidence. Models weight them very differently.

Correct Hallucinations. If monitoring reveals that an AI model is misrepresenting your pricing or features, you need to engineer the correction. This means systematically publishing correct, structured information in the places those models draw from: your own marked-up pages, your third-party profiles, and the review platforms that corroborate you. It's not a one-time fix. It's an ongoing process.

This is where most brands are completely asleep. They don't even know the AI is getting their product wrong, let alone have a process for fixing it.

Is This Really Happening Now, or Is It Still Theoretical?

It's happening now. I want to be direct about this because I've seen too many "future of" articles that give teams permission to wait.

If 68.5% of web traffic is already influenced by AI search, as that analysis suggests, this isn't a trend to watch. It's the current state of play. Every month you spend optimizing exclusively for traditional search while ignoring AI visibility is a month your competitors can use to establish themselves as the default answer.

The brands winning this shift aren't doing anything exotic. They're doing the basics of the new era: clean data, consistent entity profiles, structured content, and continuous monitoring of how AI represents them.

If you want to see where your brand stands right now, Akii's platform tracks how AI engines mention, describe, and recommend brands across the major models. It's the starting point for understanding whether you're in the answer or out of it.

Where Does This Go From Here?

The trajectory is clear. AI agents will get more capable. They'll handle more of the research, comparison, and decision-making that humans currently do manually. The window between early adopter advantage and table stakes is shrinking fast.

In 2010, you won by having the most backlinks. In 2026, you win by being the most machine-readable, consistent, and authoritative entity in the knowledge graph.

The companies that structure their truth and monitor their AI reputation now will be the ones chosen by the agents making decisions on behalf of their customers tomorrow.

The ones that don't won't even know they've been filtered out. That's the part that should keep you up at night. In traditional search, you could at least see your ranking drop. In AI answers, you just disappear. No notification. No report showing a decline. Just silence.

Don't wait for the silence to get your attention. By then, the answer has already been written without you.