Why the Traditional SEO Workflow Is Breaking

Most marketing teams are running a workflow built for a world that no longer exists.

The standard model goes like this: plan a campaign, publish content in batches, check rankings in 90 days, adjust, repeat. That worked when Google was the only game in town and the rules stayed relatively stable for years at a time.

AI search doesn't work on your quarterly calendar. It doesn't wait for your next content sprint, and it doesn't reward the same things traditional search did.

Three assumptions are quietly falling apart inside most marketing operations right now.

Campaign-based thinking. Teams plan content in bursts tied to product launches, seasonal pushes, or editorial calendars. The problem is that AI engines pull answers from your content every day, not just during launch week. If your information is stale or your narrative is unclear between campaigns, you're invisible in the moments that matter most.

Quarterly reporting cycles. By the time you pull a quarterly report on how AI engines are referencing your brand, the answers have already shifted multiple times. Quarterly is fine for board decks. It's useless for managing how you show up in AI-generated responses.

Static ranking assumptions. Traditional SEO trained us to think in positions. You're number three for this keyword, number one for that one. AI answers don't have positions. They have narratives. Your brand is either part of the answer or it isn't, and that can change from one day to the next with no warning.

If your team is still operating on these assumptions, you're not behind by a quarter. You're behind by a fundamental shift that's already happened.

How AI Search Changes Marketing Operations

What's actually different about managing visibility in AI search? It comes down to a few operational realities that most teams haven't internalized yet.

Continuous answer evolution. AI engines don't update their results on a predictable crawl schedule. The answers they generate are fluid. They pull from different sources, weight different signals, and restructure their responses constantly. What ChatGPT says about your category today might be different tomorrow, not because you did anything, but because someone else did, or because the model reweighted its sources.

Visibility isn't something you check. It's something you watch.

Multi-engine variability. It's not just ChatGPT. It's Perplexity, Gemini, Claude, Copilot, and whatever launches next quarter. Each one has different training data, different retrieval methods, and different tendencies in how it constructs answers. Your brand might show up accurately in one and be completely absent from another.

Are you tracking all of them? Most teams aren't tracking any of them.
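Here's a rough sketch of what a first pass at that tracking could look like. It assumes you already have some way to collect each engine's answer text for a given question. The sample answers, the brand name "Acme Analytics", and the function name `brand_coverage` are all invented for illustration.

```python
import re


def brand_coverage(answers: dict[str, str], brand: str) -> dict[str, bool]:
    """Return, per engine, whether the brand name appears in that engine's answer."""
    # Case-insensitive whole-phrase match; re.escape guards against special characters.
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    return {engine: bool(pattern.search(text)) for engine, text in answers.items()}


# Hypothetical answers to one category question, one entry per engine.
answers = {
    "ChatGPT": "Popular options include Acme Analytics and Northwind.",
    "Perplexity": "Northwind and Contoso are the most cited tools.",
    "Gemini": "Teams often choose acme analytics for reporting.",
}

coverage = brand_coverage(answers, "Acme Analytics")
missing = [engine for engine, present in coverage.items() if not present]

print(coverage)
print("Absent from:", missing)
```

A name match is a crude signal. It tells you whether you're in the answer, not whether the answer describes you accurately, so treat it as a starting point rather than a full picture.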

Narrative influence before traffic. This is the one that trips people up most. In traditional search, the goal was traffic. Get the click. In AI search, the influence happens before anyone clicks anything. The AI engine tells the user what to think about your category, your product, your brand. If you're not part of that answer, you've lost the moment before it ever becomes a visit.

That means the operational focus has to shift from "how do we rank" to "how are we being represented." Different question. Different workflow. Different team structure.

The New AI Visibility Operating Model

I've spent 25 years watching marketing teams adapt to technology shifts. The teams that win aren't the ones with the best tools or the biggest budgets. They're the ones that build the right operational loop first, then figure out the tools.

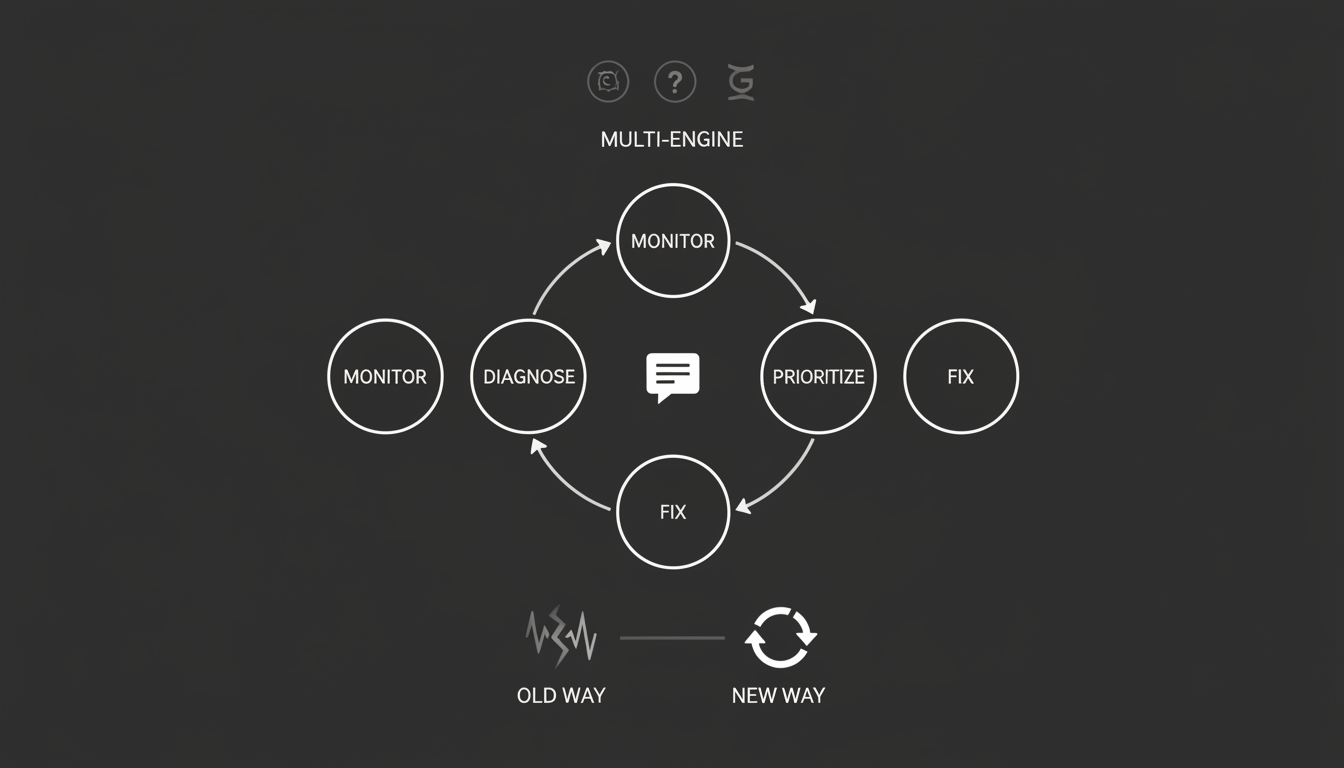

For AI visibility, that loop has five stages. Simple to describe. Hard to execute consistently.

Monitor. Track how AI engines are answering questions in your category. Not once a month. Continuously. What are they saying about you? What are they saying about your competitors? What are they getting wrong? This is the foundation. Without it, everything else is guesswork.

Akii built its AI search tracking capabilities specifically for this stage, because most existing tools simply weren't designed to monitor AI-generated answers across multiple engines.

Diagnose. When you spot a problem, figure out why. Is the AI pulling from outdated content? Is a competitor's narrative stronger? Is your product positioning unclear in the source material the AI is drawing from? Diagnosis is the stage most teams skip. They jump straight to action and waste effort fixing the wrong thing.

Prioritize. You can't fix everything at once. Which answers matter most to your business? Which misrepresentations are costing you the most? Where is a competitor gaining ground in the AI narrative that directly affects your pipeline? Prioritization turns monitoring data into a focused plan.

Fix. This is where content, technical SEO, and product marketing converge. Fixing might mean updating a key page or publishing new content designed to correct a specific AI misrepresentation. It might mean restructuring how your product information is organized so AI engines can parse it more clearly.

Reinforce. The fix isn't the finish line. You need to verify the correction took hold. Did the AI answer actually change? Did it stick? Reinforcement means closing the loop and confirming the outcome before moving on.

Monitor. Diagnose. Prioritize. Fix. Reinforce. Then start again.

This isn't a project. It's a practice. Teams that treat it like a one-time audit will fall behind teams that treat it like an ongoing operating rhythm.
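To make the Prioritize stage less abstract, here's one possible way to rank open issues. It's a sketch under stated assumptions: the 1 to 5 scales, the impact-times-severity score, and the `Issue` and `prioritize` names are illustrative choices, not a standard method. Your team would assign the numbers based on its own pipeline data.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    question: str          # the category question the AI engine was asked
    business_impact: int   # 1 (low) to 5 (high), assigned by the team
    severity: int          # 1 (minor wording) to 5 (wrong or absent)


def prioritize(issues: list[Issue], top_n: int = 3) -> list[Issue]:
    """Rank issues by impact x severity and keep the top few to work on."""
    ranked = sorted(
        issues,
        key=lambda issue: issue.business_impact * issue.severity,
        reverse=True,
    )
    return ranked[:top_n]


# Hypothetical issues surfaced by monitoring.
issues = [
    Issue("best tool for mid-size teams", business_impact=5, severity=4),
    Issue("history of the category", business_impact=2, severity=5),
    Issue("pricing comparison", business_impact=4, severity=3),
    Issue("founder trivia", business_impact=1, severity=1),
]

for issue in prioritize(issues):
    print(issue.business_impact * issue.severity, issue.question)
```

The exact formula matters less than having one. A shared score gives SEO, product marketing, and content the same short list to work from.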

How Teams Should Divide Responsibility

One of the biggest mistakes I see is treating AI visibility as a single person's job. It usually gets dumped on the SEO lead, which makes sense on the surface but falls apart in practice.

Why? Because AI visibility sits at the intersection of disciplines that no single role fully covers.

SEO and technical signals. Your SEO team owns the structural foundation: site architecture, schema markup, crawlability, structured data. These still matter. AI engines still need to find and parse your content. But good technical SEO gets you into the pool of sources. It doesn't guarantee you're part of the answer.

Product marketing and narrative clarity. This is where most teams have a gap. The way your product is described, positioned, and differentiated in your content directly affects how AI engines represent you. If your messaging is vague, the AI's answer will be vague. If your competitor's positioning is crisper, the AI will favor their framing.

Product marketing needs to be involved in AI visibility work. Not as a reviewer at the end. As a partner from the start.

Content and reinforcement. Your content team is responsible for the ongoing reinforcement cycle. Publishing material that corrects misrepresentations. Updating existing pages that AI engines are pulling from. Creating content specifically designed to shape how AI answers are constructed in your category.

This isn't content marketing as most teams practice it. It's not about driving organic traffic through blog posts. It's about shaping the information environment that AI engines draw from.

The question every marketing leader should ask: do these functions actually coordinate on AI visibility today? In most organizations I've talked to, the honest answer is no. They operate in parallel, not together.

That has to change, and it has to change with clear ownership and shared metrics, not a Slack channel and good intentions.

Building an Operational Rhythm

The operating model only works if it runs on a consistent cadence. Here's what that looks like in practice.

Weekly reviews. Once a week, someone on the team should review how AI engines answered the most important questions in your category. Did anything change? Did a competitor appear where they weren't before? Did an AI engine start getting something wrong about your product?

This doesn't need to be a two-hour meeting. Fifteen minutes with the right data is enough. The AI visibility metrics framework we've written about gives you a structure for what to look at and what to ignore.

Competitive monitoring. Your competitors are doing this work too, whether they realize it or not. Every piece of content they publish, every product page they update, every press mention they earn potentially changes how AI engines answer questions in your shared category.

You need to track not just your own AI visibility, but your competitors'. Not obsessively. Consistently enough that you see shifts before they compound.

Change detection. This is the operational backbone. You need a system that flags when something meaningful changes in how AI engines represent your brand or category. Not every fluctuation matters, but the ones that do need to surface quickly.

If you're waiting for someone to manually check AI answers and compare them to last week, you've already lost the speed advantage. This is where tooling matters. Akii's monitoring and action capabilities were built to detect these changes and connect them to the prioritization and fix stages of the operating model.
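For a sense of what automated change detection involves, here's a minimal sketch using only Python's standard library. It is not a description of how any particular product works. The 0.8 similarity threshold, the sample answers, the brand name, and the `detect_change` function are assumptions made for illustration.

```python
from difflib import SequenceMatcher


def detect_change(previous: str, current: str, brand: str, threshold: float = 0.8) -> dict:
    """Compare two snapshots of an AI answer and flag meaningful changes."""
    prev, curr = previous.lower(), current.lower()
    # ratio() is 1.0 for identical text and falls toward 0.0 as the texts diverge.
    similarity = SequenceMatcher(None, prev, curr).ratio()
    was_mentioned = brand.lower() in prev
    is_mentioned = brand.lower() in curr
    return {
        "similarity": round(similarity, 2),
        "brand_dropped": was_mentioned and not is_mentioned,
        "brand_added": is_mentioned and not was_mentioned,
        # Flag when the wording shifted a lot or the brand's presence flipped.
        "flag": similarity < threshold or was_mentioned != is_mentioned,
    }


# Hypothetical snapshots of the same question, one week apart.
last_week = "Acme Analytics and Northwind are the leading options for mid-size teams."
this_week = "Northwind and Contoso are the leading options for mid-size teams."

result = detect_change(last_week, this_week, "Acme Analytics")
print(result)
```

Text similarity won't catch every shift in meaning, and the threshold needs tuning against real answers. The point is that the comparison runs on its own, so a person only looks at what got flagged.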

The rhythm should feel like this: review weekly, act on what changed, verify that your fixes took hold, keep the cycle turning. It's not glamorous. It's operational discipline applied to a new problem.

What This Actually Looks Like When It's Working

Most teams reading this are not going to build all of it next week. That's fine.

The teams that get this right start small. They pick three to five questions that matter most to their business. They start monitoring how AI engines answer those questions. They assign clear ownership across SEO, product marketing, and content. Then they build the weekly review habit before they worry about scaling.

The shift from monitoring to action is where most teams stall. They collect data but don't have a clear process for turning it into decisions. The operating model I've described here is designed to close that gap.

Here's what I know from watching technology cycles play out over and over: teams that build operational discipline early don't just adapt. They set the pace. Everyone else spends the next two years trying to catch up.

AI visibility isn't a feature you bolt onto your existing marketing workflow. It's a new workflow. Treat it that way and you'll build a structural advantage that compounds over time.

Start the loop. Keep it turning. That's the whole strategy.