From Tools to Systems: Why Point Solutions Are Already Failing

Most companies tracking their AI visibility today are doing it with tools. A dashboard here, a reporting layer there, maybe a manual audit every quarter.

That's not a system. That's fragments pretending to be one.

I've watched this pattern repeat across every major technology shift I've lived through. Early adopters cobble together point solutions. They get some visibility. They feel like they're ahead. Then the market matures, complexity compounds, and the patchwork falls apart.

We're at that exact moment with AI brand intelligence.

The problem with point solutions isn't that they don't work. It's that they don't connect. Your monitoring tool doesn't talk to your content team. Your content team doesn't know what the AI models are actually saying about you right now. Your executive team gets a report once a month that's already stale by the time it lands.

What's missing isn't more tools. It's a closed loop. Monitoring that feeds directly into execution, and execution that feeds back into monitoring. A system that doesn't just tell you what happened but acts on what's happening.

This is where AI brand intelligence stops being a nice-to-have and becomes operational infrastructure.

Why Is Continuous Intelligence a Competitive Advantage?

Here's the question worth sitting with: if your competitor knows how they're being represented in AI answers every single day, and you check once a quarter, who wins over 18 months?

It's not close.

The shift from periodic reporting to continuous intelligence is the same shift that happened in cybersecurity, in DevOps, in financial risk monitoring. Every domain that matters eventually moves from "check it sometimes" to "know it always." AI brand representation is no different.

The models that answer questions about your industry, your products, and your competitors are updating constantly. Training data shifts. Retrieval sources change. The way a model frames your brand in a response today might be completely different from last week.

If you're treating this like a campaign, you're already behind. Campaigns have start dates and end dates. Infrastructure runs continuously.

I wrote about this distinction in an earlier piece on why AI visibility is not a dashboard. The core idea is simple: dashboards show you the past. Infrastructure gives you the present and a path to act on it.

The companies that will own their AI narrative over the next five years aren't the ones running the best one-off audits. They're the ones building continuous intelligence into how they operate.

What Does Policy-Governed Activation Actually Look Like?

This is where the conversation gets practical, and where most people's mental model breaks down.

When I say "closed-loop," I don't mean a human reviews a report and then emails the content team. That's an open loop with a person in the middle hoping nothing falls through the cracks.

A real closed loop has two things: guardrails and deterministic triggers.

Guardrails define the boundaries. What's acceptable? What's not? What does your brand policy say about how you should be represented in AI-generated answers? These aren't vague brand guidelines. They're specific, measurable conditions.

For example: "If our brand is mentioned alongside competitor X in a way that implies we're a subset of their offering, that's a policy violation." Or: "If our product is described using outdated pricing or discontinued features, that triggers a correction workflow."

Deterministic triggers are the rules that fire when those conditions are met. Not "maybe someone should look at this." Not "flag for review." An actual, predefined response. Update this content. Publish that correction. Adjust the structured data. Notify this team.

The word "deterministic" matters. In a world full of probabilistic AI outputs, the response layer needs to be predictable and governed. You don't want your correction system hallucinating any more than you want the AI models doing it.
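To make the guardrail-plus-trigger idea concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Observation` and `PolicyRule` types, the action names, the `CURRENT_PRICE` value, and the "Acme" brand in the usage note are hypothetical, not a real product API. The substring check for competitor framing is a deliberately naive stand-in for whatever detection you would actually use. The point is the shape: each rule pairs a measurable condition with a response fixed in advance, so the same observation always produces the same actions.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


# Hypothetical types for illustration only.
@dataclass(frozen=True)
class Observation:
    model: str                          # which AI model produced the answer
    answer_text: str                    # what the model said about the brand
    stated_price: Optional[str] = None  # price quoted in the answer, if any


@dataclass(frozen=True)
class PolicyRule:
    name: str
    violated: Callable[[Observation], bool]  # the measurable condition (guardrail)
    action: str                              # the predefined response (trigger)


CURRENT_PRICE = "$49/month"  # assumed source-of-truth value

RULES = [
    PolicyRule(
        name="outdated_pricing",
        violated=lambda o: o.stated_price is not None
        and o.stated_price != CURRENT_PRICE,
        action="open_pricing_correction_workflow",
    ),
    PolicyRule(
        name="framed_as_competitor_subset",
        # Naive placeholder check; real detection would need to be more robust.
        violated=lambda o: "a feature of competitor x" in o.answer_text.lower(),
        action="notify_positioning_team",
    ),
]


def evaluate(obs: Observation) -> List[str]:
    """Return the actions to fire, in rule order. Same input, same output."""
    return [rule.action for rule in RULES if rule.violated(obs)]
```

Calling `evaluate` on an observation whose answer text says "Acme is a feature of Competitor X" and quotes "$39/month" returns both actions, in rule order. An observation that violates neither rule returns an empty list, which means nothing fires.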

This is what separates monitoring from intelligence. Monitoring tells you something changed. Intelligence tells you what changed, whether it matters based on your policies, and what to do about it.

How Do Snapshots Become an Action Loop?

I've been thinking about brand state snapshots as the foundational data layer for a while now. Here's why they matter more than people realize.

A snapshot captures how AI models represent your brand at a specific point in time. Not what your website says. Not what your press release says. What the AI actually says when someone asks about you.

One snapshot is interesting. A sequence of snapshots is intelligence. Connect that sequence to automated responses and you have infrastructure.

Think of it like version control for your brand's AI presence. Every snapshot is a commit. You can see what changed, when it changed, and in what direction. You can diff two snapshots and immediately understand whether your position improved or degraded.
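As a rough illustration of the version-control analogy, the sketch below diffs two snapshots. It assumes a snapshot can be reduced to a flat mapping from attribute to what the model said, which is a simplification; the `Snapshot` type and `diff_snapshots` function are hypothetical names, and the sample values in the usage note are made up.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Snapshot:
    taken_at: str           # ISO date the snapshot was captured
    claims: Dict[str, str]  # attribute -> what the AI model said about it


def diff_snapshots(
    old: Snapshot, new: Snapshot
) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Map each changed attribute to (before, after).

    None on either side means the attribute was absent in that snapshot.
    """
    changes = {}
    for key in sorted(set(old.claims) | set(new.claims)):
        before = old.claims.get(key)
        after = new.claims.get(key)
        if before != after:
            changes[key] = (before, after)
    return changes
```

Given one snapshot with `pricing` set to "$49/month" and a later one with `pricing` set to "$39/month" plus a new `founded` attribute, the diff contains exactly those two keys. Unchanged attributes are left out, the same way a commit diff only shows what moved.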

Now connect that to the policy layer I just described. When a new snapshot detects drift that violates your defined thresholds, the system doesn't wait for a human to notice. It triggers the appropriate response.

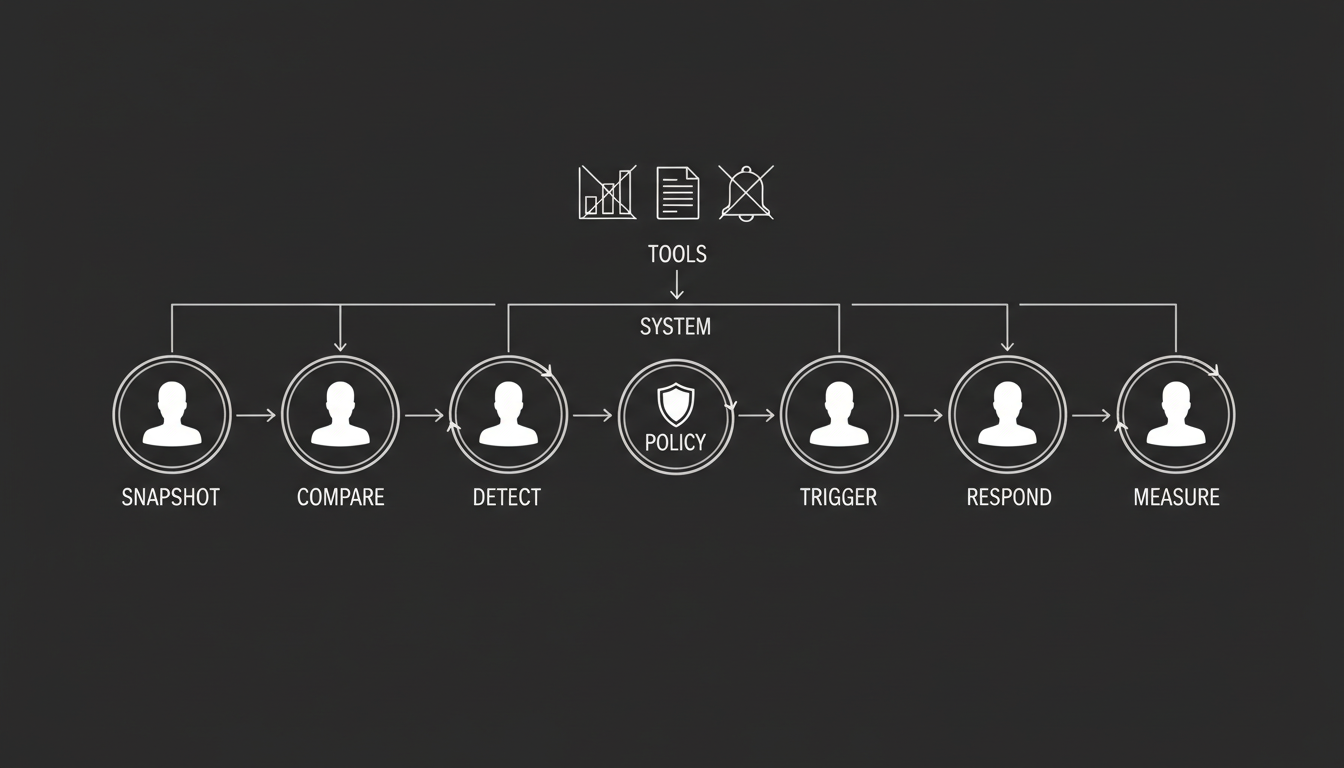

This is the action loop:

- Capture the current state (snapshot)

- Compare against policy and historical baseline

- Detect drift or violation

- Trigger the governed response

- Capture the next state

- Measure whether the response worked

That's the AI visibility flywheel in practice. Each cycle improves your position and sharpens your understanding of what actually moves the needle.
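The six steps above can be sketched as a single cycle function. This is a simplified illustration under stated assumptions, not a description of any shipped implementation: `capture` and `respond` are hypothetical callbacks you would supply, the comparison is against policy only (a historical baseline is left out to keep it short), and in practice the second capture would happen after enough time for the response to reach the models rather than immediately.

```python
from typing import Callable, Dict, List

State = Dict[str, str]  # attribute -> what the AI model currently says


def find_violations(state: State, policy: State) -> List[str]:
    """Attributes where the captured state disagrees with policy."""
    return sorted(k for k, expected in policy.items() if state.get(k) != expected)


def run_cycle(
    capture: Callable[[], State],
    policy: State,
    respond: Callable[[str], None],
) -> Dict[str, List[str]]:
    before = capture()                            # 1. capture the current state
    violations = find_violations(before, policy)  # 2-3. compare and detect
    for attribute in violations:                  # 4. trigger the governed response
        respond(attribute)
    after = capture()                             # 5. capture the next state
    remaining = find_violations(after, policy)    # 6. measure whether it worked
    return {
        "violations": violations,
        "resolved": sorted(set(violations) - set(remaining)),
        "remaining": remaining,
    }
```

The return value is what makes this a loop rather than a pipeline: `resolved` and `remaining` tell you which responses actually changed the next snapshot, and that record is the input to tuning the policy for the following cycle.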

At Akii, this is the architecture we're building toward. Not because it sounds good on a slide, but because every other approach I've seen breaks down at scale. Manual review doesn't scale. Periodic audits miss the drift between checkpoints. Dashboards without action loops are just expensive wallpaper.

What Will the Next Five Years Actually Look Like?

I'll tell you what I think happens. Not what I hope happens. What 25 years of watching technology shifts tells me is coming.

Proactive narrative defense becomes standard practice.

Right now, most brands are reactive. They discover a problem in their AI representation after a customer or analyst points it out. That's embarrassing and expensive. Within three years, serious companies will have always-on monitoring that detects narrative threats before they spread across model updates.

This isn't paranoia. It's the same logic behind reputation monitoring, except the "media" is now an AI model that millions of people treat as a trusted source.

Automated threshold-based intervention becomes normal.

Today, the idea of an automated system correcting your brand's AI presence sounds aggressive or risky. In five years, it'll sound obvious. The same way automated security patching went from "that's too risky" to "that's table stakes."

The thresholds will be brand-specific. A Fortune 500 company might set tight tolerances around competitive positioning. A startup might care more about accuracy of product descriptions. The point is that the response is governed, not improvised.

The data layer will determine who wins.

Companies that start capturing snapshots now will have years of historical data to train their response systems on. They'll know which interventions actually moved the needle. They'll have the pattern data to predict drift before it happens.

Companies that wait will be starting from zero with no historical context. That gap compounds fast.

AI brand intelligence will report to the C-suite.

This won't live in the marketing department forever. When your brand's AI representation directly affects revenue, customer trust, and competitive positioning, it becomes a board-level concern. I've seen this happen with cybersecurity, with data privacy, with digital transformation. The pattern is always the same: it starts in a functional team, then the stakes get high enough that leadership takes ownership.

Why Is This a New Category?

I don't use the phrase "new category" lightly. I've been around long enough to know that most things people call new categories are just existing categories with new labels.

This is different.

AI Brand Intelligence Infrastructure isn't SEO. It isn't brand monitoring. It isn't content marketing or PR. It borrows from all of them, but the core problem it solves is genuinely new: how is your brand being represented in AI-generated answers, and what are you doing about it in real time?

No existing category answers that question. SEO tools track search rankings, not AI model outputs. Brand monitoring tools track media mentions, not how a language model synthesizes your brand into a response. Content marketing tools help you publish, not detect and correct how AI systems interpret what you've published.

The closed-loop part is what makes it infrastructure rather than just another intelligence layer. Intelligence without action is trivia. Infrastructure connects knowing to doing.

At Akii, we're building for this future because we believe it's the only version that actually works at scale. You can see the features we're shipping and the pricing model we've built around it. But the bigger point isn't about us. It's about the shift itself.

The companies that treat AI brand intelligence as operational infrastructure will control their narrative. The ones that treat it as a reporting exercise will spend the next decade reacting to problems they could have prevented.

I know which side of that I want to be on. The question is whether you've decided yet.