The Board Doesn't Care About Your Rankings Anymore

CMOs are walking into quarterly board meetings with the same slide deck they've used since 2018. Organic traffic charts. Keyword ranking tables. Cost-per-lead breakdowns. And they're getting a reaction they didn't expect: polite skepticism.

The board members sitting across the table are the same people who now ask ChatGPT for restaurant recommendations, have Claude summarize competitive landscapes, and use Perplexity to research vendors before taking a meeting. They've experienced the shift firsthand. They know something has changed. When your report doesn't reflect that change, you lose credibility fast.

This isn't a hypothetical problem. It's happening right now, in real boardrooms, at real companies.

Why Does the Traditional SEO Slide Fall Flat?

The core issue is simple. For over a decade, "SEO success" and "high traffic" were basically the same thing. That relationship has broken.

Industry analysis suggests that 68.5% of web traffic is now influenced by AI search. But "influenced" doesn't mean "clicked." AI search products like Perplexity and Google's AI Overviews synthesize answers directly in the response, before the user ever sees a list of links. A prospect can read about your product, compare your pricing, understand your differentiators, and make a decision without ever visiting your website.

So when you show the board a flat or declining traffic line, you might actually be underreporting your real market influence. Or you might be losing ground and not even know it. Either way, the traffic chart isn't telling the truth anymore.

Rankings Don't Mean What They Used To

Here's what most people miss. In traditional search, ranking number one was binary. You won or you didn't. In AI search, the output is probabilistic. A model might recommend you to a user in London but leave you out entirely for someone in New York asking the same question with slightly different context.

Showing the board a static "Rank #3" is misleading in an environment that's fundamentally dynamic. What the board actually wants to know isn't where you sit on a list. They want to know how often you're chosen as the answer. They care about share of voice, not share of pixel.

What Happens When the CEO Tests Your Brand in ChatGPT?

I've seen this play out enough times to call it a pattern. The demand for AI visibility reporting almost always starts with a specific, panicked moment.

A CEO or board member opens ChatGPT on their phone and types something like: "What does [Our Brand] do?" Or worse: "Compare [Our Brand] vs. [Competitor]."

If the AI responds with "I don't have detailed information on that," you've got a problem. If it describes your enterprise platform as a "small business tool," you've got a crisis. The board won't frame it as an SEO issue. They'll frame it as a reputation issue.

This is the part most marketing leaders underestimate. For the board, AI search has become the new homepage. It's the first touchpoint for investors doing due diligence, analysts building coverage models, and high-value prospects deciding whether you're worth a meeting.

If Gemini claims your pricing is double what it actually is, that's not a data error. That's revenue leakage. If Claude describes your product category wrong, that's brand damage happening at scale, silently, with no click trail to trace.

When you report AI visibility to the board, frame it as digital reputation management. You're protecting the brand's integrity in the machines that now mediate how the world makes decisions. That framing resonates because it's accurate.

Why Is AI Visibility a Leading Indicator, Not a Vanity Metric?

This is where the conversation gets strategic, and where most CMOs miss the strongest argument they have.

Traditional marketing metrics are lagging indicators. Revenue happens after a contract is signed. Pipeline happens after a lead converts. Traffic happens after a click. AI visibility happens before all of it.

It happens at the moment of ideation. When a prospect asks "What software should I use to scale my sales team?", the brands cited in that initial answer are the only ones that make it to the evaluation stage. If you're not in the AI's shortlist of recommendations, you don't exist in that buyer's consideration set. Full stop.

Think about what that means for pipeline.

The narrative is straightforward: "If we're not in the AI shortlist today, we won't be in the sales pipeline next quarter." That's not speculation. That's how filtering works. AI models process enormous amounts of information and compress it into a tiny set of recommendations. Being excluded from that set means being excluded from consideration before the buyer ever reaches your website.

By framing AI visibility as "pre-pipeline," you move the conversation from tactical SEO to strategic market positioning. That's a conversation boards actually want to have.

What Metrics Should Actually Be on the Board Slide?

Don't show the board a spreadsheet of schema markup errors or token limits. Those are operational details for your team. To hold the room, you need to translate AI signals into business outcomes.

Organize it around these three areas.

Recognition vs. Preference

The old metric was keyword ranking position. The board metric is inclusion rate: the percentage of times your brand is cited in high-intent buyer queries compared to competitors.

The story you tell sounds like this: "When customers ask AI for the best solution in our category, we're recommended 42% of the time. Our main competitor is recommended 60% of the time. Our goal is to flip this ratio by Q3."

That's a competitive framing the board understands immediately. It's market share language applied to a new surface.
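
To make the inclusion rate concrete, here is a minimal sketch of how the number could be computed. It assumes you have already collected a set of AI answers to one high-intent buyer query and that a simple case-insensitive name match is good enough to count a citation. The brand names, the sample answers, and the `inclusion_rates` function are all hypothetical, not part of any specific tool.

```python
from collections import Counter


def inclusion_rates(responses, brands):
    """Return the share of responses that mention each brand (case-insensitive)."""
    if not responses:
        raise ValueError("need at least one response")
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            # Count each brand at most once per response.
            if brand.lower() in lowered:
                counts[brand] += 1
    return {brand: counts[brand] / len(responses) for brand in brands}


# Hypothetical answers collected for one buyer query.
sample_responses = [
    "For scaling a sales team, consider Acme CRM or Globex Sales.",
    "Globex Sales is a popular choice for mid-market teams.",
    "Many teams pick Globex Sales; Acme CRM is a solid alternative.",
    "Initech Pipeline and Globex Sales both fit this use case.",
    "Acme CRM is often recommended for enterprise rollouts.",
]

rates = inclusion_rates(sample_responses, ["Acme CRM", "Globex Sales"])
for brand, rate in rates.items():
    print(f"{brand}: cited in {rate:.0%} of answers")
```

Because AI output varies from run to run, a rate like this only means something across many answers to the same query. Five answers is enough for an illustration, not for a board slide.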

Competitive Inclusion

The old metric was share of search. The board metric is competitive win rate in AI.

This is where you track where you appear relative to rivals across AI platforms. Surprises tend to live here. You might discover a brand you weren't tracking that's being recommended by Claude as a cheaper alternative. That kind of intelligence changes your competitive strategy, not just your content calendar.

The story: "Brand X, which we weren't monitoring, is being recommended by Claude as a cheaper alternative to us. We're launching a campaign to correct this positioning."

Category Positioning

The old metric was bounce rate or time on site. The board metric is brand understanding score: a measure of whether the AI accurately describes your value proposition, pricing, and features.

The story: "Last quarter, Gemini was stating that we didn't offer enterprise security. We fixed our entity data, and now our brand understanding score is 92%. The risk of misinformation has been neutralized."

That last one matters more than people realize. When a model confidently misstates your capabilities or pricing to a prospect, it's actively working against your sales team. Fixing it is a revenue protection activity, not a content project.
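
One simple way to put a number on brand understanding is to score a checklist. The sketch below assumes a reviewer has read the AI's description of your brand and marked each fact as accurate or not. The checklist items and the `brand_understanding_score` function are hypothetical, and a real scoring method may weight facts differently.

```python
def brand_understanding_score(checks):
    """Percentage of fact checks marked accurate, rounded to one decimal."""
    if not checks:
        raise ValueError("need at least one fact check")
    accurate = sum(1 for is_accurate in checks.values() if is_accurate)
    return round(100 * accurate / len(checks), 1)


# Hypothetical reviewer verdicts for one AI platform.
fact_checks = {
    "describes us as an enterprise platform": True,
    "states current pricing correctly": False,
    "mentions enterprise security features": True,
    "places us in the right product category": True,
}

score = brand_understanding_score(fact_checks)
print(f"Brand understanding score: {score}%")
```

Treating every fact as equal keeps the score easy to explain in the room. If a pricing error matters more than a category error, that is an argument for weighting, which this sketch leaves out.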

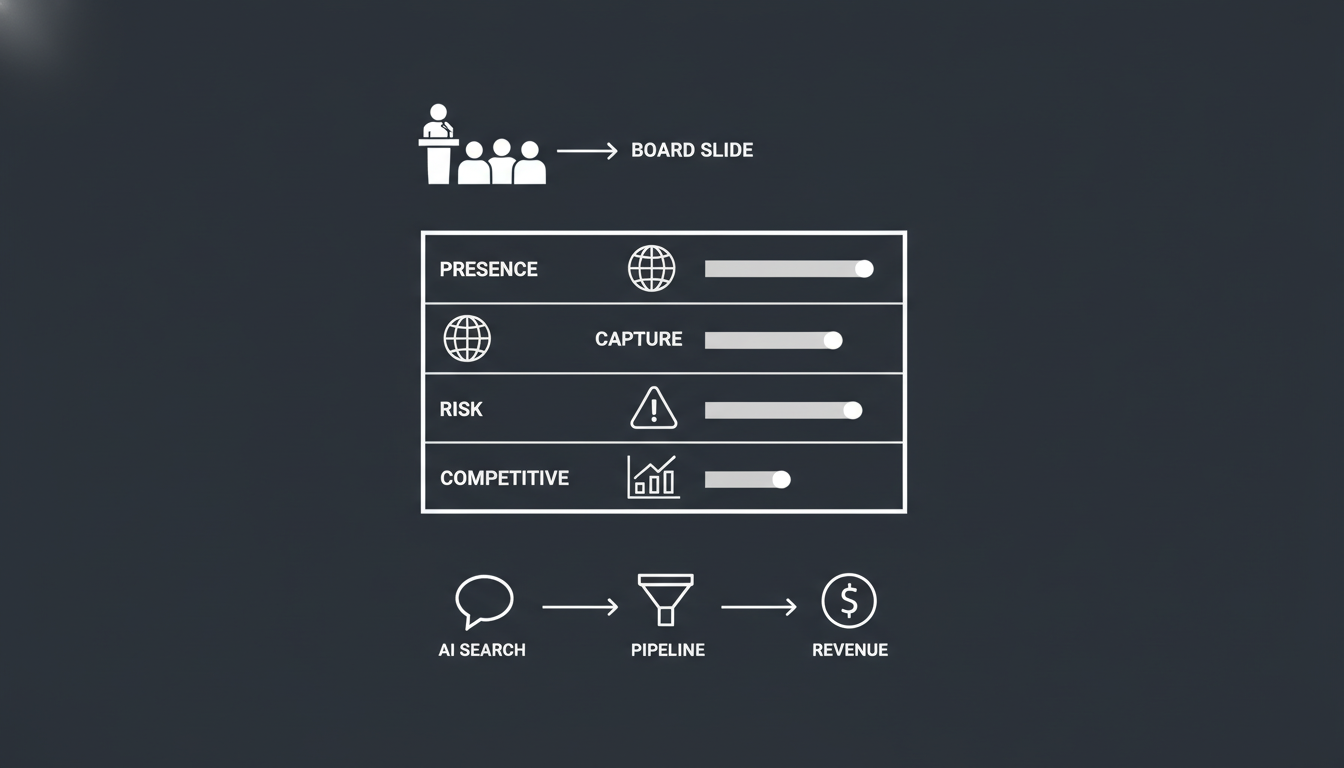

What Should the Actual Board Slide Look Like?

Here's a framework for the single slide you should add to your board deck. It moves from a high-level health score down to specific revenue risks.

Market Presence: AI Visibility Score (0 to 100). Current: 65. Target: 75. This is the aggregate probability of your brand being discovered in modern search.

Demand Capture: Inclusion Rate for transactional queries. Current: 22%. Target: 35%. How often you're cited when buyers ask "Best [Category] Tool."

Brand Risk: Hallucination Frequency. Current: High. Target: Low. Example: "ChatGPT misquotes our pricing. Fixing this is a priority to stop revenue leakage."

Competitive: Share of Voice vs. Category Leader. Current: minus 15 points. Target: minus 5 points. "We're closing the gap against [Competitor X] in AI Overviews."

That's it. One slide. Four rows. Each row connects to a business outcome the board cares about.
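
The competitive row is the one people most often ask how to calculate. As a rough sketch, assuming share of voice means your share of all brand mentions across a set of AI answers, the gap to the category leader is a subtraction in percentage points. The mention counts and function names below are made up for illustration.

```python
def share_of_voice(mention_counts):
    """Each brand's share of total mentions, in percent."""
    total = sum(mention_counts.values())
    if total == 0:
        raise ValueError("no mentions recorded")
    return {brand: 100 * count / total for brand, count in mention_counts.items()}


def gap_to_leader(mention_counts, brand):
    """Percentage-point gap between `brand` and the top brand (0 if it leads)."""
    shares = share_of_voice(mention_counts)
    return shares[brand] - max(shares.values())


# Hypothetical mention counts across a set of AI answers.
mentions = {"Our Brand": 50, "Competitor X": 80, "Competitor Y": 70}

gap = gap_to_leader(mentions, "Our Brand")
print(f"Share of voice gap vs. leader: {gap:+.0f} points")
```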

What to Leave Out

Raw prompt lists. Don't show the 1,000 questions you tested. Summarize the findings.

Technical jargon. Skip "JSON-LD," "robots.txt," and "vector embeddings." Use plain language like "machine readability" or "digital infrastructure."

Daily volatility. AI scores fluctuate constantly. Report on 30-day trends, not daily spikes. The board wants trajectory, not noise.
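
If your team tracks a daily score, a trailing 30-day average is one straightforward way to turn that noise into a trend line. The sketch below uses invented daily values and a hypothetical `trailing_average` helper. It is an assumption about how the smoothing could be done, not a description of how any particular tool does it.

```python
from statistics import mean


def trailing_average(daily_scores, window=30):
    """Mean of the most recent `window` values ending at each day.

    Days before a full window is available are skipped.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    return [
        mean(daily_scores[end - window:end])
        for end in range(window, len(daily_scores) + 1)
    ]


# Hypothetical data: 30 noisy days around 60, then 30 noisy days around 70.
daily = [60 + (5 if day % 2 else -5) for day in range(30)]
daily += [70 + (5 if day % 2 else -5) for day in range(30)]

trend = trailing_average(daily)
print(f"First 30-day average: {trend[0]}, latest: {trend[-1]}")
```

The daily values swing by ten points from one day to the next, but the averaged line only shows the move from 60 to 70. That move is the part the board needs to see.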

How Does AI Visibility Connect to Revenue?

This question will come up. Be ready for it. Direct attribution is difficult because many AI interactions are zero-click. But you can still show correlation, and that case gets stronger with every quarter of data you collect.

Explaining the Dark Funnel

If your direct traffic is rising but organic search clicks are flat, AI is often the driver. Buyers are getting their answer in Perplexity or ChatGPT, then coming straight to your site to convert. They skip the Google click entirely.

The argument: "Our increase in direct demo requests correlates with our 20-point jump in AI Visibility Score. Buyers are finding the answer in AI and coming directly to us to convert."
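
Before you make that argument, check that the correlation actually holds in your own numbers. Here is a minimal sketch using a hand-written Pearson correlation over two monthly series. The figures are invented for illustration, and the `pearson` function is just the standard formula, not a feature of any analytics product.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length, at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return covariance / (spread_x * spread_y)


# Hypothetical monthly figures: visibility score and direct demo requests.
visibility = [45, 48, 52, 55, 61, 65]
demo_requests = [30, 31, 36, 35, 42, 44]

r = pearson(visibility, demo_requests)
print(f"Correlation between visibility and demo requests: {r:.2f}")
```

A high coefficient supports the story but doesn't prove AI visibility caused the demos. Six data points is a thin sample, so present it as a signal worth watching, not as attribution.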

Reducing Downstream Friction

When AI models understand your brand accurately, they qualify leads for you. Prospects arrive already knowing what you do, what you cost, and why you're different.

The argument: "By fixing our pricing data in the AI knowledge graph, we're seeing higher quality leads. Prospects arrive knowing our true price point, which reduces friction for the sales team and shortens the sales cycle."

The Insurance Argument

This one is forward-looking, but it's grounded in what's already happening. Autonomous agents are starting to book software, compare vendors, and make purchasing recommendations on behalf of users. If your brand isn't machine-readable today, you'll be invisible to the automated economy that's forming right now.

The argument: "This investment isn't just about today's pipeline. It's insurance against being excluded from the next generation of how buyers find and choose solutions."

How Do You Actually Build This Report?

Let me be practical about the steps, because frameworks are useless without execution.

First, run the audit. Don't walk into the board meeting with guesses. You need hard data on your current inclusion rates, brand understanding scores, and competitive positioning across AI platforms. This is what tools like Akii's AI Brand Audit are built to provide. Real numbers, not assumptions.

Second, highlight the risk. Nothing creates urgency like a screenshot. Show the board a real example of an AI hallucination about your brand. Show them what happens when someone asks ChatGPT to compare you against your top competitor. If the result is bad, that's your most persuasive slide.

Third, present the plan. Show the roadmap from "invisible" to "recommended." Break it into quarters. Tie each milestone to a business outcome. Make it clear this isn't a side project. It's a strategic initiative.

The Real Shift Underneath All of This

I want to step back and name what's actually happening here, because the reporting question is really a symptom of something bigger.

The CMO's job has changed. It's no longer just about capturing attention on a search results page. It's about engineering how your brand is understood and represented by the AI systems that increasingly mediate how the world makes decisions.

That's not a marketing problem. That's a business problem. Reporting on it correctly is how you demonstrate that marketing understands the shift and is acting on it.

When you report AI visibility to the board, you're not just showing a new metric. You're showing that marketing is positioned as a guardian of the brand's digital presence and a driver of future demand. Not reacting to algorithm changes. Proactively securing the brand's future.

That's the narrative that earns budget, builds trust, and keeps you in the room.

If you want to start with real data instead of assumptions, Akii can show you exactly where your brand stands across the AI platforms that matter. The numbers are usually more surprising than people expect. Sometimes better, sometimes worse. But always worth knowing before your next board meeting.