The Wake-Up Call Is Not the Strategy

Running your first AI visibility scan is like getting bloodwork done after years of skipping checkups. The results tell you something real. Maybe something uncomfortable. But results alone don't make you healthier.

I've watched dozens of teams run a free scan, see their brand is invisible or misrepresented in ChatGPT or Gemini, and then split into two groups. Some treat it as a fire drill, scramble for a week, then move on. Others treat it as the starting point of something more disciplined.

The second group is the one that protects its revenue.

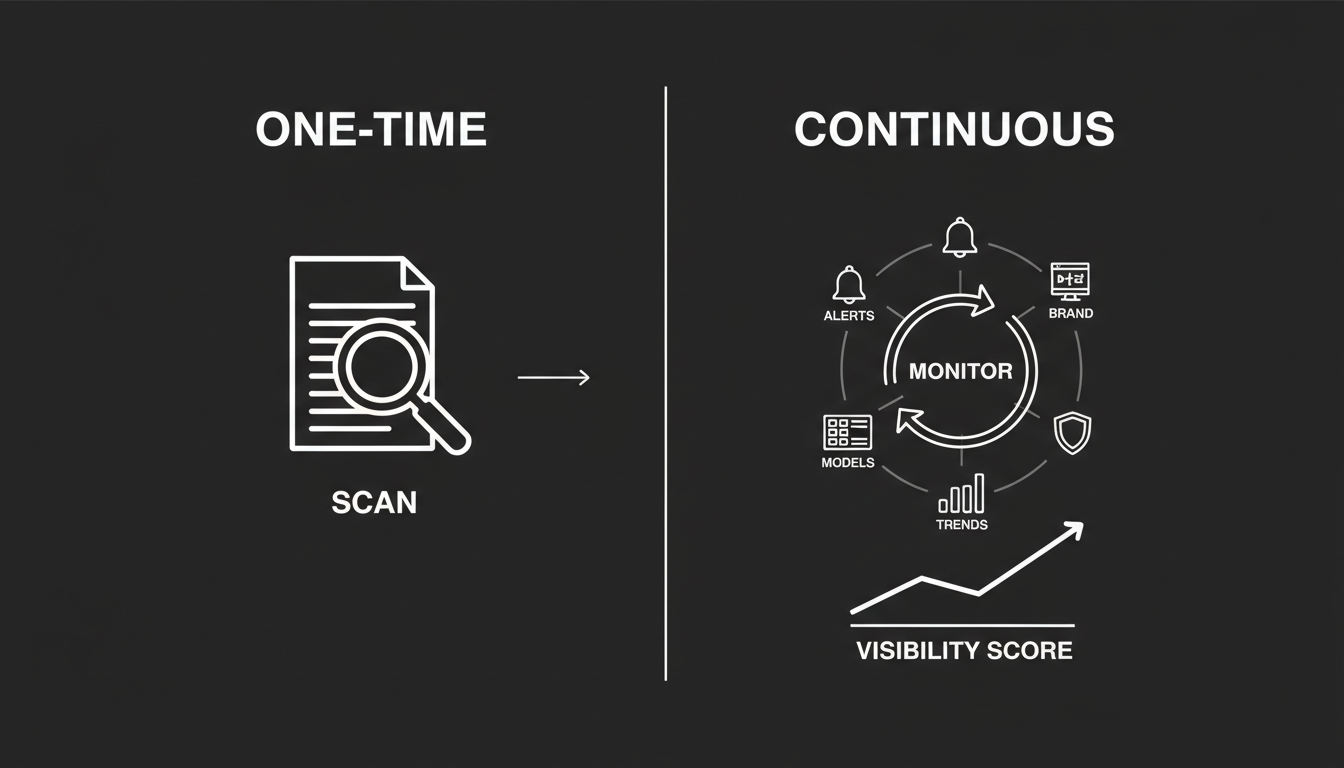

A single scan answers one question: "Am I part of the conversation?" Useful question. But in a world where AI models update frequently and answers shift with how a user phrases a prompt, a one-time check is a diagnosis. Not a strategy.

If you want to actually protect your brand and your market share inside AI, you need to move from checking visibility to building a system around it. Here's what that looks like in practice.

What Does a Single Scan Actually Tell You?

A free scan like the AI Visibility Score from Akii gives you a fast, honest read across four dimensions:

Brand Recognition. Does the model know you exist? Or are you completely invisible when someone asks about your category?

Brand Understanding. If it knows you, does it describe what you do accurately? Or is it fabricating details about your features, your pricing, your positioning?

Content Coverage. Do you have authority on the topics your customers are actually asking about? Or does the model pull from competitors and generic sources instead?

Sentiment. When your brand does appear, is the framing positive? Or is the model surfacing old negative reviews and outdated criticism?

This baseline matters. It's how you find the immediate problems. Maybe Gemini is telling people you don't offer enterprise support when you do. Maybe Claude is confusing you with a competitor. Those are the kinds of errors that cost you leads right now, today.

But that scan only reflects reality at one specific moment. And that moment passes fast.

Why Do Manual Checks Always Break Down?

I've seen this pattern more times than I can count. A marketing team gets concerned about AI visibility, so they assign someone to type prompts into ChatGPT and Perplexity every Monday morning. Log the results in a spreadsheet. Report back in the team meeting.

Sounds reasonable. Never works. Here's why.

The volatility problem

AI visibility is not stable. Inclusion rates can shift dramatically after a model refresh or new content ingestion. A manual check on Monday might miss a hallucination that appears on Wednesday. That hallucination then costs you leads for the rest of the week, and you don't even know it happened.

The models don't announce when they change their minds about your brand. They just do it.

The scale problem

One prompt in one model gives you one data point. To get an accurate picture, you need to test definitional queries, evaluative queries, and transactional queries across at least five models: ChatGPT, Gemini, Claude, Perplexity, and others. For a single brand, that's hundreds of copy-paste actions. It doesn't scale. It's boring. And it's full of human error.

Who's going to do that consistently for six months? Nobody. That's the honest answer.
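To put a rough number on that workload, here is a minimal sketch of the prompt-by-model grid. The ten phrasings per query type, the fifth "other" model, and the weekly cadence are assumptions I picked for illustration, not measured figures.

```python
from itertools import product

# Hypothetical values for illustration; substitute your own prompts and models.
QUERY_TYPES = ["definitional", "evaluative", "transactional"]
PHRASINGS_PER_TYPE = 10  # assumed number of prompt variations per query type
MODELS = ["ChatGPT", "Gemini", "Claude", "Perplexity", "other"]
WEEKS_IN_SIX_MONTHS = 26


def build_check_matrix(query_types, phrasings_per_type, models):
    """Return every (query_type, phrasing_index, model) combination to test."""
    return list(product(query_types, range(phrasings_per_type), models))


checks = build_check_matrix(QUERY_TYPES, PHRASINGS_PER_TYPE, MODELS)
checks_per_week = len(checks)  # one full pass per week
checks_per_six_months = checks_per_week * WEEKS_IN_SIX_MONTHS

print(f"{checks_per_week} checks per weekly pass")
print(f"{checks_per_six_months} checks over six months")
```

Under those assumptions, one weekly pass is 150 copy-paste actions, and six months of Mondays is 3,900. Change the inputs to match your own category and the total moves, but it rarely gets small.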

The context problem

Even if someone does the manual work faithfully, a manual check tells you what the model said. It doesn't tell you why. It doesn't tell you how that answer compares to what the model said last week or last month. Without historical data, you can't connect a drop in visibility to a specific website update, a PR campaign, or a competitor's content push.

You're flying blind with a clipboard.

What Does "Operational" AI Visibility Actually Look Like?

This is where the shift happens. Operationalizing visibility means you stop treating it as a periodic task and start treating it as continuous intelligence.

Think of it as moving from a one-time scan to a 24/7 watchtower.

With continuous monitoring through a system like the Akii AI Visibility Monitor, you're tracking your brand across multiple dimensions and data points automatically. Here's what changes:

Continuous monitoring. The system tests your brand across all major models around the clock. You're not waiting until Monday morning. You're capturing shifts as they happen.

Automated alerts. You get notified when something changes. If your brand understanding score drops because Gemini started hallucinating your pricing, you know immediately. Not next week.

Historical trends. You can see your performance over weeks and months. This is what lets you prove ROI to stakeholders. You can show a clear line between your optimization work and your rising inclusion rate. That's the difference between "I think we're doing okay" and "Here's the data."
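The alerting and trend ideas above can be sketched in a few lines. This is not how the Akii monitor is implemented; the `Snapshot` record, the 0 to 100 score scale, and the 10-point drop threshold are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Snapshot:
    """One stored measurement (hypothetical record shape)."""
    day: date
    model: str
    dimension: str
    score: float  # assumed 0-100 scale


DROP_THRESHOLD = 10.0  # assumed: alert when a score falls by 10 points or more


def find_drops(history, threshold=DROP_THRESHOLD):
    """Compare each snapshot with the previous one for the same model and dimension."""
    alerts = []
    last_seen = {}
    for snap in sorted(history, key=lambda s: s.day):
        key = (snap.model, snap.dimension)
        previous = last_seen.get(key)
        if previous is not None and previous.score - snap.score >= threshold:
            alerts.append((snap.day, snap.model, snap.dimension, previous.score, snap.score))
        last_seen[key] = snap
    return alerts


history = [
    Snapshot(date(2025, 1, 6), "Gemini", "brand_understanding", 82.0),
    Snapshot(date(2025, 1, 6), "ChatGPT", "brand_understanding", 75.0),
    Snapshot(date(2025, 1, 8), "Gemini", "brand_understanding", 64.0),
    Snapshot(date(2025, 1, 8), "ChatGPT", "brand_understanding", 73.0),
]

for day, model, dimension, before, after in find_drops(history):
    print(f"{day}: {model} {dimension} fell from {before} to {after}")
```

The point of keeping every snapshot rather than only the latest one is that the same stored history serves both jobs: alerts come from comparing neighbors, and trend lines come from plotting the whole series.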

I've spent 25 years building and running technology companies. The pattern is always the same. Teams that build measurement systems around the things that matter are the ones that improve. Teams that check in sporadically are the ones that get surprised.

Who Actually Needs Ongoing Monitoring?

Not every business needs enterprise-grade tracking. Let me be direct about that.

If you're a solo blogger who rarely updates your offer and your primary goal is simple traffic, a periodic free scan is probably enough. Check in quarterly. Fix what's broken. Move on.

For certain types of businesses, though, the cost of invisibility is too high to ignore.

SaaS and tech founders

If you sell software, you're in a zero-click battleground right now. When someone asks an AI model "What's the best project management tool for remote teams?" and your competitor gets named while you don't, that's revenue walking out the door. Every day.

It gets worse when the model doesn't just ignore you but actively misrepresents you. Maybe it says your product is "expensive" based on outdated pricing. Maybe it confuses your feature set with a competitor's. You need monitoring to protect the accuracy of how AI represents your product.

Agencies and consultants

Your clients expect you to know what's happening before they do. Continuous AI visibility monitoring lets you offer something new and genuinely valuable: AI reputation management as a retainer service. Monthly reports on share of voice inside AI models. That's a real differentiator.

eCommerce brands

Product details change constantly. New SKUs, price changes, stock status. You need to know whether AI agents are picking up your latest data through your schema markup, or whether they're serving customers outdated information. If an AI shopping assistant tells someone your product is out of stock when it isn't, you just lost a sale to a competitor who has their data in order.
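For reference, this is roughly what keeping schema markup current means in practice. The sketch below generates schema.org Product markup as JSON-LD from your latest catalog data. The product name, SKU, and price are made up, and whether any particular AI agent reads this markup is not something the code can guarantee.

```python
import json


def product_jsonld(name, sku, price, currency, in_stock):
    """Build a schema.org Product object with a single Offer."""
    availability = "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }


# Hypothetical catalog entry for illustration.
markup = product_jsonld("Example Trail Shoe", "EX-1234", 89.0, "USD", True)

# The printed JSON would go inside a <script type="application/ld+json"> tag on the product page.
print(json.dumps(markup, indent=2))
```

Regenerating this from the same source of truth as your storefront, every time price or stock changes, is what keeps the page and the markup from drifting apart.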

The honest question to ask yourself

Here's the test: if an AI model gave wrong information about your business to 1,000 potential customers this week, would it matter? If the answer is yes, you need more than a periodic scan.

How Do You Turn Visibility Data Into Actual Decisions?

This is the part that separates monitoring from intelligence. Tracking your AI visibility is only useful if it changes what you do.

The best monitoring systems don't just show you numbers. They give you a prioritized roadmap.

Prioritize fixes by impact

Not all visibility problems are equal. A system that identifies optimization opportunities and ranks them by impact is worth far more than a raw dashboard. Maybe fixing your product schema will yield a higher return than writing a new blog post. Maybe correcting a single hallucination about your pricing will recover more leads than a month of content marketing.

You want to know where to spend your time. The data should tell you that.
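One simple way to rank fixes is impact divided by effort. The sketch below assumes you can put rough 1 to 10 estimates on both; the fixes and their scores are invented for the example, and a real system may weigh things very differently.

```python
# Hypothetical fixes with rough 1-10 estimates; replace with your own.
fixes = [
    {"name": "Write a new blog post", "impact": 4, "effort": 5},
    {"name": "Fix product schema", "impact": 7, "effort": 3},
    {"name": "Correct pricing hallucination", "impact": 9, "effort": 2},
]


def prioritize(items):
    """Sort fixes by impact-to-effort ratio, highest first."""
    return sorted(items, key=lambda f: f["impact"] / f["effort"], reverse=True)


for rank, fix in enumerate(prioritize(fixes), start=1):
    ratio = fix["impact"] / fix["effort"]
    print(f"{rank}. {fix['name']} (impact/effort = {ratio:.2f})")
```

With these made-up numbers, the pricing correction lands first and the blog post last, which is the kind of ordering a raw dashboard will not give you on its own.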

Build strategic reporting

If you're reporting to executives or clients, vague reassurances don't work. "I think we're doing fine in AI" is not a report. "We own 42% of the share of voice in ChatGPT for our primary category, up from 28% last quarter" is a report.

Professional dashboards that show competitive positioning inside AI models give you that language. They move the conversation from gut feeling to evidence. That matters when you're asking for budget, defending a strategy, or proving the value of your work.
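A share-of-voice figure like the one above can be computed in more than one way. Here is one minimal sketch, assuming you have saved the raw model responses and count a brand as mentioned when its name appears in the text. The brand names and responses are placeholders, and plain substring matching is a simplification that will miscount brands with common-word names.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Return each brand's share of all brand mentions across the responses."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1  # at most one mention per brand per response
    total = sum(counts.values())
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Placeholder responses standing in for saved model answers to category prompts.
responses = [
    "For remote teams, try Acme or Bolt.",
    "Acme is a popular choice.",
    "Bolt works well for small teams.",
    "Many teams start with Acme.",
]

sov = share_of_voice(responses, ["Acme", "Bolt"])
for brand, share in sov.items():
    print(f"{brand}: {share:.0%}")
```

Run the same calculation per model and per quarter, and you have the two numbers that sentence needs: where you are and where you were.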

Catch problems before they harden

Here's something most people miss about AI models. When a model starts hallucinating something about your brand, that misinformation can solidify over time. If the wrong information gets repeated across the web and picked up again in later training cycles, it can become harder to correct.

Early detection is the whole game. Catch a hallucination early, and you can deploy corrective content and structured data before the error becomes entrenched. Wait six months, and you're fighting uphill.

What's the Real Cost of Waiting?

Most teams underestimate how quickly this space is moving. A year ago, the percentage of people using AI models to research purchases and make decisions was a fraction of what it is today. That number is only going in one direction.

Every week you're not monitoring how AI represents your brand, you're accumulating invisible risk. Not the dramatic kind. The slow kind. The kind where your competitor gets mentioned and you don't, neither of you notices for months, and by the time you check, the gap is wide.

The free scan is the right starting point. It shows you where you stand right now. But if what you find matters to your business, the next step is building a system around it.

You can explore what that system looks like at Akii, including the monitoring tools and pricing that make sense for your stage.

The Pattern I Keep Seeing

After 25 years of building companies through multiple technology shifts, I can tell you the pattern holds every time. A new channel emerges. Early movers treat it seriously and build measurement systems. Everyone else treats it as a novelty until it's too late.

AI visibility is not a novelty. It's the next layer of your digital reputation. And reputation, once lost inside a model that millions of people query every day, is expensive to rebuild.

Start with the scan. See what it tells you. Then decide whether your brand is important enough to watch continuously.

Most of the people reading this already know the answer.