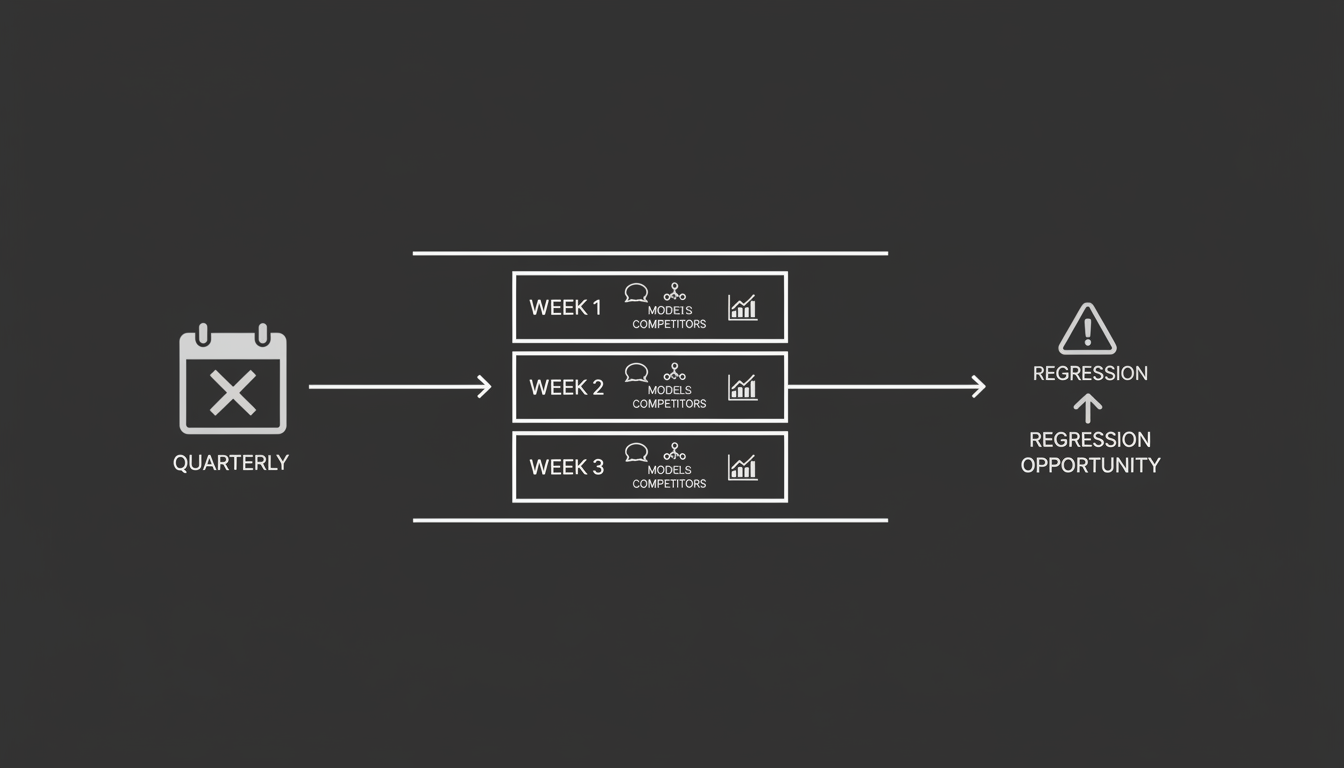

The Reporting Cadence Problem

Most marketing teams still run on a quarterly cycle for anything related to search visibility. Some have upgraded to monthly dashboards. A few check weekly, but they're usually staring at the same static analytics they've used for years.

Here's the problem. The thing they should be watching most closely right now moves faster than any of those cycles can capture.

AI visibility, meaning how your brand shows up in AI-generated answers, doesn't follow the same rhythm as traditional search rankings. It shifts week to week. Sometimes day to day. If you're reviewing it quarterly, you're looking at a snapshot of something that's already changed multiple times since you last checked.

I've watched teams spend weeks preparing quarterly SEO reports that were outdated before the slide deck was finished. That was always somewhat true with traditional search. With AI answers, the gap between reporting cadence and reality is much wider.

Why? Because the inputs shaping AI answers are different. These systems aren't just crawling your site and ranking pages. They're pulling from a mix of sources, weighing recency, and responding to how prompts are phrased. That's a fundamentally different system, and it needs a fundamentally different review cadence.

If your team treats AI visibility like another line item on a quarterly report, you'll miss things that matter. Not small things. Shifts in how your brand is positioned, whether competitors are being recommended instead of you, whether the information AI engines surface about you is even accurate.

The fix isn't complicated. It's a cadence change.

AI Answers Change Faster Than Your Reports

What makes AI visibility so volatile compared to traditional search? A few things.

Prompt sensitivity. The way someone phrases a question to ChatGPT, Perplexity, or Gemini can completely change which brands appear in the answer. "Best CRM for small teams" and "top CRM for startups" might return different results, even though a human would consider them roughly the same question. Small phrasing differences lead to different source material being surfaced, which leads to different brand mentions. This isn't a bug. It's how these systems work.

Competitor insertion. Your competitors are figuring this out too. When a competitor publishes new content, gets cited in a new source, or starts appearing in AI training data, they can show up in answers where they weren't a week ago. No notification. No rank tracker pinging you. Unless you're actively checking, you won't know.

Source updates. AI engines pull from sources that update constantly. A blog post gets revised. A review site changes its rankings. A new comparison article goes live. Any of these can shift which brands an AI engine references when answering a given prompt. The models themselves get updated too, and an update can change behavior across the board.

Put those together and you have a system where visibility can shift meaningfully in a single week. Not always. But often enough that checking quarterly is like checking the weather forecast once a season and wondering why you keep getting rained on.

So how often should you actually be looking at this? Weekly, at minimum. Not because you need to react to every fluctuation, but because you need to see patterns forming before they become problems.

A useful weekly review doesn't need to be a massive production. It needs to focus on the right signals.

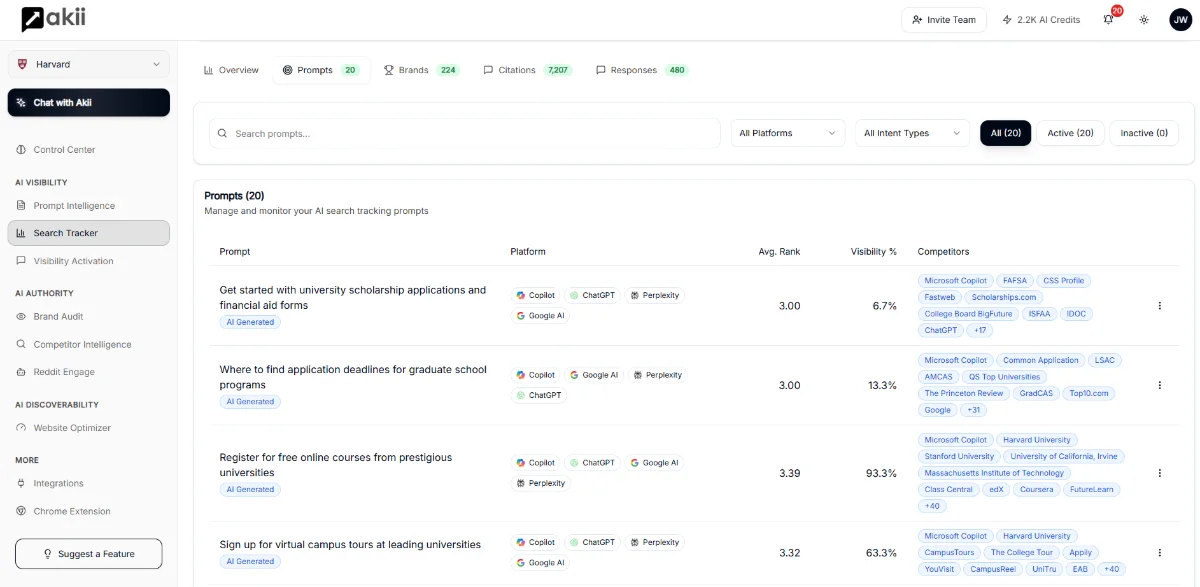

Prompt coverage checks

Pick the 15 to 30 prompts that matter most to your business. These are the questions your ideal customers are actually asking AI engines, such as "What's the best tool for X?" or "How do I solve Y?" Run them across the major AI platforms every week and track whether your brand appears in the answers.

This is the core of AI visibility tracking. Not page rankings. Not keyword positions. Whether your brand gets mentioned when someone asks an AI engine a question that should lead to you.
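If you're logging answers by hand or in a spreadsheet export, the coverage check itself is simple to script. Here's a minimal sketch, assuming you've already saved each answer as text. The `AnswerRecord` structure, the engine labels, and the brand names are all made up for illustration, and the matching is a plain whole-word text search, so it won't catch misspellings or indirect references.

```python
import re
from dataclasses import dataclass


@dataclass
class AnswerRecord:
    """One saved answer: which prompt, which engine, and the answer text."""
    prompt: str
    engine: str
    answer_text: str


def brand_mentioned(answer_text: str, aliases: list[str]) -> bool:
    """Return True if any alias appears as a whole word or phrase, ignoring case."""
    for alias in aliases:
        pattern = r"(?<!\w)" + re.escape(alias) + r"(?!\w)"
        if re.search(pattern, answer_text, flags=re.IGNORECASE):
            return True
    return False


def coverage_rate(records: list[AnswerRecord], aliases: list[str]) -> float:
    """Share of saved answers that mention the brand (0.0 when there are none)."""
    if not records:
        return 0.0
    hits = sum(brand_mentioned(r.answer_text, aliases) for r in records)
    return hits / len(records)


# Hypothetical data: two prompts, two engines, one week.
records = [
    AnswerRecord("best CRM for small teams", "engine_a",
                 "Popular picks include ExampleCRM and OtherCRM."),
    AnswerRecord("top CRM for startups", "engine_a",
                 "Many startups choose OtherCRM."),
    AnswerRecord("best CRM for small teams", "engine_b",
                 "Consider examplecrm for its pricing."),
    AnswerRecord("top CRM for startups", "engine_b",
                 "OtherCRM and ThirdCRM are common choices."),
]

rate = coverage_rate(records, ["ExampleCRM"])
print(f"Coverage this week: {rate:.0%}")
```

Save the number each week. One week's rate tells you little; the series is what you're after.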

At Akii, we built AI visibility tracking specifically because this kind of monitoring didn't exist in a structured way. Legacy SEO tools weren't designed for it. They track pages and keywords. What matters now is whether you show up in the actual answer.

Cross-model comparisons

Don't just check one AI engine. ChatGPT, Gemini, Perplexity, and Claude can give very different answers to the same prompt. Your brand might be well-represented in one and completely absent in another. That's useful information. It tells you where your content and authority are strong and where they're not.

Tracking across models also helps you spot when a specific engine changes its behavior. If you suddenly disappear from Gemini answers but stay present in ChatGPT, that's a signal worth investigating.
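To make the comparison concrete, here's a rough sketch of how per-engine coverage and cross-engine gaps could be computed. It assumes each check has already been reduced to a `(prompt, engine, mentioned)` tuple. That format, the engine labels, and the function names are my own placeholders, not anything a specific tool produces.

```python
from collections import defaultdict


def coverage_by_engine(results):
    """Map each engine to the share of tracked prompts where the brand appeared."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for _prompt, engine, mentioned in results:
        totals[engine] += 1
        hits[engine] += int(mentioned)
    return {engine: hits[engine] / totals[engine] for engine in totals}


def engine_gaps(results):
    """Find prompts where the brand shows up on some engines but not others.

    Returns a dict of prompt -> sorted list of engines where it was missing.
    """
    by_prompt = defaultdict(dict)
    for prompt, engine, mentioned in results:
        by_prompt[prompt][engine] = mentioned

    gaps = {}
    for prompt, engines in by_prompt.items():
        missing = sorted(e for e, seen in engines.items() if not seen)
        # Only a gap if the brand is present somewhere and absent somewhere.
        if missing and len(missing) < len(engines):
            gaps[prompt] = missing
    return gaps


# Hypothetical results for one week.
results = [
    ("best CRM for small teams", "engine_a", True),
    ("best CRM for small teams", "engine_b", False),
    ("top CRM for startups", "engine_a", True),
    ("top CRM for startups", "engine_b", True),
]

per_engine = coverage_by_engine(results)
gaps = engine_gaps(results)
print(per_engine)
print(gaps)
```

The gaps list is usually the more useful output. It points at specific prompts where one engine already treats you as relevant and another doesn't.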

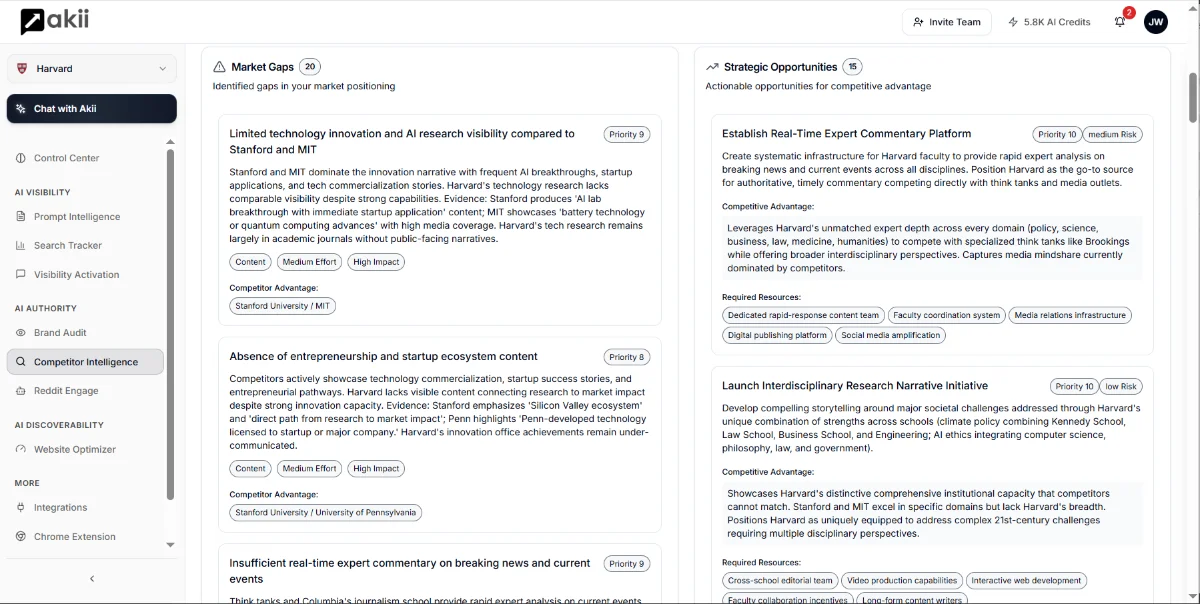

Competitor inclusion shifts

Every week, note which competitors are showing up in the answers you're tracking. Are new names appearing? Are established competitors being mentioned more or less frequently? Is anyone consistently showing up where you're not?

This is competitive intelligence traditional tools can't give you. It tells you who's winning the AI recommendation layer, not just who's ranking on a search results page. Brand state snapshots, meaning a dated record of which brands appeared for each prompt, make this kind of comparison practical rather than manual.
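A week-over-week comparison can be sketched in a few lines. This assumes you've already extracted the set of brands named in each answer, which is the hard part and isn't shown here. The brand names and the shape of the output are illustrative only.

```python
from collections import Counter


def weekly_counts(answer_brands):
    """Count how many answers mentioned each brand in one week.

    answer_brands: list of sets, one set of brand names per answer.
    """
    counts = Counter()
    for brands in answer_brands:
        counts.update(brands)
    return counts


def inclusion_shifts(last_week, this_week):
    """Compare two weeks and report new names, dropped names, and count changes."""
    prev = weekly_counts(last_week)
    curr = weekly_counts(this_week)
    all_brands = sorted(set(prev) | set(curr))
    return {
        "new": sorted(set(curr) - set(prev)),
        "dropped": sorted(set(prev) - set(curr)),
        # Counter returns 0 for brands it has not seen.
        "delta": {b: curr[b] - prev[b] for b in all_brands if curr[b] != prev[b]},
    }


# Hypothetical brand sets for three tracked answers per week.
last_week = [{"OurBrand", "RivalOne"}, {"RivalOne"}, {"OurBrand"}]
this_week = [{"RivalOne", "RivalTwo"}, {"RivalOne", "RivalTwo"}, {"OurBrand", "RivalOne"}]

shifts = inclusion_shifts(last_week, this_week)
print(shifts)
```

In this made-up example, a new competitor appears in two answers while our own count slips by one. That's the kind of change no rank tracker will flag.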

What Teams Should Ignore

Weekly reviews create a real risk: overreacting to noise. Here's what to filter out.

Short-term noise

If your brand disappears from one prompt on one engine for one week, that's not necessarily a trend. AI answers have natural variability. A single data point isn't a pattern. You're looking for consistent shifts over two to four weeks, not one-off fluctuations.

The whole point of weekly cadence is to build a picture over time. Not to panic every Monday morning.
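One way to keep yourself honest is to encode the "not one week" rule. The sketch below treats a disappearance as real only after it persists for a minimum number of consecutive weekly checks. The function name and the default of two weeks are my assumptions, loosely based on the two-to-four-week window above.

```python
def sustained_absence(history: list[bool], min_weeks: int = 2) -> bool:
    """Return True when a previously present brand has been missing for
    the last `min_weeks` consecutive weekly checks.

    history: oldest-first list, True when the brand appeared that week.
    """
    if len(history) <= min_weeks:
        return False  # not enough history to call anything a shift
    recent = history[-min_weeks:]
    earlier = history[:-min_weeks]
    return not any(recent) and any(earlier)


one_off = sustained_absence([True, True, True, False])        # single miss
two_weeks = sustained_absence([True, True, False, False])     # two misses in a row
never_there = sustained_absence([False, False, False])        # no earlier presence

print(one_off, two_weeks, never_there)
```

A single missing week returns `False` here, so it gets logged but not escalated. Raise `min_weeks` if your prompts are especially noisy.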

Single prompt tests

Running one prompt and drawing conclusions from it is the AI visibility equivalent of checking one keyword and declaring your SEO strategy a success or failure. It's not enough data. Your review should cover a meaningful set of prompts, and your conclusions should come from the aggregate, not individual results.

Anecdotal results

"I asked ChatGPT about our product and it said something weird" is not a data point. It's an anecdote. The value of a structured weekly review is that it replaces anecdotes with patterns. If someone on your team reports something odd, great. Add that prompt to your tracking set. Don't change strategy based on a single conversation with an AI chatbot.

The discipline here is the same discipline that separates good analytics teams from bad ones. Look at trends, not moments.

Turning Reviews Into Action

A weekly review that doesn't lead to action is just a meeting. Here's how to make it operational.

Detect regressions

The most important thing a weekly review does is catch regressions early. If your brand was consistently mentioned in answers to a key prompt and then stops appearing, you want to know that in week one, not quarter two.

Early detection gives you time to respond. Maybe a competitor published a strong comparison piece. Maybe a source that cited you changed its content. Maybe the AI model updated and is now weighting different signals. Whatever the cause, catching it in a week means you can investigate and act while the window is still open.

This is where tracking AI visibility metrics consistently pays off. You can't detect a regression if you don't have a baseline.
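Here's a minimal sketch of a baseline check, assuming you store a weekly presence rate per prompt (the share of engines where your brand appeared). The four-week baseline and the 0.25 drop threshold are arbitrary starting points I picked for the example, not recommended values.

```python
from statistics import mean


def detect_regressions(weekly_coverage, baseline_weeks=4, drop_threshold=0.25):
    """Flag prompts whose latest weekly rate fell well below their baseline.

    weekly_coverage: dict of prompt -> oldest-first list of weekly presence rates.
    Returns a dict of prompt -> (baseline_mean, latest_rate) for flagged prompts.
    """
    flagged = {}
    for prompt, series in weekly_coverage.items():
        if len(series) < baseline_weeks + 1:
            continue  # no baseline yet, so nothing to compare against
        baseline = mean(series[-(baseline_weeks + 1):-1])
        latest = series[-1]
        if baseline - latest >= drop_threshold:
            flagged[prompt] = (baseline, latest)
    return flagged


# Hypothetical five weeks of data across four engines.
weekly_coverage = {
    "best CRM for small teams": [1.0, 0.75, 1.0, 0.75, 0.25],
    "top CRM for startups": [0.5, 0.5, 0.75, 0.5, 0.5],
    "CRM with email automation": [1.0, 0.0],  # too new to judge
}

flagged = detect_regressions(weekly_coverage)
print(flagged)
```

A flag here is a reason to investigate, not a verdict. The prompt with only two weeks of history is skipped entirely, which is the baseline point in practice.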

Identify opportunity prompts

Your weekly review will also surface prompts where you're not showing up but should be. These are opportunities. Maybe there's a question your customers are clearly asking that no brand is answering well. Maybe a competitor is weakly represented and you could fill the gap.

Opportunity prompts should feed directly into your content strategy. Not in a vague "let's write about this someday" way. In a specific, prioritized, "this prompt has high relevance and low brand presence, let's address it this sprint" way.
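To turn "high relevance and low brand presence" into a sortable list, a simple score is enough to start. The sketch below multiplies a relevance rating by the share of answers where you're absent. Both the formula and the 0-to-1 relevance rating are assumptions for illustration, and the relevance numbers would come from your own team's judgment.

```python
from dataclasses import dataclass


@dataclass
class PromptStats:
    prompt: str
    relevance: float  # 0 to 1, assigned by your team (hypothetical scale)
    presence: float   # 0 to 1, share of tracked answers that mention your brand


def opportunity_score(stats: PromptStats) -> float:
    """Higher when the prompt matters a lot and the brand rarely appears."""
    return stats.relevance * (1.0 - stats.presence)


def rank_opportunities(all_stats, top_n=3):
    """Return the top_n prompts by opportunity score, best first."""
    return sorted(all_stats, key=opportunity_score, reverse=True)[:top_n]


# Hypothetical tracked prompts.
tracked = [
    PromptStats("best CRM for small teams", relevance=0.9, presence=0.9),
    PromptStats("CRM with email automation", relevance=0.9, presence=0.1),
    PromptStats("free CRM for freelancers", relevance=0.4, presence=0.0),
]

top = rank_opportunities(tracked, top_n=2)
for item in top:
    print(f"{item.prompt}: {opportunity_score(item):.2f}")
```

The top of that list is your sprint backlog. The prompt where you already appear in most answers drops to the bottom, even though it's highly relevant.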

The feedback loop between AI visibility tracking and content creation is where the real value lives. Weekly reviews keep that loop tight.

Build the habit

I've been through enough technology shifts to know that the teams who win aren't always the ones with the best tools. They're the ones who build the right habits early. Weekly AI visibility reviews are a habit. They take 30 to 60 minutes. They don't require a massive team or expensive infrastructure.

What they require is the decision to treat AI visibility as a first-class metric, not an afterthought bolted onto existing reporting.

If you're still running on quarterly SEO reports and wondering why your AI presence feels unpredictable, the answer is straightforward. You're not looking often enough. The system you're trying to understand moves on a weekly clock. Your reporting should too.

Start this week. Pick your prompts. Run them across models. Write down what you find. Do it again next week. Within a month, you'll have more useful signal about your AI visibility than most companies get in a year.

That's not a pitch. That's just how pattern recognition works. You have to actually look at the data to see what's happening, and you have to look often enough to catch shifts while they still matter.

If you want a structured way to do this, Akii was built for exactly this problem. But even without a tool, the cadence change alone will put you ahead of most teams still waiting for their next quarterly review.