The Scoreboard Everyone Trusted Just Stopped Working

For twenty years, digital marketing had a scoreboard everyone understood. Rank #1 on Google for your category keywords and you were winning. Land on page two and you were losing. The metrics were deterministic, the data was public, and the goal was obvious.

That scoreboard is broken now.

We've moved from ten blue links to synthesized answers. When someone asks ChatGPT, Gemini, or Perplexity for a recommendation, the AI doesn't hand them a list of websites to browse. It synthesizes reviews, compares pricing, and delivers a curated shortlist. It acts as the gatekeeper between your brand and your next customer.

This creates a real data crisis for marketing leaders. There's no Search Console for ChatGPT. There's no PageRank for Claude. Most brands are flying blind, unsure whether they're being recommended or ignored by the AI assistants that increasingly shape how buyers discover products.

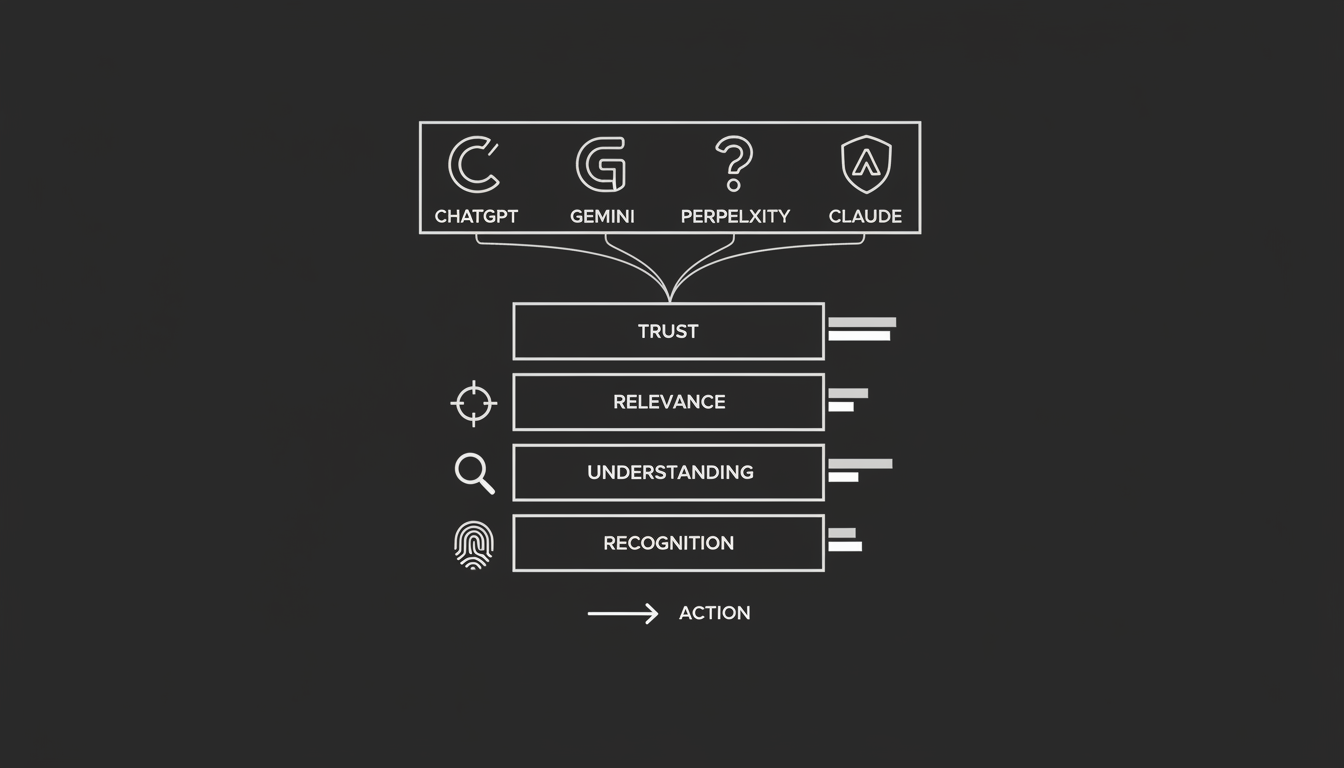

We need a new language of success. Not "rankings" and "traffic," but a system that measures inclusion, understanding, and trust.

That's what this guide covers: a practical, four-layer framework to diagnose how AI models perceive your brand, read the conflicting signals between models, and turn visibility data into action that protects revenue.

Why Do AI Visibility Metrics Even Exist?

Before you can measure success, you have to accept a fundamental shift in how search works.

Traditional search engines are indexes. They match keywords in a query to keywords on a page. Generative engines are reasoning engines. They estimate how likely each entity, including each brand, is to be relevant to the context of a question.

That's the key difference. AI search is probabilistic, not ranked.

When you search Google, results are largely static for a given location. When you ask an AI model a question, the answer is generated fresh. Every single time.

So AI visibility metrics aren't measurements of a fixed position like "Rank #3." They're proxies for selection likelihood. They answer one question: in a simulation of 1,000 potential buyer conversations, how often does my brand get selected as the answer?
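
To make "selection likelihood" concrete, here is a minimal Python sketch of that simulation. It assumes a hypothetical `ask_model` function standing in for a real assistant API. The stub below fakes a probabilistic answer with made-up inclusion odds, so the numbers illustrate the method, not any real model. The idea is simply to sample many answers to the same buyer question and count how often your brand appears.

```python
import random
from math import sqrt


def ask_model(prompt: str, rng: random.Random) -> list[str]:
    """Hypothetical stand-in for a real AI assistant call.

    A real implementation would send `prompt` to a model. This stub fakes a
    generated shortlist: each brand is included independently with a made-up
    probability, so every call can return a different answer.
    """
    inclusion_odds = {"Acme": 0.6, "LedgerCo": 0.3, "BooksPro": 0.45}
    return [brand for brand, p in inclusion_odds.items() if rng.random() < p]


def selection_rate(brand: str, prompt: str, trials: int = 1000, seed: int = 0) -> tuple[float, float]:
    """Estimate how often `brand` is selected across `trials` independent answers.

    Returns (rate, standard_error), using the binomial standard error sqrt(p(1 - p) / n).
    """
    rng = random.Random(seed)
    hits = sum(brand in ask_model(prompt, rng) for _ in range(trials))
    rate = hits / trials
    return rate, sqrt(rate * (1 - rate) / trials)


rate, se = selection_rate("Acme", "What's the best accounting software for a 50-person company?")
print(f"Acme selected in {rate:.1%} of answers (about ±{1.96 * se:.1%} at 95% confidence)")
```

The standard error is the useful part: with 1,000 samples, a swing of a point or two is within noise, which is why chasing small day-to-day movements is a mistake.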

This distinction matters more than most people realize. You're not optimizing to move up a list. You're tuning to be selected by a reasoning engine that builds a unique answer for every user, every time they ask.

What's Wrong With a Single "AI Score"?

In the rush to quantify this new reality, many tools and agencies have defaulted to a single "AI Score" or "Visibility Rank." I get the appeal. Executives want a number. Dashboards want a widget.

But a single number can't represent the complexity of AI visibility.

Score doesn't equal understanding. And understanding doesn't equal recommendation.

A brand might have a high visibility score because it's mentioned frequently, but those mentions could be negative. "Brand X is often cited as a cautionary tale" still counts as a mention. Or a brand might be described incorrectly. "Brand Y is a great free tool" is a serious problem when you're actually an enterprise platform charging six figures.

On top of that, different models reward completely different signals. A brand might score 90/100 on Perplexity because it has strong PR citations, but score 20/100 on Gemini because it lacks Schema markup. Same brand, completely different picture.

To actually manage your position in AI-driven discovery, you have to break visibility down into its component parts. You need a framework, not a score.

The 4 Layers of AI Visibility Metrics

The AI Visibility Metrics Framework deconstructs "visibility" into four diagnostic layers. Analyzing each layer independently lets you pinpoint exactly why you're losing ground and which specific lever to pull: content, code, or PR.

Layer 1: Recognition. Is Your Brand Known?

This is the foundation. Before an AI can recommend you, it must recognize you as a distinct entity in its knowledge graph.

What to measure:

- Entity Detection Rate: When asked "What is [Brand Name]?", does the model hallucinate, say "I don't know," or correctly identify you as a business?

- Hallucination Frequency: How often does the model conflate your brand with a competitor or a generic term?
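
Both Layer 1 metrics can be scored from a batch of answers to "What is [Brand Name]?". The sketch below is a deliberately crude keyword heuristic. It assumes a hypothetical brand "Acme", a list of terms a correct answer should contain, and a list of competitor names (here the hypothetical "RivalCRM"). In practice you'd likely want human review or a separate grading model, but the bookkeeping is the same. Hallucination frequency here folds together conflation with a competitor and confident but wrong descriptions.

```python
from collections import Counter

# Phrases that signal the model doesn't recognize the brand (illustrative, not exhaustive).
UNKNOWN_PHRASES = ("i don't know", "i'm not familiar", "no information")


def classify_answer(answer: str, expected_terms: list[str], competitors: list[str]) -> str:
    """Label one answer to 'What is [Brand Name]?' using simple keyword checks."""
    text = answer.lower()
    if any(phrase in text for phrase in UNKNOWN_PHRASES):
        return "unknown"
    if any(name.lower() in text for name in competitors):
        return "conflated"  # the answer drags a competitor into the brand's identity
    if all(term.lower() in text for term in expected_terms):
        return "correct"
    return "hallucinated"  # a confident answer that lacks the facts we expect


def recognition_metrics(answers: list[str], expected_terms: list[str], competitors: list[str]) -> dict[str, float]:
    """Turn labeled answers into the Layer 1 rates."""
    labels = Counter(classify_answer(a, expected_terms, competitors) for a in answers)
    n = len(answers)
    return {
        "entity_detection_rate": labels["correct"] / n,
        "hallucination_frequency": (labels["hallucinated"] + labels["conflated"]) / n,
        "unknown_rate": labels["unknown"] / n,
    }


answers = [
    "Acme is a B2B CRM platform for mid-market sales teams.",
    "I'm not familiar with a company called Acme.",
    "Acme is another name for RivalCRM's starter plan.",
    "Acme is a free photo-editing app.",
]
print(recognition_metrics(answers, expected_terms=["crm"], competitors=["RivalCRM"]))
```

With these four sample answers, one is correct, one says it doesn't know, and two are hallucinations, giving a detection rate of 0.25 and a hallucination frequency of 0.5.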

How to interpret the signal:

If you fail at Layer 1, you're experiencing what I'd call technical obsolescence. No amount of blog content will fix this. The fix is strictly about entity SEO: create a master entity profile and replicate it consistently across Wikidata, Crunchbase, and LinkedIn, so the model keeps encountering the same facts about your brand wherever it looks.

This is the layer most startups and newer brands underestimate. If the machine doesn't know you exist, nothing else matters.
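
A master entity profile is easy to let drift, because each platform is edited separately. Here is a minimal sketch of a consistency check. The brand, its facts, and the per-platform snapshots are all hypothetical, and it assumes you've already exported what each profile currently says; the code does not call Wikidata, Crunchbase, or LinkedIn.

```python
# Hypothetical master entity profile: the single source of truth for brand facts.
MASTER_PROFILE = {
    "name": "Acme Analytics",
    "founded": "2019",
    "category": "B2B customer data platform",
    "headquarters": "Austin, Texas",
}

# Hypothetical snapshots of what each external profile currently says.
published = {
    "Wikidata": {"name": "Acme Analytics", "founded": "2019",
                 "category": "B2B customer data platform", "headquarters": "Austin, Texas"},
    "Crunchbase": {"name": "Acme Analytics", "founded": "2018",
                   "category": "B2B customer data platform", "headquarters": "Austin, Texas"},
    "LinkedIn": {"name": "Acme Analytics", "founded": "2019",
                 "category": "b2b customer data platform"},
}


def _normalize(value):
    """Ignore case and whitespace differences so only real discrepancies are flagged."""
    return None if value is None else " ".join(str(value).split()).casefold()


def find_inconsistencies(master: dict, profiles: dict) -> list[tuple]:
    """Return (platform, field, expected, found) for every field that drifts from the master."""
    issues = []
    for platform, profile in profiles.items():
        for field, expected in master.items():
            found = profile.get(field)  # None when the platform omits the field
            if _normalize(found) != _normalize(expected):
                issues.append((platform, field, expected, found))
    return issues


for issue in find_inconsistencies(MASTER_PROFILE, published):
    print(issue)
```

The LinkedIn category differs only in capitalization, so it isn't flagged; the wrong founding year on Crunchbase and the missing headquarters on LinkedIn are.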

Layer 2: Understanding. Does the Model Describe You Correctly?

This is where most mid-market brands fail. The AI knows you exist, but it pigeonholes you incorrectly.

What to measure:

- Functional Clarity: Does the model accurately identify your product category? There's a real difference between being called a "CRM" and a "Project Management Tool."

- Attribute Accuracy: Does the model correctly identify your pricing model, target audience, and key features?

How to interpret the signal:

High recognition but low understanding points to a structured data gap. The model has read your text but hasn't ingested the facts in a way it can reliably use. That's a signal to deploy Product and Offer Schema to make your attributes machine-readable.

I've seen this pattern repeatedly. A company wonders why they keep showing up in the wrong recommendation lists. The answer is almost always that their own website sends mixed signals about what they actually do.
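
Product and Offer markup is usually published as JSON-LD. The sketch below builds it with Python's standard `json` module. The product name, category, audience, and price are hypothetical placeholders, and a real page with several plans would typically list one `Offer` per plan. Validate the output with a structured-data testing tool before relying on it.

```python
import json


def product_jsonld(name: str, description: str, category: str,
                   audience: str, price: str, currency: str) -> str:
    """Build schema.org Product markup with a nested Offer, serialized as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "category": category,  # states your category explicitly, e.g. CRM vs. project management
        "audience": {"@type": "BusinessAudience", "audienceType": audience},
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,  # ISO 4217 currency code
        },
    }
    return json.dumps(data, indent=2)


markup = product_jsonld(
    name="Acme Enterprise",
    description="Enterprise accounting platform for multi-entity finance teams.",
    category="Enterprise accounting software",
    audience="Enterprise finance teams",
    price="120000",
    currency="USD",
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The point is that pricing model, audience, and category become explicit fields rather than facts the model has to infer from marketing copy.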

Layer 3: Relevance and Coverage. Which Topics Do You Appear For?

Visibility isn't universal. It's topical.

You might be visible for "best free accounting tools" but invisible for "enterprise accounting software." Both matter, but they represent completely different buyer intent and revenue potential.

What to measure:

- Inclusion Rate by Intent: What percentage of the time are you cited in definitional queries versus transactional queries?

- Competitive Gap: Where are your competitors appearing that you're missing entirely?
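
Both Layer 3 metrics fall out of a simple audit log. Here's a minimal sketch, assuming you've already run a set of prompts, tagged each with an intent, and recorded which brands each answer cited. The queries and brands ("Acme", "LedgerCo", "BooksPro") are hypothetical.

```python
from collections import defaultdict

# Hypothetical audit log: (query, intent, brands cited in the AI's answer).
results = [
    ("what is accounting software", "definitional", {"Acme", "LedgerCo"}),
    ("what is double-entry bookkeeping", "definitional", {"Acme"}),
    ("best enterprise accounting software", "transactional", {"LedgerCo", "BooksPro"}),
    ("accounting software pricing for large companies", "transactional", {"LedgerCo"}),
]


def inclusion_by_intent(rows, brand: str) -> dict[str, float]:
    """Share of queries, per intent, whose answer cites `brand`."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for _query, intent, cited in rows:
        totals[intent] += 1
        hits[intent] += int(brand in cited)
    return {intent: hits[intent] / totals[intent] for intent in totals}


def competitive_gap(rows, brand: str, competitor: str) -> list[str]:
    """Queries where the competitor is cited but `brand` is missing entirely."""
    return [query for query, _intent, cited in rows if competitor in cited and brand not in cited]


print(inclusion_by_intent(results, "Acme"))
print(competitive_gap(results, "Acme", "LedgerCo"))
```

In this made-up log, Acme appears in every definitional answer and in none of the transactional ones, which is the "visible for education, invisible for purchase" gap that matters most for revenue.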

How to interpret the signal:

If you're missing from Layer 3, you lack content coverage. You need to create what I call "quotable canonicals": concise, definitive answers to specific high-intent questions that the AI can lift directly into its response.

Think of it this way: the AI is writing an essay about your category. Are you providing the quotes it wants to use?

Layer 4: Trust and Sentiment. How Confidently Does the Model Speak About You?

This is the most subtle layer. AI models use hedging language when they lack trust. A recommendation that says "Some users suggest..." is far weaker than one that says "The industry standard is..."

Can you feel the difference? Your customers can too.

What to measure:

- Sentiment Score: Is the language positive, neutral, or cautionary?

- Citation Density: Does the answer include clickable citations to third-party authorities like G2, Gartner, or TechCrunch?

- Confidence Markers: Does the model use definitive verbs ("is," "offers") or probabilistic ones ("might," "appears to")?
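
Confidence markers and citation density can be approximated with plain text matching. This sketch assumes hand-picked word lists seeded from the examples above and counts each URL as one citation. It's a crude heuristic (it will count "is" even inside a hedged sentence), and sentiment scoring usually needs a proper classifier, so it's left out here.

```python
import re

# Illustrative marker lists, seeded from the examples in the text.
DEFINITIVE = ("is", "offers", "provides")
HEDGING = ("might", "may", "appears to", "some users suggest")


def _count(phrases, text: str) -> int:
    """Count whole-word occurrences of each phrase in already-lowercased text."""
    return sum(len(re.findall(rf"\b{re.escape(p)}\b", text)) for p in phrases)


def trust_signals(answer: str) -> dict:
    text = answer.lower()
    definitive = _count(DEFINITIVE, text)
    hedging = _count(HEDGING, text)
    markers = definitive + hedging
    return {
        # Share of confidence markers that are definitive; None if the answer has none.
        "confidence_ratio": definitive / markers if markers else None,
        "hedge_count": hedging,
        # Treat every URL in the answer as one citation.
        "citation_count": len(re.findall(r"https?://\S+", answer)),
    }


strong = ("Acme is the industry standard for mid-market CRM and offers native "
          "Slack integration (source: https://example.com/acme-review).")
weak = "Some users suggest Acme might be a decent option, and it appears to integrate with Slack."
print(trust_signals(strong))
print(trust_signals(weak))
```

The strong answer scores a confidence ratio of 1.0 with one citation; the weak one scores 0.0 with three hedges and no citations.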

How to interpret the signal:

Failure at Layer 4 is a GEO (Generative Engine Optimization) problem. You don't need to change your website. You need to change your external reputation. The model needs corroboration from high-trust sources to feel safe recommending you.

This is where brand building and AI visibility converge. It's not just about being known. It's about being trusted enough to be stated as fact.

Why Do Metrics Look So Different Across Models?

One of the most confusing aspects of AI visibility is that you can lead on one platform and be invisible on another. This isn't a bug. It's a signal.

Different models rely on different training data and retrieval behaviors. Understanding these biases helps you interpret the metrics correctly and avoid chasing the wrong fix.

ChatGPT: The Generalist

ChatGPT provides the broadest coverage but is inconsistent with citations. High visibility here signals strong community buzz and a broad presence on the open web, including Common Crawl. Low visibility usually means you lack a basic web footprint.

Where to focus: External quotability and broad content distribution.

Gemini: The Librarian

Gemini skews heavily toward incumbents with strong Google Knowledge Graph entries and Schema markup. If you're invisible on Gemini, you likely have a technical AEO (Answer Engine Optimization) problem: poor schema or inconsistent entity signals.

Where to focus: Google-aligned entity signals (Wikidata, Google Business Profile) and solid Schema implementation.

Perplexity: The Researcher

Perplexity is the most transparent engine, providing clickable citations in the vast majority of its answers. That makes it your best proxy for GEO success. If Perplexity cites you, your external PR and thought leadership strategy is working.

Where to focus: Data-driven reports and placements in high-authority media outlets.

Claude: The Conservative

Claude is risk-averse, citing fewer brands but with high stability. Inclusion here is a lagging indicator. It means you've achieved deep, long-term brand authority.

Where to focus: Reputation management and academic-level authority signals.

The Real Insight

Disagreement between models is valuable data. Win on Perplexity but lose on Gemini? Your PR is working (GEO) but your technical website structure (AEO) is weak. Strong on Gemini but weak on ChatGPT? Your structured data is solid but your broader content footprint is thin.

The gap between models tells you exactly what to fix. That's more useful than any single score.
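
The two cross-model readings above can be written down as explicit rules. In this sketch, the 0-100 per-model scores and the "strong" and "weak" thresholds are illustrative assumptions, not industry standards. The point is that the diagnosis comes from comparing models, not from averaging them.

```python
def diagnose(scores: dict[str, float], strong: float = 60, weak: float = 30) -> list[str]:
    """Apply the cross-model readings to per-model visibility scores (0-100)."""
    high = {model for model, score in scores.items() if score >= strong}
    low = {model for model, score in scores.items() if score <= weak}
    findings = []
    if "Perplexity" in high and "Gemini" in low:
        findings.append("PR is working (GEO), but technical website structure (AEO) is weak")
    if "Gemini" in high and "ChatGPT" in low:
        findings.append("Structured data is solid, but the broader content footprint is thin")
    return findings


print(diagnose({"ChatGPT": 55, "Gemini": 20, "Perplexity": 90, "Claude": 40}))
```

An average of those four scores (about 51) would hide the one thing worth knowing: Perplexity and Gemini disagree sharply.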

How Should You Interpret Metric Changes?

In traditional SEO, a rank drop from #1 to #4 is a crisis. In AI visibility, score fluctuation is normal. Because AI is probabilistic, scores will wobble based on temperature settings and minor model updates.

Don't panic and re-optimize based on daily changes. Instead, watch for these specific patterns.

The Hallucination Spike

If your brand understanding score drops suddenly, check for hallucinations immediately. A drop here usually means the model has ingested conflicting data. Maybe a new press release contradicts your homepage. Maybe a third-party review site has outdated information. This requires immediate entity hygiene work.

The Sentiment Drift

If your inclusion rate stays steady but your sentiment score declines, the model has likely ingested recent negative reviews or critical press. This is a leading indicator. Your inclusion rate will drop soon if trust isn't restored.

I think of sentiment drift as the canary in the coal mine. By the time your inclusion rate drops, you're already behind.
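
Sentiment drift is easy to miss on a dashboard because the headline inclusion number looks fine. A minimal check compares the trend (least-squares slope) of both series over the same window. The daily values, the 0-1 sentiment scale, and the tolerance thresholds below are hypothetical and would need tuning against your own data.

```python
def slope(values: list[float]) -> float:
    """Least-squares slope of `values` against day index 0, 1, 2, ... (needs 2+ points)."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


def sentiment_drift(inclusion: list[float], sentiment: list[float],
                    flat_tol: float = 0.002, drop_tol: float = -0.005) -> bool:
    """True when inclusion is roughly flat but sentiment is trending down."""
    return abs(slope(inclusion)) <= flat_tol and slope(sentiment) <= drop_tol


# Hypothetical two weeks of daily data: inclusion holds near 42%, sentiment (0-1) slides.
inclusion = [0.42, 0.41, 0.43, 0.42, 0.42, 0.41, 0.43, 0.42, 0.42, 0.41, 0.43, 0.42, 0.42, 0.42]
sentiment = [round(0.70 - 0.01 * day, 2) for day in range(14)]
print(sentiment_drift(inclusion, sentiment))
```

Using slopes over the full window, rather than yesterday versus today, keeps one noisy day from triggering the alert.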

The Competitor Breakout

If a competitor's share of voice jumps while yours stays flat, they've likely deployed a new technical strategy. Maybe they published a quotable canonical report. Maybe they implemented FAQ schema across their site. Use competitor intelligence tools to reverse-engineer the shift.

The rule of thumb: look for meaningful improvement over 30-day trend lines rather than reacting to daily noise.
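
A simple way to apply the 30-day rule is to compare the latest 30-day average with the 30 days before it, rather than comparing today with yesterday. The sketch assumes a hypothetical list of one visibility score per day; the values are invented to show daily swings of 10 points hiding a smaller, real improvement.

```python
from statistics import mean


def trend_change(daily_scores: list[float], window: int = 30) -> float:
    """Change in average score between the latest window and the one before it."""
    if len(daily_scores) < 2 * window:
        raise ValueError(f"need at least {2 * window} days of scores")
    previous = mean(daily_scores[-2 * window:-window])
    current = mean(daily_scores[-window:])
    return current - previous


# Hypothetical data: scores swing 10 points day to day, but the baseline rises by 6.
scores = [40, 50] * 15 + [46, 56] * 15
print(f"30-day trend: {trend_change(scores):+.1f} points")
```

A single bad day moves a 30-day average by only one-thirtieth of its size, which is why the trend line, not the daily reading, is the number to act on.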

How Does This Framework Connect to GEO Metrics?

There's an important distinction between the map and the instrument panel.

The framework (what we've covered here) is your map. It tells you the territory: Recognition, Understanding, Relevance, and Trust.

GEO metrics are your instrument panel. These are the specific data points you track daily: citation count, share of voice, and sentiment score.

The framework tells you what to fix. "We have a trust problem." The GEO metrics tell you if the fix is working. "Our Perplexity citation count is up 10% this month."

You need both. The map without instruments is theory. Instruments without a map are noise.

From Metrics to Action: A Repeatable Cycle

Data without action is vanity. The purpose of this framework is to drive an optimization cycle that protects revenue.

Step 1: Diagnose

Use the four-layer framework to categorize your problem.

Is it Recognition? That's Level 1 maturity. Is it Understanding? Level 2. Is it Trust? Level 3 or 4.

You can automate this diagnosis using tools like the Akii AI Visibility Score, which benchmarks your brand across these exact dimensions.

Step 2: Fix

Apply the specific lever for that layer.

Fix Understanding: Deploy Schema markup (Product, Offer, FAQ) to make your data machine-readable. This is AEO.

Fix Trust: Launch a GEO campaign to secure citations in high-authority sources that validate your expertise.

Fix Coverage: Create quotable canonicals for the specific high-intent queries where you're missing.

The specificity matters. "Improve our AI visibility" is not a strategy. "Deploy Product Schema to correct our category classification on Gemini" is a strategy.

Step 3: Validate

Don't wait for sales to drop. Set up continuous monitoring to verify the model has accepted your changes.

Watch for the brand understanding score to tick up, indicating the schema worked. Watch for citation quality to improve, indicating the GEO campaign landed. Watch for inclusion rate by intent to expand, indicating the content gaps are closing.

Then run the cycle again.

What Does Measuring AI Visibility Actually Look Like in Practice?

The era of "set it and forget it" SEO is over. AI models are living systems that learn and unlearn facts about your brand every week. Sometimes faster.

To make this framework operational, you need to move from manual spot-checks to automated surveillance.

Track across models. Don't just check ChatGPT. You need to know how Gemini and Perplexity perceive you simultaneously. The discrepancies are where the insights live.

Monitor change over time. Spot the difference between daily volatility and a genuine reputation crisis. One bad day is noise. Two weeks of declining sentiment is a problem worth solving.

Contextualize with competitors. Your score only matters relative to who else is on the shortlist. If you improve 5% but your top competitor improves 15%, you've lost ground even while moving forward.
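
A quick worked example shows how you can improve and still lose ground. Here share of voice is assumed to be your mentions divided by all brand mentions across the same prompt set, and the mention counts are hypothetical.

```python
def share_of_voice(mentions: dict[str, int], brand: str) -> float:
    """Brand's mentions as a fraction of all brand mentions in the same prompt set."""
    return mentions[brand] / sum(mentions.values())


# Hypothetical mention counts across the same set of buyer prompts, one month apart.
before = {"You": 20, "Competitor": 40, "Others": 40}
after = {"You": 21, "Competitor": 46, "Others": 40}  # you +5%, competitor +15%

print(f"Your share of voice: {share_of_voice(before, 'You'):.1%} -> {share_of_voice(after, 'You'):.1%}")
```

Your mentions went up, but your slice of the shortlist shrank from 20.0% to about 19.6%.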

Tools like the Akii AI Visibility Monitor are built to automate this specific framework, providing the diagnostic data you need to turn visibility from a guessing game into something you can actually manage.

The Point

Stop optimizing for keywords. Start tuning for understanding.

I've watched multiple technology cycles reshape how businesses get found. This one is different in degree, not in kind. The companies that adapted to Google's algorithm changes in 2012 didn't just tweak their meta tags. They rethought how they communicated value. The same thing is happening now, just at a deeper level of the stack.

The brands that win in 2026 will be the ones that speak the language of the machine: clearly, consistently, and with enough external corroboration that the AI doesn't have to hedge when it recommends them.

That's not a mystery. It's a system. And now you have the framework to build one.

If you want to see where your brand stands across all four layers today, start with Akii.